EpiExplorer: A Complete Guide to Live Visualization and Analysis of Large Epigenomic Datasets in 2025

This guide provides a comprehensive overview of EpiExplorer, a powerful platform for the interactive exploration of large-scale epigenomic data.

Abstract

This guide provides a comprehensive overview of EpiExplorer, a powerful platform for the interactive exploration of large-scale epigenomic data. We detail its foundational principles for navigating complex datasets, present step-by-step methodological workflows for multi-omics integration, offer solutions for common troubleshooting and performance optimization, and provide a framework for validation and comparative analysis against other tools. Aimed at researchers and drug development professionals, this article synthesizes current best practices to empower hypothesis generation, accelerate biomarker discovery, and translate epigenetic insights into clinical applications.

Foundations of Epigenomic Exploration: Understanding EpiExplorer's Core Architecture for Data Navigation

The Evolution of Epigenomic Assays

The field of epigenomics has rapidly evolved from bulk population-level assays to high-resolution single-cell multi-omics technologies. This progression has exponentially increased data complexity, revealing cell-type-specific regulatory landscapes critical for understanding development, disease, and therapeutic intervention.

Quantitative Comparison of Key Epigenomic Technologies

The following table summarizes the core quantitative characteristics of major epigenomic assays, illustrating the evolution in scale and resolution.

Table 1: Key Characteristics of Modern Epigenomic Assays

| Assay Type | Typical Resolution | Cells per Experiment | Key Measured Features | Primary Data Output | Typical Dataset Size |

|---|---|---|---|---|---|

| Bulk ChIP-seq | 200-300 bp (peak calls) | 10^5 - 10^7 | Histone modifications, TF binding sites | Peak BED files, BigWig | 5-50 GB |

| Bulk ATAC-seq | < 100 bp (cut sites) | 5x10^4 - 1x10^5 | Chromatin accessibility | Insertion BED files | 10-30 GB |

| scATAC-seq | Single-cell | 5x10^3 - 1x10^5 | Cell-type-specific accessibility | Sparse count matrix | 50-500 GB |

| scRNA-seq | Single-cell | 1x10^3 - 1x10^6 | Transcriptome | Sparse gene count matrix | 50-1000 GB |

| CUT&Tag | 200-300 bp | 5x10^4 - 1x10^5 | Histone marks, TFs with low input | Peak BED files | 5-30 GB |

| Multiome (scATAC+scRNA) | Single-cell | 5x10^3 - 1x10^4 | Paired accessibility & expression | Paired sparse matrices | 200-1000 GB |

Core Experimental Methodologies

Standard Bulk ChIP-seq Protocol

Objective: To map genome-wide binding sites of a transcription factor or histone modification in a population of cells.

Detailed Protocol:

- Crosslinking: Treat cells with 1% formaldehyde for 10 min at room temperature to fix protein-DNA interactions. Quench with 125 mM glycine.

- Cell Lysis & Sonication: Lyse cells in SDS lysis buffer. Sonicate chromatin to 200-500 bp fragments using a Covaris S220 (Settings: 140W Peak Power, 5% Duty Factor, 200 cycles/burst for 12 min).

- Immunoprecipitation: Incubate 50-100 µg of sheared chromatin with 5-10 µg of validated antibody overnight at 4°C with rotation. Capture with Protein A/G magnetic beads.

- Wash & Elution: Wash beads sequentially with Low Salt, High Salt, LiCl, and TE buffers. Elute complexes in elution buffer (1% SDS, 0.1M NaHCO3) at 65°C for 15 min.

- Reverse Crosslinking & Purification: Incubate eluate with 200 mM NaCl at 65°C overnight. Treat with RNase A and Proteinase K. Purify DNA using SPRI beads.

- Library Prep & Sequencing: Use the NEBNext Ultra II DNA Library Prep Kit. Size select for 200-400 bp fragments. Sequence on Illumina NovaSeq (PE 150 bp).

10x Genomics Single-Cell Multiome (ATAC + Gene Expression) Protocol

Objective: To simultaneously profile chromatin accessibility and gene expression in the same single cell.

Detailed Protocol:

- Nuclei Isolation: Suspend fresh/frozen tissue or cells in chilled lysis buffer (10 mM Tris-HCl pH 7.4, 10 mM NaCl, 3 mM MgCl2, 0.1% Tween-20, 0.1% Nonidet P40, 1% BSA, 1 U/µl RNase inhibitor). Incubate on ice for 5 min. Filter through a 40 µm cell strainer.

- Nuclei Counting & Viability: Count using Trypan Blue or AO/PI on a fluorescent counter. Aim for >80% viability and concentration of 700-1200 nuclei/µl.

- Transposition & Partitioning: Incubate nuclei with Transposase in bulk so that open chromatin is tagmented before partitioning. Combine the transposed nuclei with Master Mix and load them onto the 10x Genomics Chromium Next GEM Chip J with Single Cell Multiome Gel Beads. Barcoding of transposed DNA and mRNA then occurs within each droplet (GEM).

- Post-GEM Cleanup & Processing: Break droplets, amplify transposed DNA via PCR (12 cycles). Perform SPRI cleanups.

- Dual Library Construction:

  - ATAC Library: Add i5 and i7 sample indexes via PCR (14 cycles).

  - Gene Expression Library: Capture poly-adenylated RNA from the same GEMs, reverse transcribe, and amplify (14 cycles).

- Sequencing: Pool libraries. Sequence on Illumina: ATAC library (PE 50 bp, high depth), Gene Expression library (PE 50 bp).

CUT&Tag for Low-Input Epigenetic Profiling

Objective: To map histone modifications or transcription factors with high signal-to-noise ratio from low cell numbers.

Detailed Protocol:

- Cell Preparation: Bind 100,000 live cells to Concanavalin A-coated magnetic beads in Binding Buffer (20mM HEPES pH7.5, 10mM KCl, 1mM CaCl2, 1mM MnCl2).

- Permeabilization & Antibody Incubation: Permeabilize cells in Dig-wash buffer (0.05% Digitonin). Incubate with primary antibody (1:50 dilution) in Dig-wash buffer for 2 hr at RT.

- Secondary Antibody & pA-Tn5 Loading: Incubate with Guinea Pig anti-Rabbit (or appropriate) secondary antibody for 1 hr. Wash. Incubate with pre-assembled pA-Tn5 adapter complex (1:250 dilution) for 1 hr.

- Tagmentation: Induce tagmentation by adding MgCl2 to 10mM final concentration. Incubate at 37°C for 1 hr.

- DNA Extraction & PCR: Stop reaction with EDTA, SDS, and Proteinase K. Extract DNA with Phenol-Chloroform. Amplify library with indexed primers (12-15 cycles).

- Cleanup & Sequencing: Clean up with SPRI beads. Sequence on Illumina NextSeq (PE 42 bp).

Key Signaling Pathways in Epigenetic Regulation

Single-Cell Multi-omics Data Generation Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Modern Epigenomics

| Category | Specific Item/Kit | Supplier Examples | Primary Function |

|---|---|---|---|

| Chromatin Shearing | Covaris S220/S2 | Covaris, Inc. | Ultrasonicator for consistent chromatin fragmentation to 200-500 bp. |

| Magnetic Beads | Protein A/G Magnetic Beads, SPRIselect | Thermo Fisher, Beckman Coulter | Antibody capture (ChIP) and size-selective nucleic acid purification. |

| Validated Antibodies | CUT&Tag-Validated Antibodies, ChIP-seq Grade | Cell Signaling, Abcam, Active Motif | Specific immunoprecipitation of histone marks or transcription factors. |

| Transposase | Illumina Tagmentase TDE1, Hyperactive Tn5 | Illumina, Diagenode | Enzymatic fragmentation and adapter tagging for ATAC-seq/CUT&Tag. |

| Single-Cell Platform | Chromium Next GEM Chip J, Controller | 10x Genomics | Microfluidic partitioning of single nuclei for multi-ome libraries. |

| Library Prep | NEBNext Ultra II, 10x Multiome ATAC+Gene Exp | NEB, 10x Genomics | Addition of sequencing adapters and indexes with high efficiency. |

| Nuclei Isolation | Nuclei EZ Lysis Buffer, RNase Inhibitor | Sigma, Takara | Gentle isolation of intact nuclei for single-cell assays. |

| Sequencing | NovaSeq 6000 S4, NextSeq 2000 | Illumina | High-throughput, paired-end sequencing. |

| Data Analysis | Cell Ranger ARC, Seurat, Signac | 10x Genomics, Satija Lab | Pipeline for processing multi-ome data, alignment, and QC. |

| Live Exploration | EpiExplorer Research Platform | (Hypothetical) | Interactive visualization and analysis of large integrated epigenomic datasets. |

Data Integration & Live Exploration with EpiExplorer

Modern multi-omics datasets necessitate platforms capable of integrating diverse data layers (accessibility, expression, methylation, protein binding) for live, hypothesis-driven exploration.

EpiExplorer Research Workflow Logic:

The integration of scalable computational frameworks like EpiExplorer with the complex data from modern epigenomic technologies enables researchers to move from static datasets to dynamic, queryable systems biology models, accelerating discovery in fundamental biology and drug development.

Within the paradigm of live exploration of large epigenomic datasets, as exemplified by the EpiExplorer research initiative, consortium-level projects present both unprecedented opportunity and profound challenge. Initiatives like the Roadmap Epigenomics Project, ENCODE, BLUEPRINT, and CEEHRC generate multi-terabyte datasets encompassing histone modifications, DNA methylation, chromatin accessibility, and 3D conformation across hundreds of cell types and disease states. This technical guide addresses the core challenges of data navigation, integration, and visualization inherent to such scale, providing methodologies for effective real-time scientific exploration.

The volume and complexity of data from major consortia necessitate a clear understanding of scale before attempting navigation.

Table 1: Scale of Major Epigenomic Consortium Data Releases (2022-2024)

| Consortium | Primary Focus | Approximate Public Data Volume | Typical File Types | Key Assay Count (Avg. per Sample) |

|---|---|---|---|---|

| ENCODE4 (2023) | Functional Elements | 1.2 PB | bigWig, bigBed, BAM, HDF5 | 8-15 (ChIP-seq, ATAC-seq, RNA-seq) |

| IHEC (2022 Update) | International Harmonization | 900 TB | bigWig, bedMethyl, cool | 6-12 (WGBS, ChIP-seq, Hi-C) |

| PsychENCODE (Phase II) | Neuroepigenetics | 350 TB | BAM, bigWig, synapse objects | 10+ (snRNA-seq, H3K27ac, Methylation array) |

| 4DN (2024 Portal) | 3D Nucleome | 700 TB | .cool, .hic, .mcool | 3-5 (Hi-C, Micro-C, ChIA-PET) |

Core Methodologies for Data Access and Preprocessing

Effective live exploration requires robust, reproducible pipelines for data ingestion and normalization.

Protocol: Federated Query and Metadata Standardization

Objective: To programmatically identify relevant datasets across distributed consortium repositories without bulk download.

- Query Endpoints: Utilize consortium-specific APIs (e.g., ENCODE's `search`, IHEC's `data-portal`, CEEHRC's `discovery-api`).

- Metadata Harmonization: Map all query results to a unified schema (e.g., following the GA4GH Phenopackets standard) using a custom Python/R script. Key fields must include: `biosample_term_id`, `assay_type`, `target`, `file_format`, `hub_url`.

- Quality Filter: Apply a predefined filter matrix scoring data integrity (read depth, FRiP score for ChIP-seq, bisulfite conversion rate for WGBS). Retain only datasets passing thresholds.

- Hub Generation: Automatically generate a UCSC Genome Browser `trackHub` or a WashU Epigenome Browser `session` file for visual aggregation.
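The harmonization and quality-filter steps above can be sketched in a few lines of Python. This is a minimal illustration, not the platform's implementation: the per-portal field names in `FIELD_MAPS`, the `read_depth` and `frip` keys, and both thresholds are assumptions made for the example, and real portal responses will use different keys.

```python
# Hypothetical per-portal field names; real portal JSON keys may differ.
FIELD_MAPS = {
    "encode": {
        "biosample_term_id": "biosample_id",
        "assay_type": "assay_title",
        "target": "target_label",
        "file_format": "file_format",
        "hub_url": "href",
    },
    "ihec": {
        "biosample_term_id": "sample_ontology",
        "assay_type": "experiment_type",
        "target": "mark",
        "file_format": "format",
        "hub_url": "url",
    },
}

# Illustrative thresholds only; choose values appropriate to each assay.
MIN_READ_DEPTH = 20_000_000
MIN_FRIP = 0.01


def harmonize(record, source):
    """Map one portal-specific record onto the unified schema."""
    mapping = FIELD_MAPS[source]
    unified = {field: record.get(src_key) for field, src_key in mapping.items()}
    unified["source"] = source
    # Carry QC metrics through unchanged so the filter can use them.
    unified["read_depth"] = record.get("read_depth")
    unified["frip"] = record.get("frip")
    return unified


def passes_quality(unified):
    """Require sufficient read depth; check FRiP only when it is reported."""
    depth, frip = unified["read_depth"], unified["frip"]
    if depth is None or depth < MIN_READ_DEPTH:
        return False
    if frip is not None and frip < MIN_FRIP:
        return False
    return True


def select_datasets(records_by_source):
    """Harmonize records from all sources and drop those failing QC."""
    harmonized = [
        harmonize(rec, source)
        for source, records in records_by_source.items()
        for rec in records
    ]
    return [rec for rec in harmonized if passes_quality(rec)]
```

Keeping the field mapping in a plain dictionary means a new consortium can be added without touching the filter logic.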

Protocol: On-the-Fly Normalization for Cross-Study Comparison

Objective: To enable comparative visualization of signal tracks from disparate experimental batches.

- Read Depth Scaling: For sequencing depth normalization, use `bamCoverage` from deepTools (v3.5.3) with parameters `--normalizeUsing CPM --binSize 10`.

- Signal Range Harmonization: Apply percentile-based scaling across the selected bigWig tracks. Using `wiggletools` (v1.2.5), compute the 99th percentile value for each track and scale all values proportionally so that the tracks share a common upper signal range.

- Reference Epigenome Anchoring: For analyses focused on differential signals, define a common control sample (e.g., a standard cell line like GM12878) present across studies. Calculate a scaling factor relative to this control for each track.
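To make the arithmetic behind the first two steps concrete, the sketch below computes CPM values and rescales a track by its 99th percentile on plain Python lists. It stands in for what `bamCoverage` and `wiggletools` do on real files; the function names and the nearest-rank percentile definition are choices made for this example.

```python
import math


def cpm(bin_counts, total_mapped_reads):
    """Counts per million: scale each bin by library size."""
    return [count * 1e6 / total_mapped_reads for count in bin_counts]


def percentile(values, q):
    """Nearest-rank percentile (q in 0-100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(q * len(ordered) / 100))
    return ordered[rank - 1]


def scale_to_percentile(track, q=99, target=1.0):
    """Rescale a track so that its q-th percentile equals `target`."""
    reference = percentile(track, q)
    if reference == 0:
        raise ValueError("percentile is zero; track cannot be rescaled")
    return [value * target / reference for value in track]
```

Scaling to a high percentile rather than the maximum keeps a handful of extreme bins from compressing the rest of the track.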

Visualization Architectures for Live Exploration

The EpiExplorer paradigm emphasizes interactive, hypothesis-testing visualization over static figures.

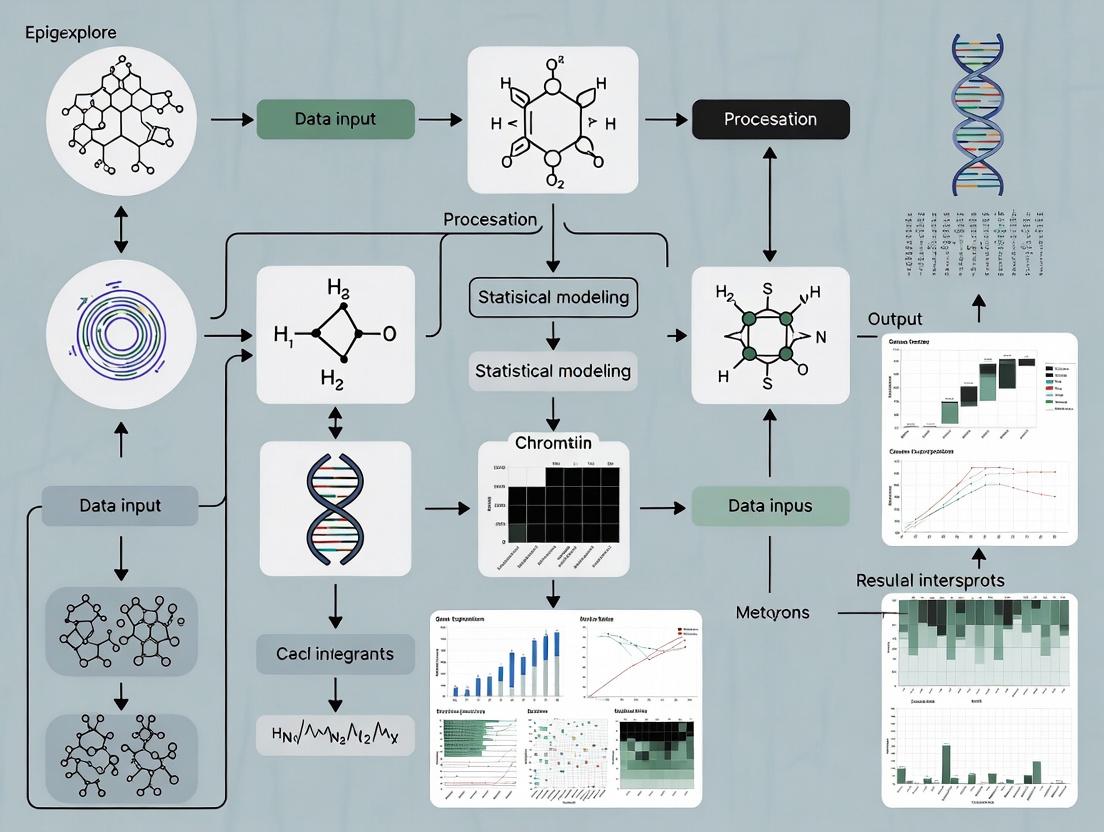

Diagram: EpiExplorer Live Query and Rendering Pipeline

Title: Live EpiExplorer Data Flow

Diagram: Multi-Consortium Data Integration Strategy

Title: Cross-Consortium Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Reagents for Consortium Data Exploration

| Item Name | Category | Function/Benefit | Example Product/Software |

|---|---|---|---|

| High-Memory Compute Node | Hardware | Enables local loading of multiple genome-wide signal tracks for real-time interaction. | AWS r6i.32xlarge / GCP n2-highmem-128 |

| Epigenomic Data Browser | Software | Specialized visualization platform for dense, multi-track data. | WashU Epigenome Browser, JBrowse2, IGV |

| Federated Query API Client | Code Library | Programmatic access to consortium portals without manual website navigation. | encode_rest_api (Python), IhecToolkit (R) |

| Normalization Pipeline | Bioinformatics Tool | Standardizes signal intensities from disparate lab protocols for fair comparison. | deepTools bamCoverage, wiggletools |

| Track Hub Manager | Data Orchestration | Creates a single, manageable pointer set to distributed data files. | UCSC trackHub specification & generators |

| Epigenome Reference Matrix | Reference Data | Provides baseline states for annotation and interpretation of novel data. | Roadmap 25-state ChromHMM model |

| Bulk Data Transfer Solution | Infrastructure | For scenarios requiring local analysis, enables efficient terabyte-scale transfers. | Aspera, rsync over HPN-SSH, Globus |

Advanced Protocol: Real-Time Differential Epigenomic Analysis

Objective: To perform a live comparative analysis between two cellular states (e.g., diseased vs. healthy) across consortium data.

- Cohort Definition: Using harmonized metadata, select at least 5 replicates per condition from one or more consortia, ensuring assay and platform consistency.

- Region-of-Interest (ROI) Definition: Option A: Input a BED file of genomic coordinates. Option B: Perform an initial scan using a pre-computed ChromHMM state (e.g., "Active Enhancer") as ROI.

- Signal Extraction: For each ROI and each bigWig file, use `pyBigWig` (v0.3.18) to extract mean signal intensity.

- Statistical Computation: In real time, apply a Mann-Whitney U test (for non-normal distributions) comparing signal intensities between the two cohorts across each ROI. Correct for multiple testing using the Benjamini-Hochberg procedure (FDR < 0.05).

- Visual Output: Generate an interactive Manhattan plot (for genome-wide scan) or a dynamic heatmap (for pre-defined ROIs) highlighting significantly differential regions, embedded within the EpiExplorer interface.
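The statistical step of this protocol can be sketched with the standard library alone. The version below assumes mean signal per ROI has already been extracted into dictionaries keyed by ROI name. It uses the normal approximation to the Mann-Whitney U distribution, without tie or continuity correction, so p-values for very small cohorts are approximate; a production system would more likely call an established statistics library.

```python
import math


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test via the normal approximation."""
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        average_rank = (i + j) / 2 + 1  # tied values share the mean rank
        for k in range(i, j + 1):
            ranks[k] = average_rank
        i = j + 1
    n1, n2 = len(x), len(y)
    rank_sum_x = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u = rank_sum_x - n1 * (n1 + 1) / 2
    mean_u = n1 * n2 / 2
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)
    z = (u - mean_u) / sd_u
    return u, math.erfc(abs(z) / math.sqrt(2))


def benjamini_hochberg(pvals):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        idx = order[rank - 1]
        running_min = min(running_min, pvals[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted


def differential_regions(cohort_a, cohort_b, alpha=0.05):
    """Map each ROI with adjusted p < alpha to its adjusted p-value."""
    rois = sorted(cohort_a)
    pvals = [mann_whitney_u(cohort_a[r], cohort_b[r])[1] for r in rois]
    adjusted = benjamini_hochberg(pvals)
    return {roi: q for roi, q in zip(rois, adjusted) if q < alpha}
```

With five replicates per condition, the minimum cohort size named above, complete separation between cohorts is needed before an ROI can survive correction across many regions.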

Navigating the scale of consortium epigenomic data is a formidable challenge that demands a shift towards automated, live exploration systems. By implementing standardized query protocols, on-the-fly normalization, and interactive visualization architectures as detailed in this guide, researchers can transform these vast datasets from static archives into dynamic resources for discovery. The EpiExplorer framework provides a conceptual and technical model for this transition, turning the challenge of large-scale data into its greatest asset.

EpiExplorer is a dynamic web-based platform designed for the interactive exploration of large-scale epigenomic datasets. Framed within the broader thesis of enabling live, real-time interrogation of epigenetic data, this guide details its technical architecture, core functionalities, and its pivotal role in accelerating hypothesis generation for researchers and drug development professionals. By integrating heterogeneous data sources and providing intuitive visual analytics, EpiExplorer bridges the gap between massive public repositories and actionable biological insight.

The central thesis of EpiExplorer research posits that scientific discovery in epigenomics is accelerated not just by data accumulation, but through systems that allow for immediate, iterative, and user-driven exploration. Traditional static analysis pipelines are giving way to live exploration platforms where researchers can pose "what-if" questions in real-time, visualize relationships across genomic loci and epigenetic marks, and rapidly form testable hypotheses.

Core Architecture & Data Integration

EpiExplorer's backend is built on a scalable data engine that integrates primary data from key public repositories. The platform performs regular live updates to ensure data currency.

| Data Source | Data Type | Sample Scale (As of Latest Update) | Update Frequency |

|---|---|---|---|

| ENCODE (v4) | ChIP-seq, ATAC-seq, DNase-seq | >20,000 experiments across >1,000 cell/tissue types | Quarterly |

| Roadmap Epigenomics | Histone modifications, DNA accessibility | 127 reference epigenomes | Finalized, used as reference |

| TCGA | DNA methylation (Illumina 27K/450K) | ~11,000 tumor/normal samples | Fixed release |

| GEO (Curated Subset) | User-submitted epigenomic assays | >500,000 sample entries (meta-indexed) | Weekly meta-index |

| gnomAD | Genomic variant frequencies | >140,000 exomes and genomes (v2.1) | With major releases |

Experimental Protocol 1: Data Ingestion and Normalization

- Data Acquisition: Automated scripts query FTP sites and APIs of sources like ENCODE and GEO using scheduled cron jobs.

- Metadata Annotation: Each dataset is tagged with a controlled vocabulary (e.g., cell type, disease state, epigenetic mark, assay type).

- Genomic Alignment: Raw sequencing files (FASTQ) are processed through a standardized pipeline (Bowtie2/BWA for alignment, MACS2 for peak calling).

- Normalization: Signal files (e.g., bigWig) are generated using reads per kilobase per million mapped reads (RPKM) or similar normalization.

- Indexing: Processed data is loaded into a genomic interval database (e.g., PostgreSQL with GiST indexing) for rapid range-based queries.

Interactive Hypothesis Generation Workflow

The platform facilitates a multi-step interactive cycle.

Diagram Title: EpiExplorer Interactive Hypothesis Generation Cycle

Experimental Protocol 2: On-Demand Epigenetic Correlation Analysis

- Region Selection: User defines a genomic locus (e.g., chr1:50,000,000-55,000,000) via the interactive browser.

- Data Matrix Construction: EpiExplorer extracts signal values for all available epigenetic marks (e.g., H3K27ac, H3K9me3, DNAme) across all cell types in the selected region, binning into 1kb windows.

- Correlation Computation: A pairwise Pearson correlation matrix is computed in real-time using WebAssembly-accelerated routines.

- Clustering & Visualization: Results are displayed as an interactive heatmap with hierarchical clustering. Strong positive/negative correlations suggest coordinated regulation.

- Hypothesis Output: A strong negative correlation between DNA methylation and H3K4me3 in a promoter region across tumor samples may suggest a specific silencing mechanism to investigate.
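The correlation step in this protocol reduces to a pairwise Pearson matrix over binned signal vectors. The sketch below shows that computation in plain Python, assuming each mark has already been summarized into one value per 1 kb window. The mark names and the dictionary layout are illustrative, and hierarchical clustering of the resulting matrix is left out.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return float("nan")  # a flat track has no defined correlation
    return cov / math.sqrt(var_x * var_y)


def correlation_matrix(tracks):
    """Return (names, matrix) for a dict of mark name -> binned signal."""
    names = list(tracks)
    matrix = [[pearson(tracks[a], tracks[b]) for b in names] for a in names]
    return names, matrix
```

A value near -1 between a DNA methylation track and an H3K4me3 track over promoter bins is the kind of pattern the hypothesis step refers to.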

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools & Reagents for Validating EpiExplorer-Generated Hypotheses

| Item | Function in Validation | Example Product/Catalog |

|---|---|---|

| Validated Antibodies for ChIP | Immunoprecipitation of specific histone modifications or transcription factors identified as key in exploration. | Anti-H3K27ac (Diagenode, C15410196); Anti-CTCF (Cell Signaling, 2899S) |

| CRISPR Activation/Inhibition Systems | Functional validation of enhancer-promoter links predicted by co-accessibility. | dCas9-VPR (Addgene, 63798); dCas9-KRAB (Addgene, 71237) |

| Bisulfite Conversion Kits | Quantitative validation of DNA methylation patterns predicted from public datasets. | EZ DNA Methylation-Lightning Kit (Zymo Research, D5030) |

| ATAC-seq Kit | Profiling chromatin accessibility in a novel cell model to confirm predicted open regions. | Illumina Tagment DNA TDE1 Enzyme and Buffer Kits (20034197) |

| Multiplexed qPCR Assays | Rapid testing of gene expression changes following epigenetic perturbation. | TaqMan Gene Expression Assays (Thermo Fisher) |

| Pathway Analysis Software | Placing lists of candidate genes from EpiExplorer into biological context. | Ingenuity Pathway Analysis (QIAGEN) or Metascape |

Case Study: Identifying a Novel Enhancer in Disease

Scenario: A drug development scientist explores a GWAS locus linked to autoimmune disease.

Table 3: Quantitative Analysis of a Candidate Enhancer (chr6:123,450,000-123,455,000)

| Epigenetic Mark | Signal in T-cells (RPKM) | Signal in B-cells (RPKM) | Signal in Hepatocytes (RPKM) | Enrichment (T-cell vs. Avg.) |

|---|---|---|---|---|

| H3K27ac | 45.2 | 5.1 | 1.8 | 8.5x |

| H3K4me1 | 32.1 | 15.4 | 3.2 | 3.1x |

| ATAC-seq Signal | 28.7 | 6.3 | 2.1 | 6.2x |

| H3K27me3 | 1.5 | 12.8 | 5.4 | 0.2x |

Diagram Title: From GWAS to Validated Enhancer via EpiExplorer

Experimental Protocol 3: Candidate Enhancer Validation

- Amplification & Cloning: PCR amplify the candidate region from genomic DNA. Clone into a pGL4.23[luc2/minP] vector (Promega) for luciferase assays.

- Cell Transfection: Transfect the reporter construct into relevant cell lines (e.g., Jurkat T-cells) using Lipofectamine 3000.

- Luciferase Assay: Measure firefly luciferase activity 48h post-transfection, normalizing to Renilla control. A >5-fold increase over minimal promoter confirms enhancer activity.

- CRISPR Deletion: Design sgRNAs flanking the enhancer and deliver via nucleofection with Cas9 protein. Confirm deletion by PCR.

- Phenotypic Readout: Perform RNA-seq or qPCR on knockout cells to identify dysregulated target genes, confirming the regulatory link.
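The luciferase readout in this protocol comes down to a ratio of ratios: firefly signal is divided by the Renilla signal from the same well, and the construct's mean normalized activity is divided by that of the minimal-promoter control. A small sketch, with a function name and input layout chosen for illustration:

```python
def fold_over_control(construct_wells, control_wells):
    """Fold change of Renilla-normalized firefly activity.

    Each argument is a list of (firefly, renilla) readings, one per well.
    """
    def mean_normalized(wells):
        return sum(firefly / renilla for firefly, renilla in wells) / len(wells)

    return mean_normalized(construct_wells) / mean_normalized(control_wells)


# Illustrative readings only, not measured data.
enhancer = [(6000, 100), (6600, 110)]
minimal_promoter = [(1000, 100), (1100, 110)]
fold = fold_over_control(enhancer, minimal_promoter)
is_enhancer = fold > 5  # the >5-fold criterion from the protocol
```

Normalizing per well before averaging keeps differences in transfection efficiency from inflating the fold change.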

EpiExplorer operationalizes the thesis of live epigenomic exploration, transforming static datasets into an interactive discovery environment. By providing immediate access to integrated data, intuitive visual analytics, and tools for on-the-fly analysis, it serves as a critical catalyst in the bioinformatics ecosystem, accelerating the journey from genomic observation to mechanistic hypothesis and, ultimately, to therapeutic intervention.

In the pursuit of a broader thesis on the live exploration of large epigenomic datasets, the EpiExplorer research platform emerges as a critical tool. This technical guide deconstructs its modular architecture, designed to empower researchers, scientists, and drug development professionals to interact dynamically with complex multi-omic data, enabling real-time hypothesis generation and validation.

Core Components of the EpiExplorer Interface

EpiExplorer’s interface is built upon four interconnected core components that facilitate live data exploration.

The Data Integration Engine

This engine serves as the backbone, providing real-time access to pre-processed epigenomic datasets. It handles data normalization, format conversion, and dynamic indexing for rapid querying.

The Interactive Visualization Canvas

A dynamic web-based canvas renders complex data types—such as chromatin accessibility tracks, methylation profiles, and histone modification peaks—as interactive, overlayable graphics. Users can zoom, pan, and adjust visualization parameters on the fly.

The Query Builder & Analysis Module

This module allows users to construct complex, multi-faceted queries across datasets using a point-and-click interface or a domain-specific language. It supports operations like cohort filtering, feature intersection, and correlation analysis.

The Results & Annotation Dashboard

Query outputs are presented in a structured dashboard that integrates statistical summaries, raw data tables, and linked external biological annotations from public databases.

Modular Architecture of Data Hubs

EpiExplorer employs a hub-and-spoke model, where centralized Data Hubs manage specific data types or experimental sources. This modular design ensures scalability and maintainability.

Table 1: Primary EpiExplorer Data Hub Specifications

| Data Hub Module | Primary Data Type | Standardized Format | Typical Volume per Dataset | Update Frequency |

|---|---|---|---|---|

| ATAC-Seq Hub | Chromatin Accessibility | BED, bigWig | 5-50 GB | Weekly |

| ChIP-Seq Hub | Histone Modifications | narrowPeak, BAM | 20-200 GB | Bi-weekly |

| WGBS Hub | DNA Methylation | bedMethyl, bigBed | 50-500 GB | Monthly |

| Hi-C Hub | Chromatin Conformation | .hic, .cool | 100 GB - 2 TB | Quarterly |

| Clinical Covariates Hub | Patient Metadata | CSV, TSV | < 1 GB | On ingestion |

Hub Communication Protocol

Hubs communicate via a standardized API using JSON-RPC. Each hub is responsible for its own data validation, versioning, and compliance with the FAIR (Findable, Accessible, Interoperable, Reusable) principles.
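As an illustration of what such an exchange might look like, the sketch below builds and parses messages in the JSON-RPC 2.0 envelope. The source does not state which JSON-RPC version is used, so 2.0 is an assumption here. The method name `hub.query_region` and its parameters are hypothetical and are not a documented EpiExplorer API.

```python
import itertools
import json

_request_ids = itertools.count(1)


def build_request(method, params):
    """Serialize a JSON-RPC 2.0 request with a fresh numeric id."""
    return json.dumps({
        "jsonrpc": "2.0",
        "method": method,
        "params": params,
        "id": next(_request_ids),
    })


def parse_response(raw):
    """Return the result of a JSON-RPC 2.0 response or raise on error."""
    message = json.loads(raw)
    if "error" in message:
        error = message["error"]
        raise RuntimeError(f"hub error {error['code']}: {error['message']}")
    return message["result"]


# Hypothetical method and parameters, for illustration only.
request = build_request(
    "hub.query_region",
    {"chrom": "chr1", "start": 50_000_000, "end": 55_000_000},
)
```

Because every hub would accept the same envelope, a client only needs to know each hub's method names and parameters.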

Experimental Protocols for Data Integration

The following methodology is central to populating EpiExplorer's Data Hubs with user-provided or public data.

Protocol: Bulk Data Ingestion and Normalization for a ChIP-Seq Hub

- Raw Data Acquisition: Download sequence read archive (SRA) files or FASTQ files from repositories like GEO or ENCODE.

- Quality Control & Trimming: Use FastQC v0.12.1 and Trimmomatic v0.39 to assess and trim adapter sequences/low-quality bases.

- Alignment: Map reads to a reference genome (e.g., GRCh38) using Bowtie2 v2.5.1 with default parameters for paired-end reads.

- Peak Calling: Identify regions of significant enrichment using MACS2 v2.2.7.1 with a q-value cutoff of 0.05.

- Normalization & Format Conversion: Generate normalized bigWig files using deepTools bamCoverage v3.5.1 (RPKM normalization). Convert peak files to the standardized narrowPeak format.

- Metadata Annotation: Curate experimental metadata (antibody, cell type, treatment) into a predefined JSON schema.

- Hub Ingestion: Use the `epi-upload` command-line tool to validate, index, and transfer processed files and metadata to the target Data Hub.
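The alignment, peak-calling, and normalization steps above can be expressed as a small command builder. This is a sketch under stated assumptions: the file naming scheme is invented, and the `samtools sort` and `samtools index` steps are not named in the protocol but are added because `bamCoverage` needs a sorted, indexed BAM. QC, trimming, and the `epi-upload` call are omitted, the latter because its options are not given in the text.

```python
def chipseq_commands(sample, reads_1, reads_2, index_prefix, control_bam=None):
    """Return argument lists for a paired-end ChIP-seq processing run."""
    sam = f"{sample}.sam"
    bam = f"{sample}.sorted.bam"

    # Alignment with Bowtie2 defaults for paired-end reads.
    align = ["bowtie2", "-x", index_prefix, "-1", reads_1, "-2", reads_2, "-S", sam]

    # Assumed helper steps: coordinate-sort and index the alignments.
    sort = ["samtools", "sort", "-o", bam, sam]
    index = ["samtools", "index", bam]

    # Peak calling with the q-value cutoff of 0.05 from the protocol.
    peaks = ["macs2", "callpeak", "-t", bam, "-f", "BAMPE",
             "-g", "hs", "-n", sample, "-q", "0.05"]
    if control_bam is not None:
        peaks += ["-c", control_bam]

    # RPKM-normalized signal track.
    signal = ["bamCoverage", "-b", bam, "-o", f"{sample}.bw",
              "--normalizeUsing", "RPKM"]

    return [align, sort, index, peaks, signal]
```

Each list can be passed to `subprocess.run(cmd, check=True)` in order; returning the commands rather than running them makes the pipeline easy to log and test.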

Signaling Pathways and System Workflow

Diagram Title: EpiExplorer Live Query Data Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents & Materials for Epigenomic Profiling

| Item | Function/Benefit in Epigenomics Research | Example Vendor/Catalog |

|---|---|---|

| Tn5 Transposase (Tagment DNA TDE1 Enzyme) | Enzyme for simultaneous fragmentation and adapter tagging in ATAC-Seq; enables rapid library prep. | Illumina (20034197) |

| Magnetic Protein A/G Beads | For immunoprecipitation of antibody-bound chromatin complexes in ChIP experiments. | Thermo Fisher (26162) |

| Anti-H3K27ac Antibody | Validated antibody to specifically pull down chromatin marked with this active enhancer histone modification. | Abcam (ab4729) |

| Bisulfite Conversion Kit | Chemical treatment for converting unmethylated cytosines to uracil while leaving methylated cytosines intact for WGBS. | Zymo Research (D5001) |

| PCR-Free Library Prep Kit | Minimizes amplification bias during next-generation sequencing library construction for superior quantification. | Illumina (20040891) |

| Cell Lysis Buffer (with Protease Inhibitors) | For effective nuclear extraction while preserving protein-DNA interactions and preventing degradation. | Active Motif (15202446) |

| Size Selection Beads | SPRI bead-based cleanup for precise selection of DNA fragment sizes (e.g., 150-300 bp for ChIP-Seq). | Beckman Coulter (B23318) |

| High-Sensitivity DNA Assay Kit | Fluorometric quantification of low-concentration DNA libraries prior to sequencing. | Agilent (5067-4626) |

Key Experiment: Live Cohort Differential Analysis

This protocol exemplifies a core use case within the EpiExplorer thesis: real-time comparative epigenomics.

Experimental Protocol: Live Differential Chromatin Accessibility Analysis

- Cohort Definition: Using the Query Builder, select two cohorts (e.g., Treatment vs. Control) from the ATAC-Seq Hub by filtering on metadata fields.

- Region of Interest Selection: Either input a genomic coordinate (e.g., chr1:50,000,000-55,000,000) or select a feature from a linked gene annotation track.

- Analysis Execution: Initiate the built-in differential analysis pipeline. This triggers the following automated steps on the server:

  - Read Count Aggregation: The engine extracts read counts across all samples in each cohort for the specified region(s). DESeq2 models raw integer counts, so these must be unnormalized counts rather than depth-normalized bigWig signal.

  - Statistical Testing: A DESeq2 model (v1.40.0) is applied in-memory, using the negative binomial distribution to test for significant (adjusted p-value < 0.1) differences in accessibility.

  - Result Compilation: Log2 fold changes, p-values, and mean accessibility signals are tabulated.

- Visualization & Interpretation: Results are instantly displayed:

  - A table of significant differential peaks is shown in the Dashboard.

  - The Visualization Canvas simultaneously updates to show aggregated ATAC-Seq signal tracks for each cohort, aligned with gene models, allowing for immediate visual validation.

Diagram Title: Differential Analysis Workflow in EpiExplorer

The modular architecture of EpiExplorer, centered on specialized Data Hubs and a responsive interface, directly enables the thesis of live epigenomic exploration. By decoupling data management from analysis and visualization, it provides a scalable, robust framework for scientists to interrogate large-scale datasets interactively, accelerating the transition from data to biological insight and therapeutic discovery.

The EpiExplorer research initiative is a framework for the live exploration of large, multi-modal epigenomic datasets to identify regulatory drivers of disease and potential therapeutic targets. Its core thesis posits that dynamic, integrated analysis of public reference epigenomes and proprietary experimental data—such as ChIP-seq, ATAC-seq, and DNA methylation arrays—will accelerate hypothesis generation and validation. This technical guide details the foundational step of this paradigm: the robust loading and computational harmonization of disparate epigenomic tracks, enabling their seamless interrogation within platforms like the EpiExplorer interactive dashboard.

Quantitative Landscape of Public Epigenomic Repositories

The volume and diversity of public epigenomic data have grown exponentially, providing a critical baseline for integration. Key quantitative metrics as of recent surveys are summarized below.

Table 1: Scale and Scope of Major Public Epigenomic Data Resources

| Resource | Primary Consortia | Estimated Datasets | Key Assays | Primary Tissue/Cell Types |

|---|---|---|---|---|

| ENCODE | ENCODE | > 15,000 | ChIP-seq, ATAC-seq, DNase-seq, RNA-seq | > 800 cell lines, tissues, primary cells |

| Roadmap Epigenomics | IHEC | ~ 10,000 | Histone Mods, DNAme, RNA-seq | > 100 primary human tissues & cells |

| Cistrome DB | Cistrome Project | ~ 50,000 | ChIP-seq, DNase-seq | Human, mouse; focus on TFs & chromatin |

| GEO / SRA | NCBI | > 1,000,000 (omic-inclusive) | All high-throughput assays | Pan-disease, pan-organism |

Core Methodologies for Data Loading and Harmonization

Protocol: Unified Data Ingestion Pipeline

This protocol describes the automated pipeline for fetching and initially processing tracks for EpiExplorer.

Metadata Curation & Querying:

- For public data, execute programmatic queries via APIs (e.g., ENCODE's

search, GEO'sEntrez). Use controlled vocabulary (e.g.,assay_title: "ChIP-seq",target: "H3K27ac",biosample_ontology.term_name: "hepatocyte"). - For private data, enforce a strict metadata schema mirroring public standards (assay, target, biosample, replicate, processing pipeline version) upon upload to the local EpiExplorer data lake.

- For public data, execute programmatic queries via APIs (e.g., ENCODE's

File Retrieval & Validation:

- Download processed data files (preference: bigWig for signal, narrowPeak/broadPeak for intervals, .md5 for checksums).

- Validate file integrity against the checksums and confirm the reference genome assembly of each track (e.g., hg38). Convert any track on a different assembly using tools like CrossMap or liftOver chains, standardizing all tracks to a single assembly.
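The integrity check can be sketched with the Python standard library. This is a minimal example that assumes the expected MD5 digest has already been read from the accompanying checksum file; the function names are illustrative.

```python
import hashlib


def md5_of_file(path, chunk_size=1 << 20):
    """Compute the MD5 hex digest of a file, reading it in 1 MiB chunks."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_checksum(path, expected_md5):
    """True when the file's digest equals the published checksum."""
    return md5_of_file(path) == expected_md5.strip().lower()
```

Chunked reading keeps memory use flat even for multi-gigabyte bigWig files.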

Normalization & Signal Transformation:

- For peak files: Convert all to a unified BED format. Apply bedtools merge to create a consensus peak set for cross-track comparisons.

- For signal tracks: Scale to Reads Per Genome Coverage (RPGC), i.e., 1x depth coverage. Use tools like bamCoverage from deepTools with parameters --normalizeUsing RPGC --effectiveGenomeSize 2913022398 (for hg38).
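To make the RPGC idea concrete, the sketch below computes the multiplier that brings a library to 1x average coverage. It is a simplified illustration of the arithmetic, not deepTools' implementation, and the fragment length is an input the caller has to supply.

```python
HG38_EFFECTIVE_GENOME_SIZE = 2_913_022_398  # value quoted in the text for hg38


def rpgc_scale_factor(mapped_reads, fragment_length,
                      effective_genome_size=HG38_EFFECTIVE_GENOME_SIZE):
    """Multiplier that rescales coverage to 1x average depth."""
    if mapped_reads <= 0 or fragment_length <= 0:
        raise ValueError("mapped_reads and fragment_length must be positive")
    # Average depth the library currently provides over the mappable genome.
    current_coverage = mapped_reads * fragment_length / effective_genome_size
    return 1.0 / current_coverage
```

A library with twice the reads receives half the multiplier, which is what makes tracks of different sequencing depth comparable.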

Protocol: Cross-Dataset Batch Effect Harmonization

To enable direct quantitative comparison between public and private tracks, address technical variability.

Reference Peak Set Generation:

- Input: All peak files (public and private) for a given assay (e.g., ATAC-seq) across similar biosamples.

- Method: Use bedtools multiinter followed by bedtools merge to create a universal, non-redundant genomic interval set.
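The merging step can be illustrated in a few lines of Python. This sketch mimics the default behaviour of bedtools merge (overlapping and book-ended intervals are joined) on half-open BED-style tuples; it is meant for intuition, not as a replacement for bedtools on genome-scale inputs.

```python
def merge_intervals(intervals):
    """Merge overlapping or book-ended (chrom, start, end) intervals."""
    merged = []
    for chrom, start, end in sorted(intervals):
        if merged and merged[-1][0] == chrom and start <= merged[-1][2]:
            # Extend the previous interval instead of starting a new one.
            merged[-1][2] = max(merged[-1][2], end)
        else:
            merged.append([chrom, start, end])
    return [tuple(interval) for interval in merged]


peaks = [("chr1", 100, 200), ("chr1", 150, 300), ("chr1", 400, 500), ("chr2", 100, 200)]
reference_set = merge_intervals(peaks)
```

Pooling the peaks from every sample before merging yields one interval set that all tracks can be quantified against.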

Signal Extraction & Quantile Normalization:

- Extract signal values from bigWig files for each interval in the reference peak set using bigWigAverageOverBed.

- Assemble into a matrix (intervals x samples). Apply quantile normalization (preprocessCore R package) to force the empirical distribution of signal intensities to be identical across all tracks.

- Output normalized bigWig files for downstream visualization and analysis in EpiExplorer.
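Quantile normalization itself is compact enough to sketch in pure Python. The version below is a simplified illustration: ties are broken by row order rather than averaged, so results can differ slightly from preprocessCore on tied values.

```python
def quantile_normalize(matrix):
    """Quantile-normalize a matrix given as rows (intervals) x columns (samples)."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    columns = [[row[j] for row in matrix] for j in range(n_cols)]
    # For each sample, the row indices ordered from lowest to highest signal.
    orders = [sorted(range(n_rows), key=column.__getitem__) for column in columns]
    # Reference distribution: mean of the k-th smallest value across samples.
    reference = [
        sum(columns[j][orders[j][k]] for j in range(n_cols)) / n_cols
        for k in range(n_rows)
    ]
    normalized = [[0.0] * n_cols for _ in range(n_rows)]
    for j in range(n_cols):
        for rank, row_index in enumerate(orders[j]):
            normalized[row_index][j] = reference[rank]
    return normalized


signal = [[5, 4, 3], [2, 1, 4], [3, 3, 6], [4, 2, 8]]
normalized_signal = quantile_normalize(signal)
```

After normalization every sample column contains exactly the same set of values; only the assignment of values to intervals differs, which is why the method removes global distribution shifts but can also mask genuine global differences.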

Visualizing the Integration Workflow

Diagram 1: EpiExplorer Data Harmonization Pipeline

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 2: Key Reagents and Computational Tools for Epigenomic Integration

| Item/Tool | Category | Function in Integration |

|---|---|---|

| CrossMap / liftOver | Software Tool | Converts genomic coordinates between different assembly versions (e.g., hg19 to hg38). |

| deepTools (bamCoverage, bigWigCompare) | Software Suite | Generates normalized, comparable signal tracks from aligned sequencing files (BAM). |

| BEDOPS / bedtools | Software Suite | Performs fast, scalable operations (merge, intersect, coverage) on genomic interval files. |

| R/Bioconductor (preprocessCore, rtracklayer) | Software Environment | Implements advanced normalization algorithms and facilitates import/export of genomic tracks. |

| Reference Genome FASTA (hg38/mm39) | Data Resource | The foundational sequence against which all tracks are aligned for consistent analysis. |

| Blacklist Regions File | Data Resource | A set of genomic regions with anomalous signals to be excluded during peak calling and analysis. |

| Consensus Peak Set | Derived Data | A unified set of genomic intervals enabling direct, locus-specific comparison across all integrated tracks. |

| Quantile Normalization Algorithm | Computational Method | Removes technical batch effects by making signal distributions identical across datasets. |

Methodology in Action: Step-by-Step Workflows for Multi-omics Analysis with EpiExplorer

EpiExplorer is a web-based platform designed for the live exploration of large-scale epigenomic datasets. Its interface is structured to facilitate intuitive navigation, real-time data interrogation, and advanced visualization for researchers investigating mechanisms of gene regulation in health and disease. The UI is logically divided into interconnected panels, each serving a specific function in the analytical workflow.

Key Panels and Functional Layout

The main workspace is organized into four primary panels, as detailed in Table 1.

Table 1: Core Interface Panels of EpiExplorer

| Panel Name | Primary Function | Key User Actions | Output/Visualization |

|---|---|---|---|

| Dataset Navigator & Metadata | Browse, select, and filter available epigenomic datasets (e.g., ChIP-seq, ATAC-seq, WGBS). | Select project, cell type, assay, and genomic region. Apply quality filters (e.g., p-value, Q-score). | Lists curated datasets with summary statistics (sample size, peaks, coverage). |

| Genomic Coordinates & Feature Input | Define the genomic region or set of genes/loci for analysis. | Enter coordinates (chr:start-end), upload BED files, or search by gene symbol. | Interactive genome browser preview; list of submitted features. |

| Visualization & Analytics Dashboard | Configure and render multi-track epigenomic data visualizations and plots. | Select tracks, set color schemes, adjust scaling (linear/log), enable overlays. | Integrated Genome Viewer (IGV)-like track display; correlation heatmaps; aggregate plots. |

| Results & Statistics Panel | Display quantitative results, statistical tests, and export options. | Run differential analysis, enrichment tests (GREAT, LOLA). Export figures/data. | Tables of significant peaks/hits; p-value/Q-value summaries; PDF/CSV export links. |

Detailed Controls and Visualization Settings

Precise control over data rendering is critical for accurate interpretation. Key settings are summarized in Table 2.

Table 2: Critical Visualization Controls and Settings

| Control Category | Specific Setting | Default Value | Technical Impact on Data Display |

|---|---|---|---|

| Track Rendering | Data Normalization | Reads Per Million (RPM) | Enables comparison of signal intensity across samples with different sequencing depths. |

| Track Rendering | Y-axis Scale | Linear | Direct representation of signal height. Switching to log scale can highlight low-abundance features. |

| Track Rendering | Track Height | 80 px | Determines the vertical space allocated per data track. Adjustable from 50-200 px. |

| Color Encoding | Signal Colormap | Viridis (sequential) | Maps signal intensity to color; optimized for perceptual uniformity and colorblind accessibility. |

| Color Encoding | Categorical Palette | Set3 (qualitative) | Distinguishes discrete groups (e.g., cell types, conditions) with high contrast. |

| Color Encoding | Overlay Opacity | 70% | Controls transparency when multiple tracks or annotations are overlapped for comparison. |

| Interaction & Querying | Click-to-Query | Enabled | Clicking any data point (peak) retrieves its genomic coordinates, nearest gene, and linked external DB IDs. |

| Interaction & Querying | Dynamic Zoom | 1 kb - 1 Mb | Smooth zooming via scroll or slider; automatically re-fetches data at appropriate resolution. |

| Interaction & Querying | Region Highlighting | Brush tool | Allows manual selection of a sub-region within the viewport for focused statistical analysis. |
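The default RPM normalization and the optional log display scale amount to two small transforms, sketched below. The `log10(x + 1)` form and its pseudocount are assumptions for illustration; the text does not state which log transform EpiExplorer applies.

```python
import math


def reads_per_million(count, total_mapped_reads):
    """Scale a raw read count to Reads Per Million mapped reads."""
    if total_mapped_reads <= 0:
        raise ValueError("total_mapped_reads must be positive")
    return count * 1_000_000 / total_mapped_reads


def log_scale(value, pseudocount=1.0):
    """log10 display transform; the pseudocount keeps zero signal finite."""
    return math.log10(value + pseudocount)
```

Two samples with 50 reads out of 2 million and 100 reads out of 4 million both map to 25 RPM, so their tracks render at the same height.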

Protocol: Live Exploration of Differential Methylation Regions (DMRs)

Objective: To identify and visualize differentially methylated regions between two cellular conditions (e.g., diseased vs. healthy) using whole-genome bisulfite sequencing (WGBS) data within EpiExplorer.

Step-by-Step Methodology:

Dataset Selection:

- In the Dataset Navigator, apply filters: Assay = "WGBS", Project = "BLUEPRINT Epigenome".

- Select two comparative groups: Cell Type: CD4+ T-cells, Condition: Acute Myeloid Leukemia (AML) and Condition: Healthy Donor.

- Load the pre-processed methylation beta-value tracks for 5 samples per condition. EpiExplorer automatically retrieves mean methylation levels per 100 bp bin.

Region Definition:

- In the Genomic Coordinates panel, input a gene locus of interest: Gene Symbol = "DNMT3A". The system resolves to chr2:25,300,000-25,500,000.

Visual Configuration & Statistical Testing:

- In the Visualization Dashboard, add the 10 WGBS tracks. Set colormap to RdYlBu (diverging) to intuitively represent hypermethylation (blue) vs. hypomethylation (red).

- Enable the "Statistical Overlay" tool. Select Test = "Linear Model" accounting for sample group. Set FDR (Q-value) cutoff = 0.01 and minimum methylation difference = 0.2.

- Execute the test. Significant DMRs are highlighted as translucent bars across the tracks.
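The thresholding applied by the statistical overlay can be sketched as follows. The example assumes per-region p-values have already been produced by the linear model and uses the Benjamini-Hochberg procedure for the Q-values; whether EpiExplorer uses exactly this FDR method is not stated in the text, and the dictionary keys are illustrative.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values (Q-values) in input order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])
    q_values = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value down so Q-values stay monotone.
    for rank in range(n, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * n / rank)
        q_values[index] = running_min
    return q_values


def call_dmrs(regions, q_cutoff=0.01, min_delta=0.2):
    """Keep regions passing both the Q-value and methylation-difference cutoffs."""
    q_values = benjamini_hochberg([r["p_value"] for r in regions])
    kept = []
    for region, q in zip(regions, q_values):
        delta = region["mean_beta_case"] - region["mean_beta_control"]
        if q <= q_cutoff and abs(delta) >= min_delta:
            kept.append({**region, "q_value": q, "delta_beta": delta})
    return kept
```

Requiring both cutoffs avoids reporting regions that are statistically significant but differ by only a few percent methylation.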

Result Interpretation and Export:

- The Results Panel populates a table listing all DMRs within the viewport. Columns include: genomic coordinates, mean β (AML), mean β (Healthy), difference, p-value, and Q-value.

- Select a significant DMR (e.g., chr2:25,345,600-25,346,200). Click "Export Region View" to generate a publication-ready PNG (300 DPI) of the configured tracks and highlights.

Title: DMR Analysis Workflow in EpiExplorer

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents for Epigenomic Profiling Experiments

| Reagent / Kit Name | Provider | Primary Function in Epigenomics |

|---|---|---|

| NEBNext Ultra II DNA Library Prep Kit | New England Biolabs | High-efficiency library preparation for ChIP-seq, ATAC-seq, and WGBS, enabling input from low-yield immunoprecipitations. |

| Illumina Infinium MethylationEPIC BeadChip Kit | Illumina | Array-based profiling of >850,000 CpG sites across the human genome, covering enhancers and gene bodies. |

| Cell Signaling Technology Magnetic Beads (Protein A/G) | CST | For chromatin immunoprecipitation (ChIP), used to isolate protein-DNA complexes with specific antibodies (e.g., for H3K27ac, H3K9me3). |

| Diagenode Bioruptor Pico | Diagenode | Ultrasonic shearing device for consistent chromatin fragmentation to optimal sizes (200-600 bp) for ChIP-seq. |

| Zymo Research EZ DNA Methylation-Lightning Kit | Zymo Research | Rapid bisulfite conversion of unmethylated cytosines in genomic DNA for downstream WGBS or targeted sequencing. |

| 10x Genomics Single Cell ATAC-seq Kit | 10x Genomics | Enables high-throughput profiling of chromatin accessibility in thousands of single nuclei, identifying cell-type-specific regulatory elements. |

| Active Motif CUT&RUN Assay Kit | Active Motif | Enzyme-targeted cleavage under native conditions for mapping protein-DNA interactions with low background and high resolution. |

Within the EpiExplorer framework for live exploration of large epigenomic datasets, the initial workflow for importing and visualizing DNA methylation data is foundational. This guide details the technical procedures for handling two primary data types: array-based data from platforms like Illumina's Infinium MethylationEPIC (5-base chemistry) and sequencing-based data from Whole-Genome Bisulfite Sequencing (WGBS). Efficient import and immediate visualization are critical for hypothesis generation and quality assessment in drug development and basic research.

Illumina Infinium Array Data (5-Base)

The current Illumina EPIC v2.0 array interrogates over 935,000 CpG sites. Data is typically delivered as an IDAT file pair (Red and Green channel) per sample.

Import Protocol:

- File Structure: Organize IDAT files in a single directory, optionally with a sample sheet (CSV) linking IDAT base names to phenotypic data.

- R/Bioconductor Method (minfi package):

- Quality Control: Generate quality control plots (e.g., log median intensity) to identify failed arrays.

- Normalization: Apply a normalization method (e.g., preprocessQuantile, preprocessNoob) to correct for technical variation.

- Extraction: Obtain beta values (methylation proportion: M/(M+U+100)) or M-values (log2 ratio of methylated/unmethylated) for downstream analysis.
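The two methylation measures named in the extraction step can be written out directly. In this sketch the beta-value offset of 100 follows the formula given above, while the M-value offset `alpha` is an added assumption that keeps the ratio defined when an intensity is zero.

```python
import math


def beta_value(methylated, unmethylated, offset=100):
    """Beta value: methylated intensity as a proportion, M / (M + U + offset)."""
    return methylated / (methylated + unmethylated + offset)


def m_value(methylated, unmethylated, alpha=1):
    """M-value: log2 ratio of methylated to unmethylated intensity."""
    return math.log2((methylated + alpha) / (unmethylated + alpha))
```

Beta values are easier to interpret biologically, whereas M-values have more uniform variance across the methylation range and are usually preferred for statistical testing.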

Whole-Genome Bisulfite Sequencing (WGBS) Data

WGBS provides single-base resolution methylation data. Processed data is often represented in a BED-like format or as a tab-delimited matrix of methylation percentages.

Import Protocol:

- Common Input Formats:

- Bismark Coverage File: A per-sample file with columns: chr, start, end, methylation%, count methylated, count unmethylated.

- MethylKit Object or Tabix-indexed file: For efficient large-scale access.

- R/Bioconductor Method (methylKit):

- Filtering & Normalization: Filter by coverage and normalize read depths across samples.
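As a language-neutral view of the import and coverage filter, the sketch below parses lines in the six-column layout described above and drops low-coverage sites. The minimum coverage of 10 reads is an illustrative threshold, and the methylation percentage is recomputed from the counts rather than trusted from the file.

```python
def parse_coverage_line(line):
    """Parse one tab-delimited line: chr, start, end, methylation%, methylated, unmethylated."""
    chrom, start, end, _percent, n_meth, n_unmeth = line.rstrip("\n").split("\t")
    n_meth, n_unmeth = int(n_meth), int(n_unmeth)
    coverage = n_meth + n_unmeth
    return {
        "chrom": chrom,
        "start": int(start),
        "end": int(end),
        "coverage": coverage,
        "percent_methylation": 100.0 * n_meth / coverage if coverage else None,
    }


def filter_by_coverage(records, min_coverage=10):
    """Keep CpG sites supported by at least min_coverage reads."""
    return [r for r in records if r["coverage"] >= min_coverage]


lines = ["chr1\t100\t100\t75.0\t15\t5\n", "chr1\t200\t200\t50.0\t2\t2\n"]
sites = filter_by_coverage([parse_coverage_line(line) for line in lines])
```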

Table 1: Comparison of Primary DNA Methylation Profiling Methods

| Feature | Illumina Infinium EPIC v2.0 | Whole-Genome Bisulfite Sequencing (WGBS) |

|---|---|---|

| Genome Coverage | ~935,000 pre-selected CpG sites (~3% of total CpGs) | All ~28 million CpGs in human genome (theoretical) |

| Resolution | Single CpG site | Single-base pair |

| Typical Read/Coverage Depth | High signal-to-noise per probe | 20-30x recommended for robust % methylation calls |

| Sample Throughput | High throughput (8 samples per BeadChip) | Lower throughput, higher cost per sample |

| Cost per Sample (Approx.) | $150 - $300 | $1,000 - $3,000+ |

| Best For | Population studies, clinical biomarker screening, high-sample-size cohorts | Discovery, regulatory element analysis, non-CpG methylation, novel biomarker identification |

| Key Data Output | Beta value (0-1) or M-value | Methylated/Unmethylated read counts, % methylation |

Workflow Diagrams

Core Data Import and Visualization Workflow

Title: DNA Methylation Data Import and Visualization Pipeline

EpiExplorer Live Exploration Integration

Title: EpiExplorer Live Analysis Integration Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for DNA Methylation Analysis Workflows

| Item | Function/Description | Example Product/Kit |

|---|---|---|

| Bisulfite Conversion Kit | Chemically converts unmethylated cytosines to uracils, while leaving 5-methylcytosines unchanged. Critical first step for bisulfite-based methods. | Zymo Research EZ DNA Methylation-Lightning Kit, Qiagen EpiTect Bisulfite Kit |

| DNA Methylation Array | Microarray slide containing probes for specific CpG sites. The core consumable for Illumina-based profiling. | Illumina Infinium MethylationEPIC v2.0 BeadChip |

| High-Fidelity Post-Bisulfite DNA Polymerase | PCR enzyme designed to amplify bisulfite-converted DNA (rich in uracil/thymine) with high accuracy and minimal bias. | TaKaRa EpiTaq HS, Qiagen HotStarTaq Plus |

| Methylated & Unmethylated DNA Controls | Genomic DNA standards (e.g., from human cell lines) treated to be fully methylated or unmethylated. Used to assess bisulfite conversion efficiency and assay specificity. | Zymo Research Human Methylated & Non-methylated DNA Set |

| Methylation-Specific qPCR Assays | Primers and probes designed to differentiate methylated and unmethylated alleles after bisulfite conversion. Used for validation of array/seq findings. | MethyLight assays, Custom TaqMan assays (Thermo Fisher Scientific) |

| Genomic DNA Isolation Kit (Methylation-Sensitive) | Kit optimized for high-molecular-weight DNA extraction without introducing methylation artifacts. Often includes RNAse treatment. | QIAamp DNA Mini Kit, DNeasy Blood & Tissue Kit |

| Bioinformatics Software Suite | Packages for processing, normalizing, and statistically analyzing methylation data. Essential for the computational workflow. | R/Bioconductor (minfi, methylKit, DSS), SeqMonk, Bismark |

Experimental Protocols for Key Validation Steps

Protocol: Validation of DMRs by Pyrosequencing (Post-Discovery)

- Objective: Quantitatively validate differentially methylated regions (DMRs) identified from array or WGBS data in an extended sample set.

- Steps:

- Primer Design: Using software (e.g., PyroMark Assay Design), design a PCR primer pair in which one primer is biotinylated, plus a sequencing primer, to amplify and read the bisulfite-converted region of interest. Ensure amplicon size < 200 bp.

- Bisulfite Conversion: Convert 500 ng of sample genomic DNA using a dedicated kit (see Toolkit).

- PCR Amplification: Perform PCR on converted DNA using the designed primers. Verify amplicon size on an agarose gel.

- Pyrosequencing Preparation: Bind 10-20 µL of biotinylated PCR product to Streptavidin Sepharose High Performance beads. Denature and wash to obtain a single-stranded template.

- Sequencing Run: Load template into a Pyrosequencer (e.g., Qiagen PyroMark Q48) with the appropriate sequencing primer and nucleotide dispensation order. The instrument measures light emitted upon nucleotide incorporation, proportional to the number of C or T bases incorporated at each CpG.

- Data Analysis: Use instrument software to calculate the percentage methylation at each interrogated CpG site within the amplicon by comparing C/T peak heights. Compare results to high-throughput discovery data.
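The final comparison step reduces to simple arithmetic, sketched below. The peak heights are assumed to be exported from the instrument software, and the mean absolute difference is only one possible concordance measure, chosen here for illustration.

```python
def percent_methylation(c_peak, t_peak):
    """Methylation at one CpG from C and T peak heights: 100 * C / (C + T)."""
    total = c_peak + t_peak
    if total <= 0:
        raise ValueError("peak heights must sum to a positive value")
    return 100.0 * c_peak / total


def mean_abs_difference(pyro_percent, discovery_beta):
    """Mean absolute gap between pyrosequencing (%) and discovery beta values (0-1)."""
    diffs = [abs(p - 100.0 * b) for p, b in zip(pyro_percent, discovery_beta)]
    return sum(diffs) / len(diffs)
```

A small mean difference across the CpGs of a DMR suggests the array or WGBS estimate was reproduced by the orthogonal assay.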

This technical guide details a core workflow within the broader thesis on the live exploration of large epigenomic datasets using the EpiExplorer research framework. Comparative genomics across different human genome assemblies, such as the GRCh38 (hg38) reference and the complete telomere-to-telomere (T2T) CHM13 assembly, is fundamental for contextualizing epigenomic findings. Discrepancies in sequence, structure, and annotation between assemblies can significantly impact the interpretation of chromatin accessibility, histone modification, and DNA methylation data. This workflow ensures that epigenomic signals analyzed in EpiExplorer are accurately mapped and their biological relevance assessed against the most complete genomic context.

Core Data and Quantitative Comparisons

The primary differences between hg38 and T2T-CHM13 stem from the resolution of gaps and structural variants. The table below summarizes key quantitative metrics.

Table 1: Quantitative Comparison of hg38 and T2T-CHM13 Assemblies

| Metric | GRCh38 (hg38) | T2T-CHM13 (v2.0) | Impact on Epigenomic Analysis |

|---|---|---|---|

| Total Length | ~3.1 Gb | ~3.1 Gb | Overall coverage similar; T2T fills missing sequences. |

| Gap Count | 349 | 0 | Eliminates ambiguous mapping in pericentromeric, telomeric regions. |

| Resolved Bases | 2.9 Gb | 3.1 Gb | ~200 Mb of novel sequence available for epigenomic signal investigation. |

| Centromere Model | Represented by gap (3 Mb each) | Fully resolved alpha satellite arrays | Enables first-ever analysis of centromeric epigenetics. |

| Ribosomal DNA Arrays | Incomplete, single model | Fully resolved, 5 acrocentric chromosomes | Allows study of rDNA chromatin regulation. |

| Annotation (GENCODE v45) | ~60,000 genes | Lift-over available; de novo annotation ongoing | Critical for assigning epigenomic signals to correct gene isoforms. |

| Major Structural Variants | Partially represented | Fully resolved (e.g., 2q13/15, 17q21.31 inversions) | Corrects mislocalization of regulatory elements like enhancers. |

Experimental Protocols for Comparative Epigenomics

Protocol 3.1: Cross-Assembly Mapping and Liftover of Epigenomic Data

Purpose: To transfer epigenomic feature coordinates (e.g., ChIP-seq peaks, ATAC-seq regions) from hg38 to T2T-CHM13.

- Input: BED or BEDPE files of genomic intervals in hg38 coordinates.

- Liftover Tool: Use UCSC liftOver with an appropriate chain file (download from UCSC Genome Browser: hg38ToT2T-CHM13.v2.0.chain).

- Command:

- Post-Processing: Analyze unmapped.bed features, which fall in hg38 regions that do not map cleanly to T2T. Signal in these regions, and in sequence novel to T2T, requires de novo alignment (see Protocol 3.2).
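The liftover call and the unmapped-feature review can be scripted along these lines. The argument order follows liftOver's standard usage (input, chain, output, unmapped); the input and output file names are placeholders, and the summary assumes the usual layout of the unmapped file, where a comment line starting with `#` gives the reason for the record that follows it.

```python
from collections import Counter


def liftover_command(input_bed, chain_file, output_bed, unmapped_bed="unmapped.bed"):
    """Build the argument list for a liftOver run (e.g., for subprocess.run)."""
    return ["liftOver", input_bed, chain_file, output_bed, unmapped_bed]


def summarize_unmapped(lines):
    """Count unmapped features per reason given in the preceding '#' comment."""
    reasons = Counter()
    current_reason = "unspecified"
    for line in lines:
        line = line.strip()
        if not line:
            continue
        if line.startswith("#"):
            current_reason = line.lstrip("#").strip()
        else:
            reasons[current_reason] += 1
    return reasons


example = ["#Deleted in new", "chr1\t10\t20", "#Partially deleted in new",
           "chr1\t30\t40", "#Deleted in new", "chr2\t5\t9"]
summary = summarize_unmapped(example)
```

Grouping failures by reason helps decide which features merit realignment under Protocol 3.2 and which can be set aside.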

Protocol 3.2: De Novo Alignment of Raw Sequencing Data to T2T-CHM13

Purpose: To directly map sequencing reads to the T2T assembly for maximal accuracy, especially for novel sequences.

- Input: Raw FASTQ files from epigenomic assays (ChIP-seq, ATAC-seq, WGBS).

- Indexing: Create a Bowtie2 or BWA index for the T2T-CHM13 reference genome (FASTA file).

- Alignment: Align reads using an aligner suitable for the assay (e.g., bowtie2 for ChIP-seq and ATAC-seq, a bisulfite-aware aligner such as Bismark for WGBS). Use sensitive parameters for repetitive regions.

- Post-Alignment: Sort, deduplicate, and create alignment indices (using samtools). Generate bigWig files for visualization in EpiExplorer.

Protocol 3.3: Validation of Assembly-Specific Epigenomic Signals

Purpose: To confirm that epigenomic signals in discrepant regions are biologically real and not mapping artifacts.

- Target Identification: Identify regions with divergent signal coverage or peak calls between hg38 and T2T mappings (e.g., using bedtools intersection).

- PCR Primer Design: Design primers spanning the region of interest, ensuring specificity to the T2T sequence.

- Experimental Validation: Perform quantitative PCR (qPCR) or droplet digital PCR (ddPCR) on ChIP or input DNA from the original sample, quantifying enrichment specifically in the T2T-resolved locus.

- Analysis: Compare fold-enrichment between assemblies to validate the presence or absence of the epigenomic mark.

Visualization of Workflows and Relationships

Diagram 1: Comparative Genomics Workflow for EpiExplorer

Diagram 2: Mapping Artefact Resolution Between Assemblies

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Comparative Genomics Analysis

| Item | Function/Description | Example Product/Code |

|---|---|---|

| T2T-CHM13 Reference Genome | Complete, gap-free human genome assembly for alignment and annotation. | NCBI Assembly: GCA_009914755.4 (v2.0) |

| Liftover Chain File | File specifying genomic coordinate conversions between assemblies. | UCSC: hg38ToT2T-CHM13.v2.0.chain.gz |

| High-Fidelity DNA Polymerase | For accurate amplification of assembly-specific sequences during validation (Protocol 3.3). | Takara Bio: PrimeSTAR GXL DNA Polymerase |

| ddPCR Supermix | Enables absolute quantification of ChIP enrichment at specific loci without standard curves. | Bio-Rad: ddPCR Supermix for Probes (No dUTP) |

| ChIP-Grade Antibody | Validated antibody for the specific histone modification or transcription factor of interest. | Cell Signaling Technology, Active Motif, Abcam catalogues |

| Cross-Assembly Genome Browser | Visualization tool to simultaneously view data on hg38 and T2T-CHM13. | UCSC Genome Browser (t2t-hub), IGV |

| EpiExplorer Software Framework | Platform for live, integrative exploration of mapped epigenomic datasets across assemblies. | Custom framework as per thesis context |

This technical guide details a core workflow within the EpiExplorer research platform for the live exploration of large epigenomic datasets. The integration of ChIP-seq (Chromatin Immunoprecipitation Sequencing), ATAC-seq (Assay for Transposase-Accessible Chromatin sequencing), and Hi-C data provides a multi-dimensional view of chromatin states, enabling researchers to correlate transcription factor binding, chromatin accessibility, and 3D genomic architecture. This integrative analysis is critical for identifying functional regulatory elements and understanding gene regulation mechanisms in development, disease, and drug discovery contexts.

Table 1: Typical Sequencing Specifications and Outputs for Integrated Epigenomic Assays

| Assay | Recommended Sequencing Depth (Human Genome) | Key Output Metrics | Typical Resolution | Primary Use in Integration |

|---|---|---|---|---|

| ChIP-seq (Transcription Factor) | 20-50 million reads | Peak count, FRiP score, motif enrichment | 100-500 bp | Identifying protein-DNA binding sites. |

| ChIP-seq (Histone Mark) | 40-60 million reads | Broad domain or sharp peak calls, signal enrichment | 100-1000 bp | Defining chromatin states (e.g., enhancers, promoters). |

| ATAC-seq | 50-100 million reads | Open chromatin peak count, TSS enrichment score | <100 bp | Mapping accessible chromatin regions. |

| Hi-C (Mid-depth) | 500 million - 1 billion read pairs | Contact matrix, TAD boundaries, interaction scores | 5-25 kb | Mapping chromatin loops and topologically associating domains (TADs). |

Table 2: Key Software Tools for Integrative Analysis

| Tool Name | Primary Function | Input Data Types | Key Output |

|---|---|---|---|

| EpiExplorer (Platform Context) | Live visualization & overlay | Processed bigWig, BED, .hic | Unified browser view, correlation plots. |

| ChromHMM/Segway | Chromatin state segmentation | Multiple ChIP-seq, ATAC-seq tracks | Genome segmentation into discrete states. |

| FitHiC2/HiCExplorer | Significant interaction calling | Hi-C contact matrices | Significant chromatin loops, TADs. |

| bedtools | Genomic interval operations | BED, GFF, VCF files | Overlaps, intersections, merges of features. |

Experimental Protocols

Protocol 1: Standard ChIP-seq Library Preparation and Sequencing

Objective: Generate genome-wide maps of transcription factor binding or histone modifications.

- Crosslinking: Treat cells with 1% formaldehyde for 10 min at room temperature. Quench with 125 mM glycine.

- Cell Lysis & Chromatin Shearing: Lyse cells and isolate nuclei. Sonicate chromatin to 100-500 bp fragments using a Covaris ultrasonicator.

- Immunoprecipitation: Incubate sheared chromatin with 2-5 µg of target-specific antibody (e.g., H3K27ac, H3K4me3, or TF antibody) overnight at 4°C. Use Protein A/G magnetic beads for capture.

- Wash, Reverse Crosslink, & Purify: Wash beads stringently. Reverse crosslinks at 65°C overnight. Treat with RNase A and Proteinase K. Purify DNA using silica columns.

- Library Prep & Sequencing: Prepare sequencing library using kits (e.g., NEBNext Ultra II). Amplify with 8-12 PCR cycles. Sequence on Illumina platform (2x 150 bp recommended).

Protocol 2: ATAC-seq Library Preparation (Omni-ATAC Protocol)

Objective: Map regions of open chromatin.

- Nuclei Isolation: Harvest 50,000-100,000 viable cells. Lyse with cold ATAC-seq Lysis Buffer (10 mM Tris-HCl pH 7.4, 10 mM NaCl, 3 mM MgCl2, 0.1% Igepal CA-630). Pellet nuclei.

- Tagmentation: Resuspend nuclei in Transposition Mix (25 µL 2x TD Buffer, 2.5 µL Transposase (Illumina Tn5), 22.5 µL Nuclease-free water). Incubate at 37°C for 30 min. Immediately purify using a MinElute PCR Purification Kit.

- Library Amplification: Amplify tagmented DNA with 1x NEBNext PCR master mix and barcoded primers (Ad1_noMX, Ad2.x). Determine cycle number via qPCR side reaction (typically 8-12 cycles).

- Cleanup & Sequencing: Purify library using SPRI beads. Quality check with Bioanalyzer. Sequence on Illumina platform (2x 75 bp sufficient).

Protocol 3: In-situ Hi-C Library Preparation

Objective: Capture genome-wide chromatin interactions.

- Crosslinking & Digestion: Crosslink cells with 2% formaldehyde. Lyse cells. Digest chromatin with a 4-cutter restriction enzyme (e.g., MboI or DpnII).

- Marking & Proximity Ligation: Fill restriction fragment overhangs with biotinylated nucleotides. Perform proximity ligation within intact nuclei, which favors ligation between fragments that were spatially close in the crosslinked chromatin.

- Reverse Crosslinking & Shearing: Reverse crosslinks and purify DNA. Shear DNA to 300-500 bp via sonication.

- Pull-down & Library Prep: Perform a streptavidin pull-down to enrich for biotinylated ligation junctions. Prepare a standard Illumina sequencing library from the pulled-down material.

- Sequencing: Sequence deeply on an Illumina HiSeq/X or NovaSeq platform (2x 150 bp recommended for paired-end reads).

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Kits for Featured Experiments

| Item Name | Vendor Examples (Illustrative) | Primary Function in Workflow |

|---|---|---|

| Formaldehyde (37%) | Thermo Fisher, Sigma-Aldrich | Crosslinking agent for ChIP-seq and Hi-C to fix protein-DNA and protein-protein interactions. |

| Protein A/G Magnetic Beads | MilliporeSigma, Pierce, Diagenode | Capture of antibody-bound chromatin complexes during ChIP-seq immunoprecipitation. |

| Specific Antibodies (e.g., H3K27ac, CTCF) | Active Motif, Abcam, Cell Signaling Technology | Target-specific recognition of histone modifications or transcription factors for ChIP-seq. |

| Illumina Tn5 Transposase | Illumina (Nextera Kit) | Simultaneous fragmentation and adapter tagging of accessible genomic DNA in ATAC-seq. |

| NEBNext Ultra II DNA Library Prep Kit | New England Biolabs | High-efficiency library preparation from low-input ChIP-seq or ATAC-seq DNA. |

| DpnII / MboI Restriction Enzyme | New England Biolabs | Genome digestion for in-situ Hi-C, defining the baseline resolution of contact maps. |

| Biotin-14-dATP | Thermo Fisher | Labeling of digested DNA ends in Hi-C to allow enrichment of ligation junctions. |

| Streptavidin C1 Magnetic Beads | Thermo Fisher | Pulldown of biotinylated Hi-C ligation products prior to library preparation. |

| SPRIselect Beads | Beckman Coulter | Size selection and clean-up of DNA libraries across all protocols. |

| Qubit dsDNA HS Assay Kit | Thermo Fisher | Accurate quantification of low-concentration DNA samples (e.g., post-ChIP). |

Within the EpiExplorer framework for live exploration of large epigenomic datasets, the identification of candidate biomarkers and regulatory elements represents a critical translational objective. This process moves beyond cataloging epigenetic variation to pinpointing functional components with diagnostic, prognostic, or therapeutic potential. By analyzing disease cohorts against matched controls, researchers can isolate epigenomic features (such as differentially methylated regions (DMRs), accessible chromatin regions, or histone modification marks) that are strongly associated with disease phenotype, progression, or treatment response. This technical guide outlines the integrated computational and experimental pipeline for robust discovery and validation.

Core Analytical Pipeline in EpiExplorer

The live exploration within EpiExplorer facilitates a multi-step analytical journey. The workflow is designed for iterative hypothesis generation and testing.

Cohort Data Integration & Quality Control

- Data Harmonization: Raw sequencing reads (e.g., from WGBS, ATAC-seq, ChIP-seq) from public repositories (GEO, ENCODE, IHEC) and proprietary cohorts are processed through a uniform pipeline (e.g., nf-core/methylseq, nf-core/atacseq) for consistency.

- QC Metrics: Key metrics are summarized in Table 1.

Table 1: Essential QC Metrics for Epigenomic Datasets

| Assay | Key Metric | Target Value | Purpose |

|---|---|---|---|

| WGBS/EWAS | Bisulfite Conversion Rate | >99% | Ensures accurate methylation calling |

| ATAC-seq | Fraction of Reads in Peaks (FRiP) | >20% | Indicates signal-to-noise ratio |

| ChIP-seq | Cross-Correlation (NSC / RSC) | NSC>1.05, RSC>0.8 | Assesses enrichment and library quality |

| All | PCR Duplication Rate | <50% | Identifies over-amplification artifacts |

| ATAC-seq | Mitochondrial Read Fraction | <20% | Indicates cell integrity during assay |
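The thresholds in the QC table lend themselves to an automated gate. The sketch below encodes them as simple rules; the metric key names and the choice to express percentages as fractions are assumptions made for this example.

```python
# Thresholds copied from the QC table; key names are illustrative.
QC_RULES = {
    "bisulfite_conversion_rate": (">", 0.99),
    "frip": (">", 0.20),
    "nsc": (">", 1.05),
    "rsc": (">", 0.8),
    "pcr_duplication_rate": ("<", 0.50),
    "mito_read_fraction": ("<", 0.20),
}


def failed_metrics(sample_metrics):
    """Return the sorted names of metrics that miss their target value."""
    failures = []
    for name, value in sample_metrics.items():
        if name not in QC_RULES:
            continue  # Metrics without a rule are ignored, not failed.
        direction, threshold = QC_RULES[name]
        passed = value > threshold if direction == ">" else value < threshold
        if not passed:
            failures.append(name)
    return sorted(failures)


atac_sample = {"frip": 0.35, "pcr_duplication_rate": 0.6, "mito_read_fraction": 0.05}
```

Samples with a non-empty failure list can be flagged for review before they enter differential analysis.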

Differential Analysis & Candidate Identification

- Statistical Frameworks: Use tools like DSS (for methylation), DESeq2/limma (for count data from ATAC/ChIP), or DiffBind for peak-based analyses.

- Candidate Thresholding: Combine statistical significance (FDR < 0.05) with effect size (e.g., |Δβ| > 0.1 for methylation, |log2FC| > 1 for accessibility).

Functional Annotation & Prioritization

- Genomic Context: Annotate candidates to gene promoters, enhancers (using chromatin state maps), or CTCF sites.

- Integration with GWAS: Overlap with disease-associated SNPs from GWAS catalog to identify potential regulatory quantitative trait loci (QTLs).

- Pathway Enrichment: Use clusterProfiler or GREAT to link candidate regions to biological pathways.
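The GWAS overlap in the annotation steps above is essentially a point-in-interval query. The sketch below uses binary search over sorted intervals and assumes the candidate regions are non-overlapping (for example, already merged) and use 0-based half-open coordinates; the SNP identifiers are made up for the example.

```python
from bisect import bisect_right


def snps_in_regions(regions, snps):
    """Return (snp_id, chrom, region_start, region_end) for SNPs inside a region."""
    by_chrom = {}
    for chrom, start, end in regions:
        by_chrom.setdefault(chrom, []).append((start, end))
    for intervals in by_chrom.values():
        intervals.sort()
    hits = []
    for snp_id, chrom, pos in snps:
        intervals = by_chrom.get(chrom, [])
        # Index of the last interval whose start is <= pos.
        i = bisect_right(intervals, (pos, float("inf"))) - 1
        if i >= 0 and intervals[i][0] <= pos < intervals[i][1]:
            hits.append((snp_id, chrom, intervals[i][0], intervals[i][1]))
    return hits


candidate_regions = [("chr1", 100, 200), ("chr1", 300, 400), ("chr2", 50, 60)]
gwas_snps = [("snp_a", "chr1", 150), ("snp_b", "chr1", 250), ("snp_c", "chr2", 59),
             ("snp_d", "chr3", 10), ("snp_e", "chr1", 200)]
overlaps = snps_in_regions(candidate_regions, gwas_snps)
```

For genome-wide catalogs the same query is usually delegated to bedtools intersect; the sketch only shows what that overlap computes.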

Diagram Title: EpiExplorer Candidate Identification Workflow

Experimental Validation Protocols

Candidate loci from computational analysis require orthogonal validation.

Protocol: Targeted Bisulfite Sequencing (for DMRs)

- Objective: Validate methylation status of candidate CpGs in an extended cohort.

- Method: Design PCR primers (using MethPrimer) flanking the DMR. Treat genomic DNA (500 ng) with sodium bisulfite (EZ DNA Methylation-Lightning Kit). Amplify target region with bisulfite-converted DNA as template. Purify PCR product and submit for Sanger or next-generation sequencing.

- Analysis: Use quantitative tools like QUMA or BiQ Analyzer to calculate methylation percentages per CpG and compare between cohorts via t-test.

Protocol: Chromatin Accessibility by qPCR (ATAC-qPCR)

- Objective: Validate differential chromatin accessibility of candidate regions.

- Method: Perform standard ATAC-seq library prep (Omni-ATAC protocol) but stop prior to library amplification. Use the transposed DNA as template for quantitative PCR with SYBR Green. Design primers within the candidate accessible region and a control region of stable accessibility.

- Analysis: Calculate ΔΔCq values. The fold-change in accessibility is given by 2^(-ΔΔCq).
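As a worked example of the ΔΔCq calculation, the sketch below normalizes the candidate region to the control region within each sample and then compares the test condition with the reference condition. The Cq values are hypothetical, and the formula assumes roughly 100% primer efficiency (a doubling per cycle) for both primer pairs.

```python
def delta_delta_cq(target_test, control_test, target_ref, control_ref):
    """ddCq = (Cq_target - Cq_control) in test minus the same difference in reference."""
    return (target_test - control_test) - (target_ref - control_ref)


def fold_change(ddcq):
    """Fold-change in accessibility, assuming a perfect doubling per PCR cycle."""
    return 2.0 ** (-ddcq)


# Hypothetical mean Cq values from technical replicates.
ddcq = delta_delta_cq(
    target_test=24.0, control_test=26.0,   # treated sample
    target_ref=26.0, control_ref=26.0,     # untreated sample
)
print(ddcq, fold_change(ddcq))
```

Here the candidate region amplifies two cycles earlier in the treated sample relative to the control region, giving ΔΔCq = -2 and a four-fold increase in accessibility.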

Protocol: Functional Validation via CRISPR Inhibition (CRISPRi)

- Objective: Assess the regulatory function of a candidate enhancer on its putative target gene.

- Method: Design sgRNAs targeting the candidate region. Lentivirally transduce a dCas9-KRAB repressor construct and sgRNAs into a relevant cell line. Include a non-targeting sgRNA control.

- Readout: Measure expression of the putative target gene via RT-qPCR (72 hrs post-transduction) and assess phenotypic consequences (e.g., proliferation, differentiation).

Diagram Title: CRISPRi Functional Validation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for Biomarker Discovery & Validation

| Item | Function & Application | Example Product/Kit |

|---|---|---|

| Bisulfite Conversion Kit | Converts unmethylated cytosines to uracil, enabling methylation detection at single-base resolution. Essential for validating DMRs. | EZ DNA Methylation-Lightning Kit (Zymo Research) |

| ATAC-seq Kit | Provides all reagents for tagmentation and library preparation to assay chromatin accessibility from nuclei. | Illumina Tagment DNA TDE1 Kit or Omni-ATAC reagents |

| CRISPR/dCas9 System | Enables targeted epigenetic perturbation (activation/interference) for functional validation of regulatory elements. | dCas9-KRAB Lentiviral Particle (e.g., Sigma) & sgRNA vectors |

| Nucleic Acid Stabilizer | Preserves RNA/DNA and epigenetic marks in clinical samples immediately upon collection, critical for cohort integrity. | PAXgene Blood DNA/RNA Tubes (Qiagen) |

| Methylation-Specific qPCR Assay | Allows rapid, quantitative validation of methylation status at specific loci in large sample cohorts. | MethylLight (TaqMan-based) or SYBR Green-based assays |

| Chromatin Immunoprecipitation (ChIP) Kit | Validates specific histone modifications or transcription factor binding at candidate regions. | Magna ChIP A/G Chromatin IP Kit (MilliporeSigma) |

| High-Sensitivity DNA/RNA Kits | Quantifies and assesses quality of input material from limited clinical samples (e.g., biopsies). | Qubit dsDNA HS / RNA HS Assay Kits (Thermo Fisher) |

Integration with Multi-Omics for Biomarker Qualification

True biomarker qualification requires cross-omics concordance. EpiExplorer facilitates this by enabling overlay of epigenomic candidates with transcriptomic (RNA-seq) and proteomic (e.g., Olink, mass spectrometry) data from the same cohorts.

Table 3: Multi-Omics Correlation Strengthens Biomarker Candidates

| Epigenomic Finding | Correlative Transcriptomic Signal | Supporting Proteomic/Serum Signal | Strength as Biomarker |

|---|---|---|---|

| Hypomethylation at Gene Promoter | Increased expression of the same gene | Elevated protein product in tissue lysate | High (mechanistically linked) |

| Gain of H3K27ac at Enhancer | Increased expression of linked target gene(s) | N/A (may be indirect) | Medium |

| Hypermethylation at miRNA Promoter | Decreased expression of that miRNA | Altered levels of known protein targets of the miRNA | Very High (multi-layer regulation) |
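One simple way to quantify the concordance summarized in Table 3 is a rank correlation between a candidate's methylation level and the expression of its linked gene across matched samples. The sketch below uses Spearman's coefficient with the textbook no-ties formula; the sample values are hypothetical, and a statistics library should be preferred when ties are present.

```python
def ranks(values):
    """Rank values from 1 to n (assumes no tied values, for brevity)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result


def spearman(x, y):
    """Spearman's rho via 1 - 6*sum(d^2) / (n*(n^2 - 1)); valid without ties."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n * n - 1))


# Hypothetical matched samples: promoter beta values and log2 expression.
methylation = [0.82, 0.75, 0.40, 0.35, 0.30]
expression = [1.6, 1.1, 4.2, 4.8, 5.0]

rho = spearman(methylation, expression)
print(f"Spearman rho = {rho:.2f}")
```

A strongly negative coefficient, as in this toy cohort, is the pattern expected when promoter methylation represses the linked gene.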

The live exploration capabilities of platforms like EpiExplorer transform static epigenomic cohort data into a dynamic resource for biomarker and regulatory element discovery. By integrating rigorous computational pipelines with structured experimental validation protocols, researchers can efficiently translate statistical associations into biologically and clinically meaningful insights, accelerating the path towards diagnostic and therapeutic applications.

Troubleshooting and Optimization: Resolving Common Issues and Maximizing Performance in EpiExplorer

This section details technical strategies for overcoming the performance limitations endemic to genomic data science when exploring large epigenomic datasets live with the EpiExplorer platform. Efficient data handling is not merely an IT concern but a critical enabler of hypothesis generation and validation in epigenomics research and drug development.

Quantitative Analysis of Current Challenges

Recent surveys and benchmarks highlight the scale of the data challenge in modern epigenomics.

Table 1: Scale of Contemporary Epigenomic Datasets (2024)

| Data Type | Typical Size per Sample | Common Cohort Size | Aggregate Dataset Size |

|---|---|---|---|

| Whole-Genome Bisulfite Sequencing (WGBS) | 80-100 GB | 100-1000 samples | 8 TB - 100 TB |

| ATAC-seq (paired-end) | 15-25 GB | 500-10,000 samples | 7.5 TB - 250 TB |

| ChIP-seq (Histone Marks) | 10-20 GB | 500-5,000 samples | 5 TB - 100 TB |

| Hi-C (High-Resolution) | 200-300 GB | 50-200 samples | 10 TB - 60 TB |

Table 2: Performance Bottlenecks in Interactive Exploration

| Bottleneck Type | Typical Latency (Unoptimized) | Target Latency (Optimized) | Primary Impact |

|---|---|---|---|

| Full Dataset I/O (Sequential Read) | 30-120 minutes | 2-5 minutes | Batch analysis |

| Range Query (e.g., 1Mb genomic region) | 10-45 seconds | < 500 ms | Interactive browsing |

| Multi-sample Aggregation | 20-90 seconds | < 1 second | Cohort comparison |

| Visualization Rendering (Complex tracks) | 5-15 seconds | < 200 ms | User experience |

Core Methodologies for Performance Optimization

Experimental Protocol: Benchmarking Data Storage Formats

Objective: To compare the query performance of different file formats for storing epigenomic feature data (e.g., peaks, methylation calls).

Protocol:

- Data Preparation: Select a representative WGBS dataset (e.g., 100 samples, ~10 TB raw data). Process into methylation calls (BED-like format).

- Format Conversion: Convert the aggregated calls into four test formats: Plain TSV, BGZF-compressed TSV, HDF5 with genomic coordinate indexing, and Zarr with chunked compression.

- Indexing: Apply appropriate indexing (e.g., Tabix for BGZF, hierarchical indices for HDF5/Zarr).

- Query Benchmark: Execute 1000 random range queries of varying sizes (1kb, 100kb, 1Mb) against each format.

- Metrics: Measure latency from query initiation to data retrieval completion. Record I/O throughput and CPU utilization.
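The query benchmark in this protocol can be prototyped with a small timing harness before any real files are involved. The sketch below is a stand-in under stated assumptions: it uses synthetic in-memory methylation calls instead of TSV, BGZF, HDF5, or Zarr files, and it compares an unindexed linear scan with a sorted-position binary search. To benchmark a real format, the `query` callable would be replaced by a function that reads from that format.

```python
import random
import statistics
import time
from bisect import bisect_left


def linear_query(records, start, end):
    """Baseline without an index: scan every (position, value) record."""
    return [r for r in records if start <= r[0] < end]


def indexed_query(records, positions, start, end):
    """Binary search on sorted positions, then slice the matching run."""
    lo = bisect_left(positions, start)
    hi = bisect_left(positions, end)
    return records[lo:hi]


def benchmark(query, n_queries, genome_len, sizes, seed=0):
    """Return per-query latencies in seconds for random ranges of mixed size."""
    rng = random.Random(seed)
    latencies = []
    for _ in range(n_queries):
        size = rng.choice(sizes)
        start = rng.randrange(0, genome_len - size)
        t0 = time.perf_counter()
        query(start, start + size)
        latencies.append(time.perf_counter() - t0)
    return latencies


# Synthetic stand-in for methylation calls: sorted (position, beta) pairs.
data_rng = random.Random(42)
GENOME_LEN = 5_000_000
positions = sorted(data_rng.sample(range(GENOME_LEN), 50_000))
records = [(p, data_rng.random()) for p in positions]

sizes = [1_000, 100_000, 1_000_000]   # 1 kb, 100 kb, 1 Mb, as in the protocol
lin = benchmark(lambda s, e: linear_query(records, s, e), 50, GENOME_LEN, sizes)
idx = benchmark(lambda s, e: indexed_query(records, positions, s, e), 50, GENOME_LEN, sizes)

print(f"linear median:  {statistics.median(lin) * 1e3:.3f} ms")
print(f"indexed median: {statistics.median(idx) * 1e3:.3f} ms")
```

Using the same seed for both runs means each method sees an identical query sequence, so the latency distributions are directly comparable. The protocol's 1000 queries are reduced to 50 here only to keep the example fast.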

Experimental Protocol: Evaluating In-Memory Data Architectures

Objective: To assess frameworks for holding aggregated data in RAM for interactive client-server applications like EpiExplorer.

Protocol:

- Framework Selection: Test Apache Arrow (PyArrow), Redis, and DuckDB.

- Workload Simulation: Load a ~500 GB dataset of chromatin accessibility scores (ATAC-seq signal) for 1,000 samples across the genome into each system.

- Operation Suite: Perform a standardized series of operations: a) Filtering by genomic region, b) Aggregating signal per sample group, c) Calculating correlation matrices between samples for a region.

- Measurement: Record query latency, memory footprint, and data serialization speed for real-time updates.
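When comparing frameworks, it helps to have a small reference implementation of the operation suite so that each system's results can be checked for correctness before latency is measured. The plain-Python sketch below covers operations a) to c) on a toy binned signal matrix. The sample names, group labels, bin size, and values are hypothetical, and this is not how Arrow, Redis, or DuckDB would execute the same operations.

```python
from math import sqrt

BIN_SIZE = 1_000  # assumed bin width in base pairs

# Hypothetical per-bin ATAC-seq signal for one chromosome.
signal = {
    "s1": [1.0, 2.0, 8.0, 9.0, 1.0],
    "s2": [1.5, 2.5, 7.0, 9.5, 0.5],
    "s3": [0.5, 0.5, 1.0, 1.5, 6.0],
}
groups = {"s1": "tumor", "s2": "tumor", "s3": "normal"}


def filter_region(signal, start, end):
    """a) Keep only the bins overlapping the half-open region [start, end)."""
    lo = start // BIN_SIZE
    hi = -(-end // BIN_SIZE)   # ceiling division
    return {sample: values[lo:hi] for sample, values in signal.items()}


def aggregate_by_group(signal, groups):
    """b) Mean signal per bin within each sample group."""
    members = {}
    for sample, values in signal.items():
        members.setdefault(groups[sample], []).append(values)
    return {
        group: [sum(col) / len(col) for col in zip(*rows)]
        for group, rows in members.items()
    }


def pearson(x, y):
    """Pearson correlation; assumes neither vector is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / sqrt(vx * vy)


def correlation_matrix(signal):
    """c) Pairwise sample correlations over the supplied bins."""
    names = sorted(signal)
    return {(a, b): pearson(signal[a], signal[b]) for a in names for b in names}


region = filter_region(signal, 1_000, 4_000)
group_means = aggregate_by_group(region, groups)
corr = correlation_matrix(region)
print(group_means)
```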

Strategic Architecture & Implementation

Diagram Title: EpiExplorer High-Performance Architecture

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for High-Performance Epigenomic Data Exploration

| Tool / Reagent | Category | Primary Function in Workflow |

|---|---|---|

| Zarr Format | Data Storage | Enables chunked, compressed, and parallel I/O for multi-dimensional genomic data, crucial for cloud-native access. |

| Apache Arrow | In-Memory Format | Provides a standardized, columnar memory layout for zero-copy data sharing between processes (e.g., server and viz engine). |

| Tabix | Indexing Utility | Creates positional indexes for BGZF-compressed files (like BED, GFF, VCF), enabling sub-second range queries. |

| TileDB | Database Engine | A purpose-built array storage manager for sparse and dense genomic data with built-in versioning and efficient updates. |

| Dask / Ray | Parallel Computing | Frameworks for parallelizing data analysis across clusters, allowing large dataset operations to be scaled out. |

| Gosling | Visualization Grammar | A declarative grammar for scalable, interactive genomic visualizations in the browser, reducing client-side rendering load. |

| Intel ISA-L | Optimization Library | Provides hardware-optimized implementations of DEFLATE compression (the codec underlying gzip and BGZF) to accelerate I/O performance on supported hardware. |

Optimized Data Flow for Live Query

Diagram Title: Live Query Data Flow

Implementation of the strategies and architectures outlined—leveraging columnar storage, intelligent caching, chunked data formats, and parallel computation—directly addresses the critical performance bottlenecks in epigenomic research. This enables platforms like EpiExplorer to facilitate true live exploration of ultra-large datasets, accelerating the pace of discovery in functional genomics and therapeutic development.

Robust data visualization is paramount for the live exploration of large epigenomic datasets with the EpiExplorer platform. This part of the guide addresses common track display errors and graphical artifacts that impede accurate interpretation of complex epigenomic data. We provide a systematic framework for diagnosing, troubleshooting, and resolving these issues to ensure the fidelity of the scientific visualizations that research and drug development depend on.

EpiExplorer facilitates the interactive interrogation of epigenomic datasets, including ChIP-seq, ATAC-seq, and DNA methylation data across multiple cell lines and conditions. The scale (often terabytes) and complexity of these datasets introduce unique visualization challenges. Artifacts such as track misalignment, incorrect scaling, color banding, and rendering glitches can lead to erroneous biological conclusions, directly impacting downstream analysis in biomarker discovery and therapeutic target identification.

Common Artifacts and Their Root Causes

A summary of frequent visualization errors, their potential impact, and primary causes is presented below.

Table 1: Common Graphical Artifacts in Epigenomic Data Visualization

| Artifact Type | Visual Manifestation | Primary Cause | Potential Impact on Research |

|---|---|---|---|

| Track Misalignment | Genomic feature tracks (e.g., peaks, genes) do not align with reference genome coordinates. | Mixed coordinate systems (0-based vs. 1-based), index file corruption, asynchronous data streaming. | False co-localization claims, incorrect annotation of regulatory elements. |

| Incorrect Data Scaling | Signal tracks appear flattened or disproportionately spiky. | Improper normalization (RPKM, CPM), integer overflow, incorrect Y-axis auto-scaling logic. | Misestimation of differential enrichment, poor replicate correlation. |

| Color Banding / Inaccuracy | Discontinuous color gradients in heatmaps or uniform regions of unexpected color. | Faulty color mapping of continuous values, limited color depth (8-bit), GPU shader errors. | Misinterpretation of chromatin state or methylation levels. |

| Render Clipping | Top of peak signals appear truncated. | Fixed y-axis maximum, data values exceeding predefined clamp. | Underestimation of peak height and significance. |

| Tile Fetching Artifacts | "Checkerboard" pattern or blank sections in genomic browser view at certain zoom levels. | Network latency in fetching data tiles, server-side rendering errors, corrupted cache. | Incomplete view of genomic region, missing critical features. |

Experimental Protocols for Diagnosis and Validation

Protocol: Validating Track Coordinate Integrity

Objective: To confirm that visualized data aligns with the correct genomic positions.

Materials: EpiExplorer instance, source BED/BigWig files, independent genome browser (e.g., IGV).

Method:

- Select a genomic locus with a known, unambiguous feature (e.g., a highly conserved peak).

- Note the chromosome and base-pair coordinates in EpiExplorer.

- Load the same source data file into IGV and navigate to the identical coordinates.

- Quantitatively compare the visualized features' start/end positions and summit.

- Repeat across 3 distinct genomic loci (e.g., promoter, intergenic, enhancer region).

Validation: Positions should match within the tools' resolution limits. A systematic offset indicates a coordinate system error.
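The comparison in this protocol can be made explicit with a small helper that classifies the offsets between matched feature positions recorded in the two tools. The sketch assumes the positions were noted by hand or exported as integers; the coordinates below are hypothetical. The conversion helper reflects the standard convention that BED intervals are 0-based and half-open, while many genome browsers display 1-based, closed coordinates.

```python
def bed_to_one_based(start, end):
    """Convert a 0-based, half-open BED interval to 1-based, closed coordinates."""
    return start + 1, end


def diagnose_offsets(observed, expected):
    """Classify offsets between matched positions from two tools.

    observed and expected hold integer positions (e.g., peak starts) for the
    same features, listed in the same order.
    """
    if not observed or len(observed) != len(expected):
        raise ValueError("need two non-empty position lists of equal length")
    offsets = {o - e for o, e in zip(observed, expected)}
    if offsets == {0}:
        return "aligned"
    if len(offsets) == 1:
        (offset,) = offsets
        return f"systematic offset of {offset:+d} bp"
    return "inconsistent offsets"


# Hypothetical peak start positions at three loci.
epiexplorer_starts = [10_000, 250_500, 1_203_344]
igv_starts = [10_001, 250_501, 1_203_345]

print(diagnose_offsets(epiexplorer_starts, igv_starts))
```

A constant offset of one base pair at every locus points to a 0-based versus 1-based mismatch, whereas offsets that differ between loci suggest index corruption or a data streaming problem instead.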

Protocol: Quantifying Rendering Fidelity for Quantitative Tracks

Objective: To ensure the visualized signal height accurately represents underlying quantitative values.

Materials: BigWig signal file, bigWigToWig utility, statistical software (R/Python).

Method:

- Export raw values for a specific genomic region (e.g., chr1:10,000-15,000) using