Decoding Complexity: A Practical Guide to AI-Driven Epigenetic and Non-Coding RNA Analysis

Abstract

This article provides a comprehensive guide for biomedical researchers on leveraging artificial intelligence (AI) to analyze epigenetic modifications (e.g., DNA methylation, histone marks) and non-coding RNA (ncRNA) data. It explores foundational concepts, detailing how AI models like deep learning uncover regulatory patterns in these complex datasets. The guide covers practical methodologies, from data preprocessing to model application for biomarker discovery and therapeutic target identification. It addresses common analytical challenges, offering troubleshooting and optimization strategies for robust results. Finally, it examines validation frameworks and compares leading AI tools and pipelines, equipping scientists with the knowledge to integrate AI effectively into their epigenomics and transcriptomics research for advancing drug development and precision medicine.

The AI-Epigenetics Nexus: Understanding the Basics and Core Opportunities

Application Notes

DNA Methylation Arrays

Purpose: Genome-wide profiling of DNA methylation at single-nucleotide resolution, primarily focused on CpG islands. Used to identify epigenetic changes in development, disease (e.g., cancer), and in response to environmental factors.

Key Platforms: Illumina Infinium MethylationEPIC v2.0 BeadChip (~935,000 CpG sites), covering >90% of CpG islands.

AI Integration: Machine learning models (e.g., convolutional neural networks) are used to predict methylation states from sequence data, correct for batch effects, and identify epialleles associated with clinical phenotypes for biomarker discovery.

ChIP-seq (Chromatin Immunoprecipitation Sequencing)

Purpose: Maps protein-DNA interactions genome-wide, primarily for transcription factors (TFs) and histone modifications. Essential for understanding gene regulatory networks and chromatin states.

Key Metrics: Sequencing depth of 20-50 million reads for histone marks, 50-100 million for TFs. Peak calling algorithms (e.g., MACS2) identify enriched regions.

AI Integration: Deep learning tools (e.g., DeepBind, BPNet) predict TF binding specificity from sequence and learn de novo motifs. AI assists in integrating multi-omics ChIP-seq data to construct regulatory networks.

ATAC-seq (Assay for Transposase-Accessible Chromatin Sequencing)

Purpose: Identifies regions of open chromatin, inferring regulatory element activity (promoters, enhancers). Rapid, sensitive, and requires low cell input (500-50,000 cells).

Key Metrics: Typical sequencing: 50-100 million paired-end reads. Peaks represent transposase-accessible regions.

AI Integration: AI models (e.g., based on autoencoders) denoise ATAC-seq data, predict chromatin accessibility from sequence, and integrate with TF motifs to infer activity states. Used in single-cell ATAC-seq analysis for cell type identification.

ncRNA Sequencing (Non-coding RNA Sequencing)

Purpose: Discovery and quantification of non-coding RNAs (miRNAs, lncRNAs, piRNAs, etc.). Used to profile expression and investigate roles in gene silencing, imprinting, and development.

Workflow: Includes size selection for small RNAs (<200 nt) or ribosomal RNA depletion for long ncRNAs. Requires specialized libraries (e.g., adapters for 3’/5’ ligation for miRNAs).

AI Integration: AI pipelines classify ncRNA types, predict novel ncRNAs from sequencing data, and construct competing endogenous RNA (ceRNA) networks by integrating mRNA and miRNA expression data.

Table 1: Key Characteristics of Epigenomic and ncRNA Profiling Technologies

| Data Type | Primary Application | Typical Resolution | Key Output | Common Sequencing Depth | Sample Input | Key AI Analysis Tasks |

|---|---|---|---|---|---|---|

| DNA Methylation Array | CpG methylation profiling | Single CpG site | Beta-values (0-1, % methylation) | N/A (Array-based) | 50-500 ng DNA | Batch correction, differential methylation calling, epigenetic clock prediction |

| ChIP-seq | Protein-DNA interaction mapping | 50-300 bp (peak regions) | Peak files (BED), signal tracks | 20-100M reads | 1-10 µg chromatin (histones); 5-50 µg (TFs) | De novo motif discovery, peak calling, multi-omics integration |

| ATAC-seq | Open chromatin profiling | ~100 bp (nucleosome-free) | Peak files (BED), insert size plot | 50-100M paired-end reads | 500-50,000 nuclei | Chromatin state prediction, footprinting, integration with gene expression |

| ncRNA-seq | Non-coding RNA expression | Single nucleotide | Count matrix, novel transcripts | 20-50M reads (small RNA); 50-100M (lncRNA) | 100 ng - 1 µg total RNA | Novel ncRNA prediction, miRNA target prediction, ceRNA network modeling |

Experimental Protocols

Protocol: Infinium MethylationEPIC BeadChip Array

Materials: Sodium bisulfite conversion kit (e.g., EZ DNA Methylation Kit), Infinium MethylationEPIC v2.0 Kit, iScan System. Procedure:

- Bisulfite Conversion: Treat 500 ng genomic DNA with sodium bisulfite, converting unmethylated cytosines to uracil.

- Whole-Genome Amplification: Amplify converted DNA.

- Fragmentation & Precipitation: Fragment amplified product, isopropanol precipitate, and resuspend.

- Hybridization: Apply resuspended DNA to BeadChip, incubate at 48°C for 16-24 hours.

- Single-Base Extension & Staining: Fluorescently label nucleotides incorporated by extension.

- Scanning: Image BeadChip on iScan scanner. Data analyzed with Illumina GenomeStudio or R/Bioconductor (minfi package).

Protocol: Standard ChIP-seq for Histone Modifications

Materials: Formaldehyde, glycine, sonicator, specific antibody for target histone mark (e.g., H3K27ac), Protein A/G beads, library prep kit. Procedure:

- Crosslinking: Treat cells with 1% formaldehyde for 10 min at RT. Quench with 125 mM glycine.

- Chromatin Preparation: Lyse cells, isolate nuclei. Sonicate chromatin to 200-500 bp fragments (validated by gel).

- Immunoprecipitation: Incubate chromatin with antibody overnight at 4°C. Add beads, incubate, wash.

- Elution & Reverse Crosslinking: Elute complexes, add RNase A and Proteinase K, incubate at 65°C overnight.

- DNA Purification: Purify DNA with spin columns.

- Library Prep & Sequencing: Prepare sequencing library (end repair, A-tailing, adapter ligation, PCR). Sequence on Illumina platform (50-75 bp single-end).

Protocol: Standard ATAC-seq

Materials: Transposase (Tn5), Digitonin, Nuclei buffer, NEBNext High-Fidelity PCR Master Mix, AMPure XP beads. Procedure:

- Cell Lysis & Nuclei Preparation: Lyse cells in cold lysis buffer (10 mM Tris-HCl, pH 7.4, 10 mM NaCl, 3 mM MgCl2, 0.1% IGEPAL, 0.1% Tween-20, 0.01% Digitonin). Pellet nuclei.

- Tagmentation: Resuspend nuclei in transposase reaction mix (Illumina Nextera or homebrew Tn5). Incubate at 37°C for 30 min. Immediately purify with MinElute column.

- PCR Amplification: Amplify tagmented DNA with barcoded primers for 10-12 cycles.

- Library Purification & QC: Purify with AMPure XP beads. Check fragment distribution (1 nucleosome ~200 bp, 2 nucleosomes ~400 bp) on Bioanalyzer.

- Sequencing: Sequence paired-end (2x50 bp) on Illumina system.

Protocol: Small RNA Sequencing (for miRNA)

Materials: TRIzol, Small RNA isolation kit, T4 RNA Ligase, RT-PCR kit, High Sensitivity DNA Assay kit. Procedure:

- RNA Isolation: Extract total RNA with TRIzol. Enrich small RNAs (<200 nt) using size-selection columns or gels.

- 3’ Adapter Ligation: Ligate pre-adenylated 3’ adapter using T4 RNA Ligase 2, truncated.

- 5’ Adapter Ligation: Ligate 5’ RNA adapter using T4 RNA Ligase 1.

- Reverse Transcription & PCR Amplification: Reverse transcribe with RT primer containing index sequences. Amplify cDNA for 12-15 cycles.

- Size Selection & Purification: Run gel to excise the library band (140-160 bp for adapter-ligated miRNA inserts). Purify.

- QC & Sequencing: Validate library on Bioanalyzer. Sequence single-end 50 bp on Illumina.

Visualization: Pathways and Workflows

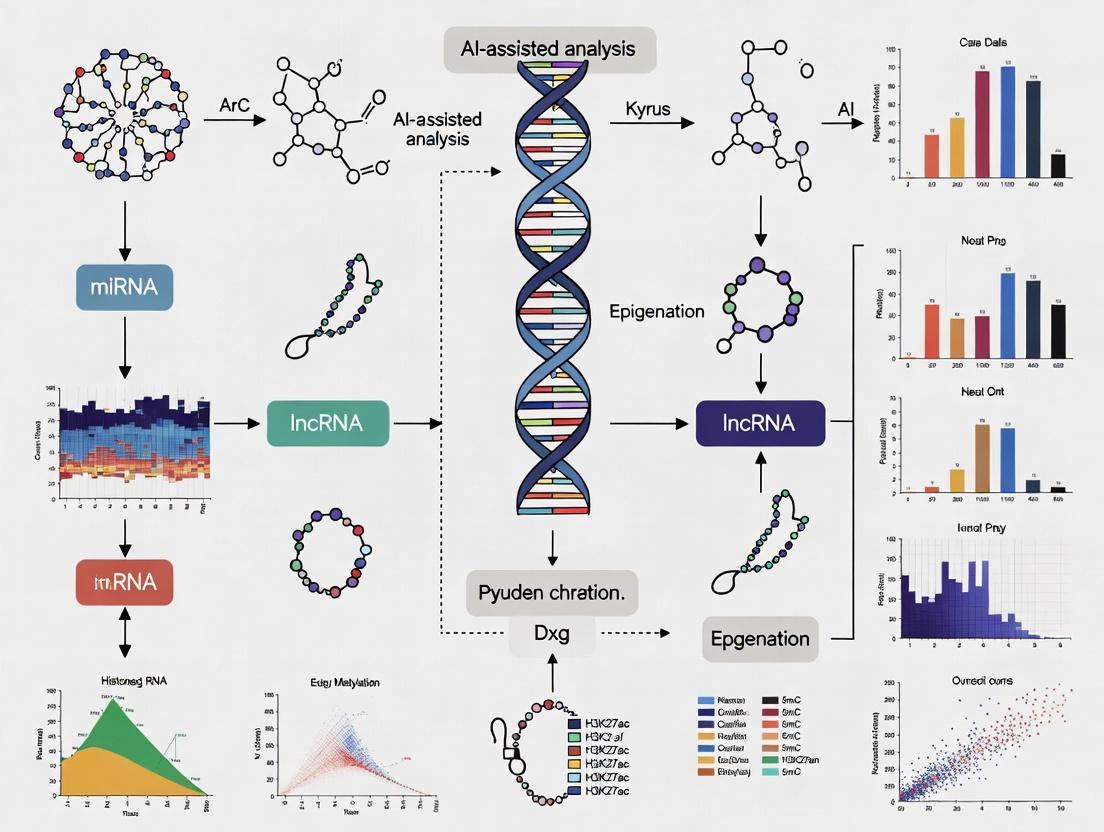

Title: AI-Assisted Multi-Omics Analysis Workflow

Title: ATAC-seq Experimental Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Featured Experiments

| Technology | Essential Material/Reagent | Function & Brief Explanation |

|---|---|---|

| DNA Methylation Array | Sodium Bisulfite | Converts unmethylated cytosine to uracil, enabling differentiation of methylated/unmethylated bases during array probing. |

| DNA Methylation Array | Infinium BeadChip | Microarray containing millions of probes for CpG sites. Hybridization target for bisulfite-converted DNA. |

| ChIP-seq | Crosslinking Agent (Formaldehyde) | Crosslinks proteins to DNA in living cells, preserving in vivo interactions for immunoprecipitation. |

| ChIP-seq | Validated ChIP-grade Antibody | High-specificity antibody against target protein (TF or histone mark) to immunoprecipitate DNA fragments. |

| ChIP-seq | Magnetic Protein A/G Beads | Bind antibody-protein-DNA complexes for isolation and washing. |

| ATAC-seq | Hyperactive Tn5 Transposase | Enzyme that simultaneously fragments ("tagments") DNA and adds sequencing adapters in open chromatin regions. |

| ATAC-seq | Cell Permeabilizer (Digitonin) | Gently lyses plasma membrane while leaving nuclear membrane intact for clean nuclei preparation. |

| ncRNA-seq (small RNA) | 3' & 5' RNA Adapters | Modified oligonucleotides ligated to RNA ends for cDNA synthesis and sequencing; designed for small RNA substrates. |

| ncRNA-seq (small RNA) | Size Selection Beads (e.g., AMPure XP) | Magnetic beads used to select specific RNA or library fragment sizes (e.g., ~18-30 nt RNAs). |

Application Notes: AI Suitability of Epigenetic and ncRNA Data

Epigenetic modifications (DNA methylation, histone modifications, chromatin accessibility) and non-coding RNA (ncRNA) expression profiles generate complex, high-dimensional datasets. Their intrinsic characteristics align well with the strengths of modern Artificial Intelligence (AI) and Machine Learning (ML) models.

Key Data Characteristics:

- High-Dimensionality: A single assay (e.g., ChIP-seq, ATAC-seq, small RNA-seq) can yield millions of data points per sample, with features vastly outnumbering samples.

- Non-Linearity: Interactions between epigenetic marks, ncRNAs, and genes are rarely linear or additive; they form complex regulatory networks.

- Hidden Patterns: Causal relationships and predictive signatures are often embedded within high-order interactions not discernible by traditional statistics.

AI/ML Advantages:

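- Dimensionality Reduction: Autoencoders and t-SNE can project data into lower-dimensional spaces. Autoencoders learn compact representations that retain much of the biological variance, while t-SNE primarily preserves local neighborhood structure and is best suited to visualization.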

- Pattern Recognition: Deep learning (CNNs, RNNs) identifies spatially distributed chromatin states or temporal ncRNA expression patterns.

- Predictive Modeling: Ensemble models (Random Forests, XGBoost) can predict disease outcomes or drug response from integrated omics layers.

Table 1: Quantitative Comparison of Common Epigenetic & ncRNA Assays

| Assay Type | Typical Features per Sample | Data Format | Primary AI Model Applications |

|---|---|---|---|

| Whole-Genome Bisulfite Seq | ~28 Million CpG sites | Methylation ratio (0-1) | CNN for region classification, DNN for phenotype prediction |

| ChIP-seq (Histone Marks) | 50-100 Million reads | Read density peaks | CNN for motif discovery, RNN for sequential pattern learning |

| ATAC-seq | 50-100 Million reads | Accessibility peaks | Unsupervised clustering (autoencoders), feature selection |

| Small RNA-seq (miRNA) | 2000-3000 miRNAs | Counts per million | ML classifiers (SVM, RF) for diagnostic signatures |

| Single-Cell ATAC-seq | 50K-100K peaks per cell | Sparse binary matrix | Graph Neural Networks for cell state transitions |

Protocols for AI-Ready Data Generation

Protocol 2.1: Generating High-Dimensional DNA Methylation Data for Deep Learning

Objective: Prepare whole-genome bisulfite sequencing (WGBS) data suitable for training convolutional neural networks (CNNs) to classify cancer subtypes.

Materials & Reagents:

- Input: High-quality genomic DNA (≥1μg, 260/280 ≈ 1.8).

- Bisulfite Conversion: EZ DNA Methylation-Lightning Kit (Zymo Research).

- Library Prep: Accel-NGS Methyl-Seq DNA Library Kit (Swift Biosciences).

- Sequencing: Illumina NovaSeq 6000, 150bp paired-end, ≥30X coverage.
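The sequencing target above (150 bp paired-end, ≥30X) can be turned into a read budget with simple arithmetic. The sketch below assumes a human genome size of about 3.1 Gb and ignores losses from duplicates, trimming, and unmapped reads; the function names are illustrative only.

```python
# Assumed approximate size of the human genome in base pairs.
GENOME_SIZE_BP = 3.1e9


def read_pairs_for_coverage(coverage, read_length_bp, genome_size_bp=GENOME_SIZE_BP):
    """Read pairs needed so that sequenced bases = coverage x genome size."""
    bases_needed = coverage * genome_size_bp
    # Each read pair contributes two reads of read_length_bp bases.
    return bases_needed / (2 * read_length_bp)


def coverage_from_read_pairs(read_pairs, read_length_bp, genome_size_bp=GENOME_SIZE_BP):
    """Raw (pre-filtering) coverage obtained from a number of read pairs."""
    return read_pairs * 2 * read_length_bp / genome_size_bp


pairs_30x = read_pairs_for_coverage(30, 150)
print(f"{pairs_30x / 1e6:.0f} million read pairs for 30X")  # 310 million
```

If the 800 million reads per lane quoted in the procedure below are taken as read pairs shared evenly by 12-16 libraries, the same arithmetic gives roughly 5-6X per library per lane, so several lanes per pool would likely be needed to reach 30X.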

Procedure:

- Bisulfite Conversion: Treat 500ng DNA per manufacturer's protocol. Efficiency check (>99%) is mandatory via control oligonucleotides.

- Library Preparation: Construct libraries from converted DNA using unique dual-index adapters to enable multiplexing.

- Sequencing: Pool 12-16 libraries per lane. Target minimum 800 million paired-end reads per lane.

- Bioinformatic Preprocessing:

a. Alignment: Use bismark (v0.24.0) with bowtie2 against the bisulfite-converted reference genome (hg38).

b. Deduplication: Remove PCR duplicates using deduplicate_bismark.

c. Extraction: Generate per-cytosine methylation reports using bismark_methylation_extractor.

d. Binning: Aggregate CpG methylation ratios in non-overlapping 100bp windows across the genome using methylKit (R).

- AI-Ready Formatting: Export binned data as a 2D matrix (samples x genomic bins). Normalize values using quantile normalization. Split data into training (70%), validation (15%), and test (15%) sets.
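The binning and splitting steps can be sketched in NumPy as follows. This is a simplified illustration, not the methylKit implementation: it takes an unweighted mean of per-CpG ratios within each 100 bp window (a coverage-weighted mean is a common alternative), assumes 0-based positions on a single chromosome, and uses hypothetical function names.

```python
import numpy as np


def bin_methylation(positions, ratios, window=100):
    """Mean methylation ratio per non-overlapping window on one chromosome.

    Returns a dict mapping window start coordinate -> mean ratio.
    """
    positions = np.asarray(positions)
    ratios = np.asarray(ratios, dtype=float)
    window_starts = (positions // window) * window
    starts, inverse = np.unique(window_starts, return_inverse=True)
    sums = np.bincount(inverse, weights=ratios)
    counts = np.bincount(inverse)
    return dict(zip(starts.tolist(), (sums / counts).tolist()))


def split_indices(n_samples, seed=0, frac_train=0.70, frac_val=0.15):
    """Shuffle sample indices and split them into train/validation/test."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(n_samples)
    n_train = int(round(frac_train * n_samples))
    n_val = int(round(frac_val * n_samples))
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]


binned = bin_methylation([10, 50, 120, 199, 305], [0.0, 1.0, 0.5, 0.7, 0.2])
train_idx, val_idx, test_idx = split_indices(100)
print(binned)
print(len(train_idx), len(val_idx), len(test_idx))
```

Splitting at the sample level before fitting any normalization or feature selection keeps the validation and test sets from influencing preprocessing.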

Protocol 2.2: Profiling ncRNA Expression for Machine Learning Classifiers

Objective: Generate robust miRNA expression profiles from serum for training a random forest classifier to detect early-stage pancreatic ductal adenocarcinoma (PDAC).

Materials & Reagents:

- Sample: Human serum/plasma (200μL per patient).

- RNA Isolation: miRNeasy Serum/Plasma Advanced Kit (Qiagen).

- Library Prep: QIAseq miRNA Library Kit (Qiagen) with Unique Molecular Indexes (UMIs).

- Sequencing: Illumina NextSeq 550, 75bp single-end.

Procedure:

- RNA Isolation: Spike in 3.5μL of miRNA Spike-In Kit (Qiagen) before extraction. Isolate total RNA per kit protocol. Elute in 20μL nuclease-free water.

- Library Preparation: Use 5μL RNA per reaction. Follow QIAseq protocol for cDNA synthesis, adapter ligation, and PCR amplification (22 cycles). Include a no-template control.

- Sequencing: Pool libraries equimolarly. Sequence to a depth of 5-10 million reads per sample.

- Bioinformatic Preprocessing:

a. Demultiplexing & UMI Processing: Use QIAseq miRNA Primary Pipeline (v1.0) for trimming, UMI deduplication, and alignment to miRBase.

b. Quantification: Obtain raw UMI-collapsed counts per miRNA.

c. Normalization: Apply DESeq2's median-of-ratios method to correct for library size.

- Feature Engineering for ML: Filter miRNAs with less than 10 total counts. Perform variance-stabilizing transformation. Use the Boruta package (R) for wrapper-based feature selection to identify the top 50 predictive miRNAs for classifier training.
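To make the low-count filter and the median-of-ratios normalization concrete, here is a small NumPy sketch. It re-implements the idea behind the size-factor calculation for illustration and is not DESeq2 itself; the variance-stabilizing transformation and Boruta selection are not covered, and the function names and toy counts are assumptions.

```python
import numpy as np


def filter_low_counts(counts, min_total=10):
    """Keep miRNAs (rows) whose summed count across samples is >= min_total."""
    counts = np.asarray(counts)
    keep = counts.sum(axis=1) >= min_total
    return counts[keep], keep


def median_of_ratios_size_factors(counts):
    """Per-sample size factors from a miRNA x sample count matrix.

    For each miRNA with no zero counts, compute its geometric mean across
    samples; each sample's size factor is the median ratio of its counts
    to those geometric means.
    """
    counts = np.asarray(counts, dtype=float)
    usable = (counts > 0).all(axis=1)
    if not usable.any():
        raise ValueError("no miRNA has non-zero counts in every sample")
    log_counts = np.log(counts[usable])
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))


# Toy matrix: sample 2 was sequenced twice as deeply as sample 1.
raw = np.array([[10, 20], [100, 200], [5, 10], [0, 3]])
filtered, kept = filter_low_counts(raw, min_total=10)
size_factors = median_of_ratios_size_factors(filtered)
normalized = filtered / size_factors
print(size_factors)
```

After dividing by the size factors, the two toy samples have identical normalized counts, which is the behavior expected when samples differ only in sequencing depth.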

Visualizations

Diagram 1: AI Analysis Workflow for Multi-Omics Data

Diagram 2: miRNA-Gene Regulatory Network with AI Inference

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for AI-Driven Epigenetics/ncRNA Research

| Item | Supplier (Example) | Function in AI-Oriented Protocol |

|---|---|---|

| EZ DNA Methylation-Lightning Kit | Zymo Research | Rapid, high-efficiency bisulfite conversion for WGBS, ensuring high-quality input for methylation CNNs. |

| QIAseq miRNA Library Kit | Qiagen | Incorporates UMIs to eliminate PCR duplicate bias, critical for accurate quantitative input to ML classifiers. |

| NEBNext Ultra II FS DNA Library Prep Kit | NEB | Fast, robust library prep for ChIP-seq/ATAC-seq, producing consistent read depth for cross-sample analysis. |

| 10x Genomics Chromium Single Cell ATAC Kit | 10x Genomics | Enables generation of single-cell chromatin accessibility data for graph-based neural network training. |

| TruSeq Small RNA Library Prep Kit | Illumina | Standardized, high-throughput library construction for ncRNA sequencing pipelines. |

| Cell-Free DNA Collection Tubes | Streck | Stabilizes blood samples for liquid biopsy epigenetics, ensuring reproducible input for diagnostic AI models. |

| SPRIselect Beads | Beckman Coulter | Size selection and cleanup for all NGS libraries, essential for uniform fragment distribution. |

| ERCC RNA Spike-In Mix | Thermo Fisher | External controls for RNA-seq normalization, improving technical variance correction prior to ML. |

Within a broader thesis on AI-assisted analysis of epigenetic and ncRNA data, the selection of a machine learning paradigm is foundational. Epigenomics, encompassing DNA methylation, histone modifications, chromatin accessibility, and ncRNA expression, generates complex, high-dimensional datasets. Supervised and unsupervised learning offer complementary approaches to extract biological insight, drive biomarker discovery, and identify therapeutic targets, directly impacting translational drug development.

Core Paradigms: Comparative Analysis

Table 1: Supervised vs. Unsupervised Learning in Epigenomic Analysis

| Aspect | Supervised Learning | Unsupervised Learning |

|---|---|---|

| Primary Goal | Predict a known label/outcome (e.g., disease state, survival). | Discover inherent patterns, clusters, or structures without pre-defined labels. |

| Typical Input | Feature matrix (e.g., methylation β-values) + Label vector (e.g., Tumor/Normal). | Feature matrix only. |

| Common Algorithms | Random Forests, Gradient Boosting (XGBoost), LASSO, Support Vector Machines (SVM), Neural Networks. | k-means, Hierarchical Clustering, Principal Component Analysis (PCA), Autoencoders, Self-Organizing Maps. |

| Key Epigenomic Applications | Diagnostic/prognostic classifier development, QTL mapping (eQTL, meQTL), drug response prediction. | Novel disease subtype discovery, cell type deconvolution, identifying novel regulatory modules. |

| Data Requirements | Large, high-quality labeled datasets; prone to overfitting with small n, high p data. | No labels needed; robust to label scarcity but results can be harder to validate biologically. |

| Output Interpretation | Direct link between features and outcome; feature importance scores. | Requires downstream bioinformatic validation to attach biological meaning to clusters/components. |

| Recent Use Case (2023-2024) | Predicting glioblastoma patient survival from multi-omic (methylation+expression) data (AUC ~0.87). | Identifying novel autoimmune disease subtypes from chromatin accessibility (ATAC-seq) maps. |

Application Notes & Detailed Protocols

Application Note 1: Supervised Learning for Methylation-Based Cancer Diagnosis

Objective: Train a classifier to distinguish colorectal carcinoma (CRC) from normal colon tissue using Illumina EPIC array methylation data.

Protocol:

- Data Acquisition & Preprocessing:

- Source public data (e.g., TCGA-COAD, GEO GSE199057). Normalize β-values using minfi or SeSAMe pipelines.

- Perform quality control: Remove probes with detection p-value > 0.01 in >5% of samples, SNP-associated probes, and cross-reactive probes.

- Handle missing values: Impute using impute package (k-nearest neighbors method).

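- Differential Methylation Analysis: Use limma or DSS to select differentially methylated positions (DMPs; FDR < 0.01), or rank probes by variance and keep the top 10,000 most variably methylated probes (VMPs). This reduces dimensionality. Because DMP selection uses the class labels, run it on the training samples only (after the split described below) so that the hold-out test set does not influence feature choice.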
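The detection p-value filter and the variance-based probe ranking can be sketched in NumPy as below. The matrices are assumed to be laid out as probes x samples, the function names are hypothetical, and the SNP and cross-reactive probe filters (which need external annotation lists) are left out.

```python
import numpy as np


def detection_filter(det_p, p_cutoff=0.01, max_fail_frac=0.05):
    """Boolean mask of probes to keep.

    A probe is dropped when its detection p-value exceeds p_cutoff
    in more than max_fail_frac of the samples.
    """
    det_p = np.asarray(det_p, dtype=float)
    fail_frac = (det_p > p_cutoff).mean(axis=1)
    return fail_frac <= max_fail_frac


def top_variable_probes(beta, n_top):
    """Row indices of the n_top probes with the largest variance."""
    beta = np.asarray(beta, dtype=float)
    variances = np.nanvar(beta, axis=1)
    return np.argsort(variances)[::-1][:n_top]


rng = np.random.default_rng(0)
det_p = np.full((4, 20), 0.001)
det_p[1, :2] = 0.5  # probe 1 fails detection in 10% of samples
beta = rng.uniform(0.4, 0.6, size=(4, 20))
beta[3, :10] = 0.05  # probe 3 is strongly bimodal across samples
beta[3, 10:] = 0.95

keep = detection_filter(det_p)
ranked = top_variable_probes(beta[keep], n_top=2)
print(keep, ranked)
```

In a real analysis the variance ranking would be computed on training samples only, for the same reason given for DMP selection.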

Model Training & Validation:

- Split data (70/30) into training and hold-out test sets, stratifying by class label.

- Train a Random Forest Classifier (using scikit-learn):

- Input: Training data matrix (samples x 10,000 VMPs).

- Parameters: n_estimators=1000, max_features='sqrt', class_weight='balanced'.

- Perform 10-fold cross-validation on the training set to tune hyperparameters (e.g., max_depth).

- Evaluate on the hold-out test set. Report Precision, Recall, F1-Score, and ROC-AUC.

Interpretation & Biomarker Extraction:

- Extract Gini importance scores from the trained Random Forest.

- Identify the top 50 most important CpG probes for classification.

- Annotate these probes to genes (e.g., using IlluminaHumanMethylationEPICanno.ilm10b4.hg19) and perform pathway over-representation analysis (e.g., via g:Profiler).
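The training, validation, and interpretation steps above can be sketched with scikit-learn as follows. The data are synthetic (generated with `make_classification`) and stand in for a samples x probes matrix of β-values; the tree count and the small `max_depth` grid are reduced for speed and are assumptions, not the protocol's settings.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import classification_report, roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedKFold, train_test_split

# Synthetic stand-in for preprocessed methylation features (assumption).
X, y = make_classification(
    n_samples=200, n_features=500, n_informative=20, class_sep=2.0, random_state=0
)

# 70/30 split, stratified by class label.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0
)

forest = RandomForestClassifier(
    n_estimators=100,  # the protocol uses 1000; reduced here for speed
    max_features="sqrt",
    class_weight="balanced",
    random_state=0,
)

# 10-fold cross-validation on the training set to tune max_depth.
search = GridSearchCV(
    forest,
    param_grid={"max_depth": [5, None]},
    cv=StratifiedKFold(n_splits=10, shuffle=True, random_state=0),
    scoring="roc_auc",
)
search.fit(X_train, y_train)
best = search.best_estimator_

# Evaluate once on the hold-out test set.
test_auc = roc_auc_score(y_test, best.predict_proba(X_test)[:, 1])
print(classification_report(y_test, best.predict(X_test)))
print(f"ROC-AUC: {test_auc:.3f}")

# Gini importances; indices of the 50 most important features.
top_50 = np.argsort(best.feature_importances_)[::-1][:50]
```

With real data, the column indices in `top_50` would be mapped back to CpG probe IDs before annotation and pathway analysis.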

Table 2: Example Performance Metrics (Synthetic Data Representative of Recent Studies)

| Model | Test Accuracy | ROC-AUC | Key Top-Feature Example | Biological Relevance |

|---|---|---|---|---|

| Random Forest | 96.7% (±2.1) | 0.99 | cg10673833 (SEPT9 gene) | Known blood-based CRC biomarker. |

| XGBoost | 97.5% (±1.8) | 0.99 | cg17520407 (VIM gene) | Involved in epithelial-mesenchymal transition. |

| LASSO Logistic | 94.2% (±2.5) | 0.97 | cg25500086 (EYA4 gene) | Frequently methylated in CRC. |

Supervised Learning Workflow for Epigenomic Classification

Application Note 2: Unsupervised Learning for Discovery of Disease Subtypes

Objective: Identify novel molecular subtypes of Systemic Lupus Erythematosus (SLE) using unsupervised clustering of histone modification (H3K27ac) ChIP-seq data from patient peripheral blood mononuclear cells (PBMCs).

Protocol:

- Data Processing & Feature Construction:

- Process raw ChIP-seq FASTQ files: Align to hg38 with Bowtie2, call peaks with MACS2.

- Create a consensus peak set across all samples using DiffBind.

- Generate a count matrix (samples x consensus peaks) of normalized read counts (e.g., counts per million - CPM).

- Dimensionality Reduction: Apply Principal Component Analysis (PCA) to the top 25,000 most variable peaks. Retain the top 20 principal components (PCs) for downstream clustering.

Clustering & Subtype Discovery:

- Apply k-means Clustering (using scikit-learn) on the top 20 PCs.

- Determine the optimal cluster number (k) using the elbow method (within-cluster sum of squares) and the silhouette score across a range of k (2-10).

- For robust discovery, also apply hierarchical clustering (Ward's linkage) and compare results.

- Validate cluster stability using clusterboot (bootstrapping) or by assessing consensus across multiple algorithms.
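A minimal scikit-learn sketch of the dimensionality reduction and clustering steps is shown below. It runs on synthetic data from `make_blobs` as a stand-in for a samples x peaks matrix, uses 100 rather than 25,000 variable features, and checks agreement between k-means and Ward clustering with the adjusted Rand index; bootstrap stability analysis is not included.

```python
import numpy as np
from sklearn.cluster import AgglomerativeClustering, KMeans
from sklearn.datasets import make_blobs
from sklearn.decomposition import PCA
from sklearn.metrics import adjusted_rand_score, silhouette_score

# Synthetic stand-in for normalized peak signal (assumption).
X, _ = make_blobs(n_samples=90, n_features=300, centers=3, cluster_std=5.0, random_state=0)

# Keep the most variable features, then project onto the top 20 PCs.
top = np.argsort(X.var(axis=0))[::-1][:100]
pcs = PCA(n_components=20, random_state=0).fit_transform(X[:, top])

# Scan k = 2..10, recording inertia (for an elbow plot) and silhouette score.
inertias, silhouettes = {}, {}
for k in range(2, 11):
    km = KMeans(n_clusters=k, n_init=10, random_state=0).fit(pcs)
    inertias[k] = km.inertia_
    silhouettes[k] = silhouette_score(pcs, km.labels_)
best_k = max(silhouettes, key=silhouettes.get)

# Compare k-means with Ward hierarchical clustering at the chosen k.
kmeans_labels = KMeans(n_clusters=best_k, n_init=10, random_state=0).fit_predict(pcs)
ward_labels = AgglomerativeClustering(n_clusters=best_k, linkage="ward").fit_predict(pcs)
agreement = adjusted_rand_score(kmeans_labels, ward_labels)
print(best_k, round(agreement, 3))
```

An adjusted Rand index close to 1 indicates that the two algorithms recover essentially the same partition, which is one simple form of the cross-algorithm consensus mentioned above.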

Biological Characterization:

- Perform differential H3K27ac analysis between clusters (e.g., with DESeq2).

- Annotate subtype-specific super-enhancers to nearby genes. Perform pathway analysis on these genes.

- Correlate clusters with clinical variables (e.g., disease activity index, renal involvement) using chi-square or ANOVA tests.

Table 3: Example Clustering Results (Synthetic Data Representative of Recent Studies)

| Cluster (Subtype) | % of Cohort | Key Epigenetic Feature | Enriched Pathway (FDR < 0.05) | Clinical Correlation |

|---|---|---|---|---|

| C1: Interferon-High | 35% | High H3K27ac at IRF/STAT target genes | Antiviral Response, Type I IFN Signaling | Higher SLEDAI score (p=0.003) |

| C2: Metabolic | 25% | High H3K27ac at metabolic gene loci | Oxidative Phosphorylation, Fatty Acid Metabolism | Associated with anti-Ro antibodies (p=0.02) |

| C3: Inactive | 40% | Low global H3K27ac signal | None Significant | Lower serum dsDNA titers (p=0.01) |

Unsupervised Learning Workflow for Subtype Discovery

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents & Tools for AI-Epigenomics Research

| Item | Function in Protocol | Example Product/Resource |

|---|---|---|

| Methylation Array Kit | Genome-wide CpG methylation profiling from DNA. | Illumina Infinium MethylationEPIC v2.0 Kit |

| ChIP-seq Kit | Enrichment of DNA bound by specific histone modifications. | Active Motif ChIP-IT High Sensitivity Kit |

| ATAC-seq Kit | Mapping chromatin accessibility in nuclei. | 10x Genomics Chromium Next GEM Single Cell ATAC v2 |

| Bisulfite Conversion Kit | Converts unmethylated cytosine to uracil for methylation sequencing. | Zymo Research EZ DNA Methylation-Lightning Kit |

| ncRNA Library Prep Kit | Construction of sequencing libraries for small/long ncRNAs. | Takara Bio SMARTer smRNA-Seq Kit |

| Multi-Omic Database | Source of public data for training/validation. | TCGA, GEO, ENCODE, Roadmap Epigenomics |

| Analysis Software Suite | Integrated environment for preprocessing epigenomic data. | nf-core/methylseq, nf-core/chipseq, Galaxy Epigenomics |

| Cloud Compute Credit | Essential for running intensive AI training on large datasets. | AWS Credits for Research, Google Cloud Research Credits |

In the era of multi-omics data, the transition from raw epigenetic and non-coding RNA (ncRNA) data to biological insight is a central challenge. This document, framed within a thesis on AI-assisted analysis, defines core analytical goals and provides practical protocols for researchers and drug development professionals. AI methods are now indispensable for parsing the complexity of histone modifications, DNA methylation, and ncRNA interactions to derive testable hypotheses and biomarkers.

The primary computational goals in epigenetic and ncRNA research can be categorized, with their associated data types and common AI/statistical approaches summarized below.

Table 1: Common Analytical Goals in Epigenetic & ncRNA Research

| Analytical Goal | Primary Data Types | Key AI/Statistical Methods | Typical Output |

|---|---|---|---|

| Biomarker Detection | DNA methylation arrays, miRNA-seq, circRNA expression | Differential expression analysis (e.g., DESeq2, limma), Feature selection (LASSO, Random Forest), Deep learning (Autoencoders) | A shortlist of candidate biomarkers (e.g., hypermethylated genes, dysregulated miRNAs) with diagnostic/prognostic power. |

| Regulatory Network Inference | ChIP-seq, ATAC-seq, RNA-seq (coding & ncRNA), Hi-C | Correlation networks (WGCNA), Bayesian networks, GENIE3, Graph Neural Networks (GNNs) | A directed or undirected graph modeling regulatory interactions (e.g., transcription factor -> miRNA -> mRNA). |

| Functional Enrichment & Pathway Analysis | Gene/feature lists from differential analysis | Over-representation analysis (ORA), Gene Set Enrichment Analysis (GSEA), Ingenuity Pathway Analysis (IPA) | Significantly enriched biological pathways, GO terms, or disease associations. |

| Dimensionality Reduction & Clustering | Multi-omics matrices (methylation, expression) | PCA, t-SNE, UMAP, Variational Autoencoders (VAEs), Consensus Clustering | Discovery of novel disease subtypes or cellular states. |

Detailed Experimental Protocols

Protocol 1: AI-Assisted Biomarker Detection from Methylation and miRNA Data

Objective: To identify a robust, multi-modal biomarker signature for disease classification.

Materials & Workflow:

- Data Acquisition: Obtain matched DNA methylation (450k/EPIC array) and small RNA-seq data from case and control cohorts (minimum n=30 per group).

- Preprocessing:

- Methylation: Perform quality control (minfi R package), normalization (SWAN), and β-value calculation. Filter probes (remove cross-reactive, SNP-associated).

- miRNA-seq: Process raw reads with FastQC, adaptor trimming (Cutadapt), alignment (Bowtie2 to miRBase), and quantification (featureCounts). Normalize counts (TPM or DESeq2's median of ratios).

- Differential Analysis:

- For methylation, identify differentially methylated positions (DMPs) using limma (adjusted p-value < 0.05, |Δβ| > 0.1).

- For miRNA, identify differentially expressed miRNAs using DESeq2 (adjusted p-value < 0.05, |log2FC| > 1).

- Feature Selection & Integration:

- Concatenate top DMPs and DE miRNAs into a unified feature matrix.

- Apply LASSO logistic regression (glmnet R package) with 10-fold cross-validation to select a parsimonious, non-redundant feature set predictive of disease status.

- Validation: Assess biomarker panel performance on an independent validation cohort using AUC-ROC analysis.
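The feature selection step can be illustrated in Python with an L1-penalized logistic regression. This swaps the glmnet R package named above for scikit-learn's `LogisticRegressionCV`, so it is an analogous sketch rather than the same implementation; the data are synthetic and stand in for a concatenated matrix of DMPs and DE miRNAs.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegressionCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the unified feature matrix (assumption).
X, y = make_classification(
    n_samples=120, n_features=200, n_informative=10, random_state=0
)

# Standardize features so the L1 penalty treats methylation and miRNA
# features on a comparable scale, then tune the penalty by 10-fold CV.
model = make_pipeline(
    StandardScaler(),
    LogisticRegressionCV(
        Cs=10,
        cv=10,
        penalty="l1",
        solver="liblinear",
        scoring="roc_auc",
        max_iter=1000,
        random_state=0,
    ),
)
model.fit(X, y)

# Features with non-zero coefficients form the selected signature.
coefs = model[-1].coef_.ravel()
selected = np.flatnonzero(coefs)
print(f"{selected.size} of {coefs.size} features retained")
```

The L1 penalty shrinks most coefficients to exactly zero, which is what yields the parsimonious panel. Performance should still be judged on the independent validation cohort, not on the data used for selection.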

Protocol 2: Inferring a ceRNA Regulatory Network

Objective: To construct a competing endogenous RNA (ceRNA) network involving lncRNAs, circRNAs, and mRNAs.

Materials & Workflow:

- Data Acquisition: Total RNA-seq data from ribosomal RNA-depleted libraries of relevant tissue/cell lines, to capture lncRNA, circRNA, and mRNA expression (poly(A) selection alone would miss most circRNAs).

- Expression Quantification:

- mRNA/lncRNA: Align to reference genome (STAR), quantify expression (StringTie).

- circRNA: Identify and quantify using dedicated tools (CIRI2, CIRCexplorer2).

- Candidate Interaction Prediction:

- Identify shared miRNA response elements (MREs) using target-prediction resources (miRanda, TargetScan) or dedicated tools (SpongeScan).

- Calculate expression correlations (Pearson) between candidate ceRNA pairs (e.g., lncRNA-mRNA) across samples.

- Network Construction & AI Enhancement:

- Build an initial network where nodes are RNAs and edges represent significant shared miRNAs and positive expression correlation (p < 0.01).

- Refine the network using a Graph Neural Network (GNN) to prune false-positive edges and predict novel interactions based on topological features.

- Functional Validation: Select key hub nodes for experimental validation via siRNA knockdown and subsequent qPCR of predicted partners.
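The initial network construction step (shared miRNAs plus positive expression correlation) can be sketched as below. This is a simplified illustration: it counts shared miRNAs instead of testing their significance, applies no multiple-testing correction, and does not implement the GNN refinement. The function name, RNA names, and miRNA names are all hypothetical.

```python
from itertools import combinations

import numpy as np
from scipy.stats import pearsonr


def cerna_edges(expr, mirna_targets, alpha=0.01, min_shared=1):
    """Candidate ceRNA edges between RNA pairs.

    expr: dict of RNA name -> expression vector across the same samples.
    mirna_targets: dict of RNA name -> set of miRNAs predicted to bind it.
    Returns a list of (rna_a, rna_b, n_shared_mirnas, r, p_value).
    """
    edges = []
    for a, b in combinations(sorted(expr), 2):
        shared = mirna_targets.get(a, set()) & mirna_targets.get(b, set())
        if len(shared) < min_shared:
            continue
        r, p = pearsonr(expr[a], expr[b])
        # ceRNA pairs are expected to be positively co-expressed.
        if r > 0 and p < alpha:
            edges.append((a, b, len(shared), float(r), float(p)))
    return edges


rng = np.random.default_rng(0)
base = rng.normal(size=30)
expr = {
    "lncA": base + 0.1 * rng.normal(size=30),
    "mRNA1": 2 * base + 0.1 * rng.normal(size=30),
    "circB": rng.normal(size=30),
}
mirna_targets = {
    "lncA": {"miR-x1", "miR-x2"},
    "mRNA1": {"miR-x2", "miR-x3"},
    "circB": {"miR-x9"},
}
edges = cerna_edges(expr, mirna_targets)
print(edges)
```

The resulting edge list can be loaded into any graph library as the starting network for downstream refinement.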

Visualizing Workflows and Relationships

AI-Driven Biomarker Discovery Pipeline

ceRNA Network Core Hypothesis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Kits for Epigenetic & ncRNA Analysis

| Item | Function | Example Application |

|---|---|---|

| Methylation-Specific PCR (MSP) Kit | Amplifies DNA sequences based on methylation status at CpG islands. | Validation of differentially methylated regions identified from array/seq data. |

| miRNA Mimics & Inhibitors | Synthetic RNAs that increase or decrease functional activity of specific miRNAs. | Gain/loss-of-function experiments to validate miRNA-mRNA regulatory pairs. |

| ChIP-Grade Antibodies | High-specificity antibodies for histone modifications (H3K27ac, H3K9me3) or transcription factors. | Chromatin Immunoprecipitation to map regulatory element activity. |

| 4sU Labeling Reagents (e.g., 4-thiouridine) | Metabolic label for newly transcribed RNA, enabling nascent RNA capture. | Studying dynamic changes in ncRNA transcription upon perturbation. |

| CRISPR/dCas9 Epigenetic Editor Systems | dCas9 fused to modifiers (DNMT3A, TET1) for targeted DNA methylation/demethylation. | Functional validation of epigenetic regulatory elements. |

| circRNA-Specific cDNA Synthesis Kit | Contains random hexamers and exonuclease to degrade linear RNA, enriching for circular transcripts. | Accurate quantification of circRNA expression levels via qRT-PCR. |

| Multi-omics Integration Software (e.g., MOFA+) | Statistical framework for discovering latent factors across omics data types. | Unsupervised discovery of coordinated epigenetic and transcriptional programs. |

Within the broader thesis on AI-assisted analysis in epigenetic and non-coding RNA (ncRNA) research, establishing a robust computational foundation is paramount. This document details the essential bioinformatics skills and computational resources required to perform reproducible, scalable, and insightful AI-driven analyses. The integration of AI, particularly machine learning (ML) and deep learning (DL), into the study of DNA methylation, histone modifications, and ncRNA interactions demands a specialized toolkit and proficiency.

Core Bioinformatics Skills Prerequisites

Proficiency in the following areas is non-negotiable for researchers embarking on AI-assisted epigenetic and ncRNA analysis.

| Skill Category | Specific Competencies | Application in Epigenetic/ncRNA AI Analysis |

|---|---|---|

| Programming & Statistics | Python (NumPy, pandas, scikit-learn, PyTorch/TensorFlow), R (tidyverse, limma, DESeq2), Statistical inference (p-values, multiple testing correction) | Data preprocessing, feature engineering, implementing ML/DL models, differential analysis, result visualization. |

| Data Wrangling | Shell scripting (Bash), Regular Expressions, File format conversion (FASTQ, BAM, BED, Wig, BigWig) | Managing sequencing pipelines, batch processing, extracting relevant genomic regions, preparing input tensors for AI models. |

| Domain Knowledge | Understanding of key epigenetic marks (5mC, H3K27ac, etc.), ncRNA biogenesis & classes (miRNA, lncRNA, circRNA), Genomic coordinate systems | Informed feature selection, biologically relevant model architecture design, and accurate interpretation of AI model outputs. |

| ML/DL Fundamentals | Concepts of overfitting/underfitting, cross-validation, hyperparameter tuning, CNN/RNN architectures, embedding layers | Training models to predict enhancer regions, classify ncRNA functions, or impute missing chromatin accessibility data. |

| Version Control & Reproducibility | Git, GitHub/GitLab, Conda/Docker/Singularity, Workflow languages (Nextflow, Snakemake) | Maintaining code, sharing analyses, creating reproducible computational environments for complex AI pipelines. |

The scale of genomic data necessitates appropriate hardware and cloud strategies.

Quantitative Resource Comparison

| Resource Type | Minimum Viable Specs | Recommended for Active Research | Large-Scale/Production Specs |

|---|---|---|---|

| Local Workstation | 16 GB RAM, 4-core CPU, 1 TB HDD | 64-128 GB RAM, 12-16 core CPU, NVIDIA GPU (8+ GB VRAM), 2 TB SSD | Cluster node: 512GB+ RAM, 32+ cores, multiple high-end GPUs (e.g., A100/H100), high-speed parallel filesystem. |

| Cloud Compute (e.g., AWS, GCP) | Spot instances for batch jobs (e.g., r5.large) | On-demand GPU instances (e.g., g4dn.xlarge, p3.2xlarge) for model training. | Managed services (AWS SageMaker, GCP Vertex AI) for hyperparameter tuning and scalable DL training on multi-GPU setups. |

| Storage | 5-10 TB network-attached storage (NAS) | 50-100 TB scalable block or object storage (e.g., AWS S3, GCP Cloud Storage) with data lifecycle policies. | Petabyte-scale object storage with integrated metadata databases for cohort-level data (e.g., TCGA, ENCODE). |

| Memory/Data Handling | In-memory processing of single epigenomic assays (e.g., one ChIP-seq). | In-memory processing of multiple sample matrices for integrative analysis. | Use of chunking, memory-mapping (e.g., Zarr, TileDB) and out-of-core computation for genome-wide multi-omics data. |

Protocol: Setting Up a Reproducible AI Analysis Environment

Objective: Create a containerized environment for an AI analysis pipeline targeting differential methylation analysis.

Materials:

- Computer with Linux OS or Windows Subsystem for Linux (WSL2).

- Docker or Singularity installed.

- Git installed.

Procedure:

- Clone Pipeline Repository:

- Build Docker Image from Provided Dockerfile: The Dockerfile includes OS setup, Python/R dependencies, and key bioinformatics tools (bwa, samtools, deepTools).

- Run Container with Data and Output Mounts:

- Execute Initial Workflow Script Inside Container:

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in AI-Assisted Analysis |

|---|---|

| Reference Genome & Annotation (e.g., GRCh38.p14, GENCODE v44) | Provides the coordinate system and gene models for aligning sequencing reads and annotating AI-predicted genomic features. |

| Public Epigenomic Datasets (e.g., ENCODE, Roadmap Epigenomics, TCGA) | Serve as essential training data, validation benchmarks, and sources for transfer learning in AI model development. |

| Curation Databases (e.g., miRBase, lncRNAdb, GWAS Catalog) | Provide ground-truth associations for supervised learning tasks (e.g., linking miRNAs to target genes or epigenetic variants to diseases). |

| Specialized Software (e.g., Bismark for BS-seq, MACS3 for ChIP-seq peak calling, Seurat for single-cell) | Generate the standardized, high-quality input features (e.g., methylation counts, chromatin peaks, cell clusters) required for AI model training. |

| ML/DL Frameworks (e.g., PyTorch-Geometric for graph-based models on interaction networks, Selene for sequence-based DL) | Offer specialized libraries building upon core frameworks to model the unique structures of epigenetic and ncRNA data. |

| Hyperparameter Optimization Platforms (e.g., Weights & Biases, MLflow) | Track experiments, manage model versions, and systematically optimize complex AI model parameters across computational runs. |

Protocol: An AI Workflow for Integrating ncRNA and Chromatin Data

Objective: Predict enhancer-derived lncRNA activity using a convolutional neural network (CNN) integrating histone modification ChIP-seq and ATAC-seq data.

Materials:

- Processed ChIP-seq (H3K27ac, H3K4me1) and ATAC-seq signal tracks (BigWig format) from cell type of interest.

- Annotation of known enhancers and lncRNA TSS (BED format).

- Workstation/Server with NVIDIA GPU, CUDA drivers, and PyTorch installed.

Procedure:

- Feature Matrix Generation:

- Label Preparation: Annotate each enhancer region with a binary label (1/0) based on evidence of lncRNA transcription from overlapping CAGE data or GRO-cap.

- CNN Model Training (Python Script Excerpt):

- Model Evaluation: Perform k-fold cross-validation and assess performance using AUROC and AUPRC metrics on a held-out test set.
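The evaluation step above can be illustrated with a minimal, dependency-free sketch. It is not the original script excerpt: the function names `k_fold_indices` and `auroc` and the toy labels and scores are hypothetical, and AUROC is computed here through its pairwise-ranking definition (the probability that a positive example scores higher than a negative one, with ties counting as half).

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, test_idx) pairs for k interleaved folds."""
    folds = [list(range(i, n_samples, k)) for i in range(k)]
    for i in range(k):
        test_idx = folds[i]
        train_idx = [j for f in folds[:i] + folds[i + 1:] for j in f]
        yield train_idx, test_idx


def auroc(labels, scores):
    """AUROC as the fraction of (positive, negative) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


# Hypothetical enhancer labels (1 = lncRNA-producing) and model scores.
labels = [1, 1, 0, 0, 1, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.6, 0.2]
print(round(auroc(labels, scores), 3))
for train_idx, test_idx in k_fold_indices(len(labels), k=3):
    print(train_idx, test_idx)
```

In practice a library routine such as scikit-learn's `roc_auc_score` would replace the quadratic loop; the sketch only makes the definition of the metric explicit.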

Visualizations

AI-Assisted Epigenomics Analysis Workflow

Skills & Resources Converge for Robust Analysis

AI Models Decipher Epigenetic-ncRNA Crosstalk

From Raw Data to Biological Insight: AI Workflows and Real-World Applications

Within the broader thesis on AI-assisted analysis in epigenetic and non-coding RNA (ncRNA) research, a robust and standardized computational pipeline is foundational. This protocol details the critical pre-analytical steps required to transform raw, heterogeneous sequencing and array-based data into a structured, normalized, and feature-engineered dataset suitable for downstream AI/ML modeling. The goal is to ensure biological signals are maximized while technical artifacts and noise are minimized.

Data Preprocessing: Quality Control and Cleaning

The initial step involves assessing raw data quality and performing necessary filtering.

For Sequencing Data (e.g., ChIP-seq, ATAC-seq, RNA-seq for ncRNAs)

Protocol: Adapter Trimming and Quality Filtering using FastQC and Trimmomatic

- Quality Assessment: Run `FastQC` on raw FASTQ files to generate reports on per-base sequence quality, adapter contamination, and GC content.

- Trimming: Execute `Trimmomatic` in paired-end or single-end mode as required.

- Post-trimming QC: Re-run `FastQC` on trimmed files to confirm improvement.

For Microarray Data (e.g., Methylation 450k/EPIC arrays)

Protocol: Background Correction and Detection P-value Filtering using minfi (R/Bioconductor)

- Load Data: Read IDAT files into R using `minfi::read.metharray.exp`.

- Background Correction: Apply `minfi::preprocessNoob` for normalization and background correction.

- Probe Filtering: Remove probes with a detection p-value > 0.01 in more than 5% of samples. Remove cross-reactive probes and probes overlapping SNPs.
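The probe-filtering rule above is applied in R with minfi, but its logic can be shown in a few lines of Python. This is a sketch under stated assumptions: `filter_probes`, the probe IDs, and the toy p-values are hypothetical, and the detection p-values are assumed to have been exported already as one list per probe.

```python
def filter_probes(detection_p, p_cutoff=0.01, max_fail_fraction=0.05):
    """Return IDs of probes that pass the detection p-value filter.

    detection_p maps probe ID -> list of detection p-values (one per sample).
    A probe is dropped when more than `max_fail_fraction` of samples have a
    detection p-value above `p_cutoff`.
    """
    kept = []
    for probe_id, pvals in detection_p.items():
        n_failed = sum(1 for p in pvals if p > p_cutoff)
        if n_failed / len(pvals) <= max_fail_fraction:
            kept.append(probe_id)
    return kept


# Hypothetical toy data: 3 probes measured in 20 samples.
toy = {
    "cg_good": [0.001] * 20,
    "cg_borderline": [0.001] * 19 + [0.5],  # 1/20 = 5% failed, kept
    "cg_bad": [0.001] * 18 + [0.5, 0.2],    # 2/20 = 10% failed, removed
}
print(filter_probes(toy))
```

The comparison is written as "more than 5%", so a probe failing in exactly 5% of samples is retained, matching the wording of the protocol.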

Table 1: Standard QC Metrics and Thresholds for Epigenetic/ncRNA Data

| Data Type | QC Metric | Tool | Recommended Threshold | Action upon Failure |

|---|---|---|---|---|

| All NGS | Read Quality (Q-score) | FastQC | Q30 > 70% of bases | More aggressive trimming or exclude sample |

| All NGS | Adapter Content | FastQC | < 5% after trimming | Re-trim with specific adapter file |

| ChIP-seq | % Reads in Peaks (FRiP) | MACS2 | > 1% (broad), >5% (sharp) | Indicates poor enrichment; exclude sample |

| RNA-seq | Mapping Rate | STAR/HISAT2 | > 70% | Check sequencing adapter or reference genome |

| Methylation Array | Probe Detection p-value | minfi | p < 0.01 in >95% samples | Exclude probe from analysis |

Title: Preprocessing & QC Workflow for Multi-Omics Data

Data Normalization: Mitigating Technical Variability

Normalization corrects for systematic technical differences (e.g., sequencing depth, batch effects) to enable accurate biological comparison.

For ncRNA Sequencing (e.g., miRNA, lncRNA expression)

Protocol: TMM Normalization using edgeR (R/Bioconductor)

- Create DGEList: Load count matrix into a `DGEList` object.

- Calculate Normalization Factors: `calcNormFactors(object, method = "TMM")` applies the Trimmed Mean of M-values method to scale library sizes.

- Output: The normalized log2-counts-per-million (logCPM) can be extracted with `cpm(dge_object, log = TRUE)`.

For Chromatin Accessibility/Histone Mark Data (Peak Counts)

Protocol: Counts per Million (CPM) or DESeq2 Median-of-Ratios

- Simple CPM: `normalized_counts = (raw_counts / total_reads_per_sample) * 1,000,000`.

- Robust Normalization: Use the `DESeq2::vst()` or `DESeq2::rlog()` transformation, which includes a median-of-ratios normalization and variance stabilizing transformation ideal for downstream analysis.
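As a worked instance of the simple CPM formula above, the following sketch scales the peak counts of one sample by its library size. The function name `counts_per_million` and the toy counts are hypothetical; the median-of-ratios approach is not reproduced here.

```python
def counts_per_million(raw_counts):
    """Scale one sample's peak counts to counts per million reads."""
    total = sum(raw_counts)
    if total == 0:
        raise ValueError("sample has no reads")
    return [c * 1_000_000 / total for c in raw_counts]


# Two hypothetical samples with the same peak profile but a 10x depth difference.
shallow = [10, 30, 60]
deep = [100, 300, 600]
print(counts_per_million(shallow))
print(counts_per_million(deep))
```

Both samples map to the same CPM vector, which is the point of the normalization: differences in sequencing depth alone no longer separate them.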

For DNA Methylation Beta-Values

Protocol: Intra- and Inter-Array Normalization with wateRmelon (R)

- Subset-quantile Within Array Normalization (SWAN): Apply `wateRmelon::swan()` to correct for technical differences between Infinium I and II probe types.

- Batch Correction: Use `sva::ComBat()` or `limma::removeBatchEffect()` on M-values (the base-2 logit transformation of Beta-values, M = log2(β / (1 − β))) to adjust for processing batch or slide.
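The Beta-to-M conversion used before batch correction is a base-2 logit, M = log2(β / (1 − β)). A minimal sketch follows; the clipping constant `eps`, which keeps β values of exactly 0 or 1 finite, is an assumption of this example rather than part of the protocol.

```python
import math


def beta_to_m(beta, eps=1e-6):
    """Convert a methylation Beta-value in [0, 1] to an M-value."""
    b = min(max(beta, eps), 1.0 - eps)  # clip to avoid log of 0 or division by 0
    return math.log2(b / (1.0 - b))


def m_to_beta(m):
    """Inverse transform: M-value back to Beta-value."""
    return 2.0 ** m / (2.0 ** m + 1.0)


for beta in (0.1, 0.5, 0.8):
    m = beta_to_m(beta)
    print(beta, round(m, 3), round(m_to_beta(m), 3))
```

A β of 0.5 maps to M = 0, and values near 0 or 1 are stretched out, which is why M-values are usually preferred for linear-model-based batch adjustment.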

Table 2: Normalization Techniques by Data Type

| Data Type | Primary Method | Tool/Package | Key Assumption | Output |

|---|---|---|---|---|

| ncRNA-seq Counts | TMM / RLE | edgeR, DESeq2 | Most features are not differentially expressed. | logCPM, vst/rlog values |

| ChIP-seq/ATAC-seq Peak Counts | CPM / DESeq2 | edgeR, DESeq2 | Total signal per sample varies. | CPM, normalized counts |

| DNA Methylation (Array) | SWAN, BMIQ | minfi, wateRmelon | Probe type bias is technical. | Batch-corrected Beta/M-values |

| All (Batch Effect) | ComBat, limma | sva, limma | Batch effect is orthogonal to biology. | Batch-adjusted matrix |

Feature Engineering: Deriving Biologically Meaningful Predictors

Feature engineering creates new input variables to improve AI model performance and interpretability.

From Genomic Coordinates to Genomic Context

Protocol: Annotate Peaks/Regions with ChIPseeker (R/Bioconductor)

- Load Data: Import BED files of called peaks.

- Annotation: `annotatePeak(peak_file, tssRegion=c(-3000, 3000), TxDb=TxDb.Hsapiens.UCSC.hg38.knownGene)` assigns each peak to genomic features (promoter, intron, exon, intergenic).

- Create Features: Generate binary or proportional features: "Peak in Promoter of Gene X", "Number of peaks within 50kb of TSS".

Creating Combinatorial Epigenetic Signals

Protocol: Define Enhancer-like Regions from H3K27ac and H3K4me1

- Intersect Peaks: Use `bedtools intersect` to find genomic regions with both H3K27ac and H3K4me1 peaks, excluding regions with H3K4me3 (promoter mark).

- Quantify Activity: Count reads in these predicted enhancer regions for each sample to form an "enhancer activity" matrix.
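The interval logic behind the enhancer definition above can be sketched without bedtools. The example assumes half-open (start, end) intervals on a single chromosome; the function names and coordinates are hypothetical, and the nested loops are for clarity rather than genome-scale performance.

```python
def overlaps(a, b):
    """True if half-open intervals a and b share at least one base."""
    return a[0] < b[1] and b[0] < a[1]


def intersect(peaks_a, peaks_b):
    """Return the overlapping segments between two peak lists."""
    shared = []
    for a in peaks_a:
        for b in peaks_b:
            if overlaps(a, b):
                shared.append((max(a[0], b[0]), min(a[1], b[1])))
    return sorted(shared)


def enhancer_like(h3k27ac, h3k4me1, h3k4me3):
    """Regions marked by H3K27ac and H3K4me1 that do not touch H3K4me3."""
    shared = intersect(h3k27ac, h3k4me1)
    return [r for r in shared if not any(overlaps(r, p) for p in h3k4me3)]


# Hypothetical peak coordinates on one chromosome.
h3k27ac = [(100, 500), (1000, 1600), (3000, 3400)]
h3k4me1 = [(300, 700), (1200, 1500), (5000, 5200)]
h3k4me3 = [(1100, 1300)]
print(enhancer_like(h3k27ac, h3k4me1, h3k4me3))
```

The second co-marked region is discarded because it overlaps the H3K4me3 peak, mirroring a `bedtools intersect` call followed by a second call with `-v` against the promoter-mark file.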

ncRNA-gene Interaction Features

Protocol: Predict miRNA-mRNA Interactions using multiMiR

- Get Target Lists: For a miRNA of interest, use `multiMiR::get_multimir()` to retrieve validated and predicted mRNA targets from multiple databases.

- Integrate with Expression: For a given sample, create a feature like "mean expression of confirmed targets of miRNA-X".
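Once a target list has been retrieved, the "mean expression of confirmed targets" feature reduces to a lookup and an average. In this sketch the gene names, expression values, and the function `mean_target_expression` are all hypothetical placeholders; targets missing from the expression table are skipped.

```python
def mean_target_expression(expression, targets):
    """Average expression of a miRNA's targets in one sample.

    expression maps gene ID -> normalized expression value.
    Returns None when none of the targets were measured.
    """
    values = [expression[g] for g in targets if g in expression]
    if not values:
        return None
    return sum(values) / len(values)


# Hypothetical normalized expression for one sample.
sample_expression = {"GENE_A": 8.0, "GENE_B": 4.0, "GENE_C": 6.0}
mirna_x_targets = ["GENE_A", "GENE_B", "GENE_D"]  # GENE_D was not measured
print(mean_target_expression(sample_expression, mirna_x_targets))
```

Computing this value per sample and per miRNA yields one column of the feature matrix for each miRNA of interest.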

Table 3: Examples of Engineered Features for AI/ML Input

| Feature Category | Example Feature | Construction Method | Potential Biological Meaning |

|---|---|---|---|

| Genomic Context | "Promoter Accessibility Score" | Mean ATAC-seq signal ±2kb from all TSS. | Transcriptional potential |

| Combinatorial | "Active Enhancer Count" | Number of H3K27ac+/H3K4me1+/H3K4me3- regions. | Regulatory landscape complexity |

| Interaction | "miRNA Regulatory Burden" | Sum of expression of a miRNA's predicted targets. | miRNA activity level |

| Dimensionality Reduction | "PC1 of Methylation" | First principal component of top variable CpGs. | Major source of methylation variation |

Title: Feature Engineering Pathways for AI Model Input

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Toolkit for Epigenetic/ncRNA Data Analysis Pipelines

| Item / Solution | Function / Purpose | Example (Provider) |

|---|---|---|

| NGS Library Prep Kit | Prepares DNA/RNA for sequencing with adapters. | KAPA HyperPrep Kit (Roche), NEBNext Ultra II (NEB) |

| Methylation Array Kit | Processes bisulfite-converted DNA for array analysis. | Infinium MethylationEPIC Kit (Illumina) |

| ChIP-grade Antibody | Specifically immunoprecipitates target histone mark or protein. | Anti-H3K27ac (Abcam, CST), Anti-H3K4me3 (Millipore) |

| Bisulfite Conversion Kit | Converts unmethylated cytosine to uracil for methylation analysis. | EZ DNA Methylation Kit (Zymo Research) |

| Small RNA Isolation Kit | Enriches for miRNAs and other small ncRNAs. | mirVana miRNA Isolation Kit (Thermo Fisher) |

| Cross-linking Reagent | Fixes protein-DNA interactions for ChIP-seq. | Formaldehyde (37%), DSG (Disuccinimidyl glutarate) |

| RNase Inhibitor | Prevents degradation of RNA during ncRNA experiments. | Recombinant RNase Inhibitor (Takara) |

| Size Selection Beads | Cleans up and selects desired fragment sizes post-library prep. | SPRIselect Beads (Beckman Coulter) |

Within the thesis "AI-Assisted Integrative Analysis of Epigenetic and Non-Coding RNA Data for Novel Therapeutic Target Discovery," selecting the correct deep learning architecture is paramount. Epigenetic marks (e.g., DNA methylation, histone modifications) and ncRNA (e.g., miRNA, lncRNA) expression form a complex, dynamic, and interconnected regulatory system. This document provides application notes and protocols for applying Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Graph Neural Networks (GNNs) to specific data modalities within this research, ensuring biologically meaningful and computationally efficient model selection.

Application Notes & Protocols

CNNs for Genomic Sequence Data (e.g., Predicting Transcription Factor Binding Sites)

CNNs excel at extracting local, translation-invariant features from one-hot encoded DNA sequences, making them ideal for cis-regulatory element prediction.

Protocol: CNN-based Prediction of Chromatin Accessibility from DNA Sequence

- Objective: Train a CNN to predict ATAC-seq or DNase-seq peaks (binary classification) using only the underlying genomic sequence (±500bp around peak summit).

- Input Data Preparation:

- Obtain peak coordinates (.bed files) from your epigenetic assay.

- Extract corresponding genomic sequences from a reference genome (e.g., GRCh38) using `bedtools getfasta`.

- Generate negative control sequences by randomly sampling genomic regions not in peaks, matched for GC content and length.

- One-hot encode sequences: 'A'→[1,0,0,0], 'C'→[0,1,0,0], 'G'→[0,0,1,0], 'T'→[0,0,0,1], 'N'→[0,0,0,0].

- Model Architecture (Example):

- Input Layer: (1000, 4) tensor.

- Conv Layer 1: 64 filters, kernel size=12, activation='relu'.

- MaxPooling1D: pool size=4.

- Conv Layer 2: 32 filters, kernel size=6, activation='relu'.

- GlobalMaxPooling1D.

- Dense Layer: 32 units, activation='relu', dropout=0.3.

- Output Layer: 1 unit, activation='sigmoid' (binary classification).

- Training: Use binary cross-entropy loss, Adam optimizer. Validate on a held-out chromosome.
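The one-hot encoding and the layer dimensions in the protocol above can be checked with a short sketch. The helper names are hypothetical, and the length arithmetic assumes stride-1 convolutions without padding and non-overlapping pooling windows with the remainder discarded; other padding choices would give different lengths.

```python
ENCODING = {
    "A": [1, 0, 0, 0],
    "C": [0, 1, 0, 0],
    "G": [0, 0, 1, 0],
    "T": [0, 0, 0, 1],
}


def one_hot(sequence):
    """Encode a DNA string as a list of 4-element rows; unknown bases become zeros."""
    return [list(ENCODING.get(base, [0, 0, 0, 0])) for base in sequence.upper()]


def conv_output_length(length, kernel_size):
    """Output length of a stride-1 convolution without padding."""
    return length - kernel_size + 1


def pool_output_length(length, pool_size):
    """Output length of non-overlapping pooling (remainder discarded)."""
    return length // pool_size


encoded = one_hot("ACGTN")
after_conv1 = conv_output_length(1000, 12)       # Conv Layer 1
after_pool = pool_output_length(after_conv1, 4)  # MaxPooling1D
after_conv2 = conv_output_length(after_pool, 6)  # Conv Layer 2
print(encoded)
print(after_conv1, after_pool, after_conv2)
```

Global max pooling then collapses the remaining positions to one value per filter, so the dense layer receives a 32-element vector regardless of the input length.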

Table 1: Quantitative Performance Benchmark of CNN Architectures on Human ENCODE DNase-seq Data

| Architecture | Test AUC | Test Accuracy | Params (M) | Primary Use Case |

|---|---|---|---|---|

| DeepSEA (Baseline) | 0.925 | 0.872 | ~50 | Broad chromatin feature prediction |

| 1D-CNN (Protocol) | 0.912 | 0.861 | ~0.8 | Rapid, focused peak prediction |

| Hybrid CNN-BiLSTM | 0.928 | 0.878 | ~12 | Capturing weak long-range dependencies |

| Dilated CNN | 0.918 | 0.865 | ~5.2 | Modeling wider sequence context efficiently |

RNNs/LSTMs for Longitudinal Time-Series Data (e.g., Cellular Differentiation)

RNNs, particularly Long Short-Term Memory (LSTM) networks, model sequential dependencies, ideal for pseudo-time series of epigenetic states during dynamic processes.

Protocol: LSTM for Modeling ncRNA Expression Dynamics During Cell Differentiation

- Objective: Model the temporal progression of lncRNA expression from single-cell RNA-seq data ordered along a pseudo-time trajectory.

- Input Data Preparation:

- Perform pseudo-time ordering on scRNA-seq data using tools like Monocle3 or PAGA.

- Extract expression matrices for high-variance lncRNAs across ordered cells.

- Create sequential windows (length t) of expression vectors. Each sample is a sequence of t time points, each a vector of lncRNA expression values. The target can be the next time point's expression (regression) or a later phenotypic state (classification).

- Model Architecture (Many-to-One for Classification):

- Input Layer: Shape (`sequence_length`, `num_lncRNAs`).

- Masking Layer: To handle any missing data points.

- LSTM Layer 1: 128 units, return_sequences=True.

- Dropout: 0.2.

- LSTM Layer 2: 64 units, return_sequences=False.

- Dense Layer: 32 units, activation='relu'.

- Output Layer: `num_classes` units, activation='softmax'.

- Training: Use categorical cross-entropy loss, Adam optimizer. Sequence length (t) is a critical hyperparameter.
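The windowing step in the input preparation above can be sketched as follows for the next-step regression variant. `make_windows` and the toy expression vectors are hypothetical; cells are assumed to be already sorted by pseudo-time.

```python
def make_windows(ordered_cells, t):
    """Build sliding windows of length t over pseudo-time-ordered cells.

    Returns (windows, targets): window i holds cells i .. i+t-1 and its
    target is the expression vector of cell i+t (next-step regression).
    """
    windows, targets = [], []
    for start in range(len(ordered_cells) - t):
        windows.append(ordered_cells[start:start + t])
        targets.append(ordered_cells[start + t])
    return windows, targets


# Hypothetical data: 6 ordered cells, 2 lncRNAs each.
cells = [[0.1, 1.0], [0.2, 0.9], [0.4, 0.7], [0.6, 0.5], [0.8, 0.3], [0.9, 0.1]]
windows, targets = make_windows(cells, t=3)
print(len(windows), len(windows[0]), len(windows[0][0]))
print(targets[0])
```

Each window has shape (`sequence_length`, `num_lncRNAs`), matching the input layer of the architecture; for the classification variant the target would instead be the phenotypic label attached to the trajectory.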

Table 2: LSTM Performance on Simulated scRNA-seq Time-Series of Myeloid Differentiation

| Target Prediction Task | Sequence Length (t) | Model | Mean Absolute Error (Reg.) / F1-Score (Class.) |

|---|---|---|---|

| Next-step expression (Reg.) | 5 | LSTM | 0.084 (Expression, scaled) |

| Final cell fate (Class.) | 10 | Stacked LSTM | 0.91 |

| Final cell fate (Class.) | 10 | Simple RNN | 0.82 |

| Final cell fate (Class.) | 10 | Temporal CNN | 0.89 |

GNNs for Molecular Interaction Networks (e.g., ncRNA-Gene-Protein Pathways)

GNNs operate on graph-structured data, perfect for modeling heterogeneous biological networks involving ncRNAs, genes, proteins, and diseases.

Protocol: GNN for Predicting Novel miRNA-Disease Associations

- Objective: Train a GraphSAGE model on a heterogeneous network to rank potential miRNA-disease links.

- Graph Construction:

- Nodes: miRNA nodes, gene/protein nodes, disease nodes (from databases like miRBase, STRING, DisGeNET).

- Edges: miRNA-gene (targeting, from TarBase), gene-gene (PPI, from STRING), gene-disease (association, from DisGeNET). Edge types are recorded.

- Features: Node features can be miRNA sequence k-mer frequencies, gene GO term vectors, disease MeSH term vectors.

- Model Architecture (Heterogeneous GraphSAGE):

- Sampling: For each target node, sample a fixed-size neighborhood (e.g., 10 neighbors per hop, 2 hops).

- Aggregation: For each node, aggregate feature information from its sampled neighbors using a mean aggregator, separately for each edge type.

- Update: Combine the node's current features with the aggregated neighbor features via a learnable weight matrix and non-linearity.

- Prediction: After K GraphSAGE layers, obtain node embeddings. For a (miRNA, disease) pair, concatenate their embeddings and pass through an MLP for binary classification.

- Training: Use negative sampling (random miRNA-disease pairs) and binary cross-entropy loss.
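The sampling and aggregation steps of the protocol above can be illustrated without a graph library. This sketch covers only neighbor sampling and per-edge-type mean aggregation; the learnable weight matrix and non-linearity of the update step are deliberately omitted, and all node names, features, and function names are hypothetical.

```python
import random


def sample_neighbors(adjacency, node, k, rng):
    """Sample at most k neighbors of a node for one edge type."""
    neighbors = adjacency.get(node, [])
    if len(neighbors) <= k:
        return list(neighbors)
    return rng.sample(neighbors, k)


def mean_aggregate(features, neighbors):
    """Element-wise mean of neighbor feature vectors (zeros if no neighbors)."""
    dim = len(next(iter(features.values())))
    if not neighbors:
        return [0.0] * dim
    return [sum(features[n][d] for n in neighbors) / len(neighbors) for d in range(dim)]


def sage_input(features, adjacency_by_type, node, k, rng):
    """Concatenate a node's own features with one aggregate per edge type."""
    combined = list(features[node])
    for edge_type in sorted(adjacency_by_type):
        neighbors = sample_neighbors(adjacency_by_type[edge_type], node, k, rng)
        combined.extend(mean_aggregate(features, neighbors))
    return combined


# Hypothetical toy graph with 2-dimensional node features.
features = {
    "miR_1": [1.0, 0.0],
    "gene_A": [0.0, 2.0],
    "gene_B": [2.0, 4.0],
    "disease_X": [3.0, 3.0],
}
adjacency_by_type = {
    "miRNA-gene": {"miR_1": ["gene_A", "gene_B"]},
    "gene-disease": {"gene_A": ["disease_X"]},
}
print(sage_input(features, adjacency_by_type, "miR_1", k=10, rng=random.Random(0)))
```

In a full model the concatenated vector would be multiplied by a learned weight matrix and passed through a non-linearity, and the procedure would be repeated for each hop.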

Table 3: GNN Model Comparison on the HMDD v3.2 miRNA-Disease Association Dataset

| Model Type | AUC | AP | Key Advantage for Epigenetics/ncRNA |

|---|---|---|---|

| GraphSAGE (Protocol) | 0.886 | 0.812 | Inductive; handles unseen nodes |

| GAT (Graph Attention) | 0.879 | 0.798 | Learns importance of different neighbors |

| Matrix Factorization (Baseline) | 0.832 | 0.741 | Simple, but cannot use network topology |

| GCN (Transductive) | 0.880 | 0.805 | Simpler but less flexible on new graphs |

Visualizations

Diagram 1: AI Model Selection Workflow for Epigenetic/ncRNA Data

Diagram 2: GNN Protocol for miRNA-Disease Association Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Reagents & Tools for AI-Ready Epigenetic/ncRNA Data Generation

| Reagent/Tool | Provider/Example | Function in Context |

|---|---|---|

| ATAC-seq Kit | Illumina Tagmentase TDE1, 10x Genomics Chromium Next GEM | Profiles chromatin accessibility, generating sequence data for CNN models. |

| scRNA-seq Kit | 10x Genomics Chromium Single Cell 3', Parse Biosciences Evercode | Captures transcriptome (incl. ncRNA) of single cells, enabling pseudo-time series for RNNs. |

| CUT&Tag Kit | Cell Signaling Technology, EpiCypher | Maps histone modifications or TF binding with low input, providing precise genomic coordinates. |

| MirCury LNA miRNA PCR | Qiagen | Validates expression levels of specific miRNAs predicted by GNN models. |

| ChIRP RNA Kit | MilliporeSigma | Identifies genomic binding sites of specific lncRNAs, defining edges for network graphs. |

| Methylation Array | Illumina Infinium MethylationEPIC | Provides genome-wide CpG methylation quantitative data for time-series or integrative analysis. |

| Graph Database | Neo4j, Amazon Neptune | Stores and queries heterogeneous biological network data for efficient GNN preprocessing. |

| DL Framework | PyTorch Geometric, TensorFlow/Keras | Implements CNN, RNN, and GNN models with GPU acceleration and pre-built layers. |

Application Notes

Thesis Context: Within the broader investigation of AI-assisted epigenetic and non-coding RNA (ncRNA) analysis, deep learning (DL) models applied to DNA methylation data offer a transformative approach for molecular subtyping. This enables precise stratification of heterogeneous diseases, which is critical for developing targeted therapies and understanding disease mechanisms.

Current State: Recent studies (2023-2024) demonstrate that convolutional neural networks (CNNs) and transformer-based architectures have become dominant for analyzing high-dimensional methylation array data (e.g., Illumina EPIC arrays). These models directly learn from β-values or M-values to identify complex, non-linear patterns associated with clinical subtypes in cancers, neurological disorders, and autoimmune diseases.

Key Findings:

- Performance: DL models consistently outperform traditional machine learning (e.g., Random Forests, SVM) and conventional bioinformatics pipelines (e.g., based on differential methylation regions) in subtype prediction accuracy, particularly for solid tumors with high cellular heterogeneity.

- Data Efficiency: Hybrid architectures combining autoencoders for dimensionality reduction with supervised classifiers show promise in scenarios with limited sample sizes (<500 samples).

- Interpretability: Post-hoc explainable AI (XAI) methods, such as SHAP and integrated gradients, are now routinely applied to identify CpG loci and genomic regions most influential to the model's decision, linking predictions to biological pathways.

Quantitative Comparison of Recent DL Architectures for Methylation-Based Subtyping:

Table 1: Performance comparison of selected deep learning models (2023-2024).

| Model Architecture | Primary Disease Focus (Study) | Input Data | Reported Accuracy | Key Advantage |

|---|---|---|---|---|

| 1D-CNN + Attention | Glioblastoma Multiforme (GBM) | EPIC array β-values | 94.2% | Captures local CpG dependencies. |

| MethylNet | Pan-Cancer (TCGA) | 450K/EPIC array M-values | 91.7% (avg.) | Incorporates biological hierarchy. |

| Transformer Encoder | Colorectal Cancer (CRC) | EPIC array β-values | 96.5% | Models long-range genomic interactions. |

| Hybrid AE + Classifier | Breast Cancer Subtypes | Reduced-dimension features | 93.8% | Effective for smaller datasets (N~300). |

Protocols

Protocol 1: End-to-End Deep Learning Pipeline for Methylation Subtype Prediction

I. Data Acquisition & Preprocessing

- Source Data: Download DNA methylation β-value matrices (IDAT files processed via `minfi` or `SeSAMe`) from public repositories (e.g., GEO, TCGA) or generate in-house.

- Quality Control: Remove probes with detection p-value > 0.01 in >5% of samples, cross-reactive probes, and probes on sex chromosomes for non-sex-specific studies.

- Normalization: Perform intra-array normalization (e.g., BMIQ) to correct for Type-I/II probe design bias.

- Missing Value Imputation: Use k-nearest neighbors (KNN) imputation (`sklearn.impute.KNNImputer`) for missing β-values.

- Data Partitioning: Split data into Training (70%), Validation (15%), and held-out Test (15%) sets, ensuring balanced subtype representation via stratified splitting.
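The stratified 70/15/15 partition described above can be sketched in plain Python. The function `stratified_split`, the seed, and the toy labels are hypothetical; per-class counts are rounded, and any remainder falls into the test set.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split sample indices into train/val/test while preserving class balance."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for label in sorted(by_class):
        idx = by_class[label]
        rng.shuffle(idx)
        n_train = round(fractions[0] * len(idx))
        n_val = round(fractions[1] * len(idx))
        train += idx[:n_train]
        val += idx[n_train:n_train + n_val]
        test += idx[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Hypothetical cohort: 40 samples of subtype A, 20 of subtype B.
labels = ["A"] * 40 + ["B"] * 20
train, val, test = stratified_split(labels)
print(len(train), len(val), len(test))
```

With scikit-learn available, two successive calls to `train_test_split` with the `stratify` argument achieve the same result.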

II. Model Architecture & Training (Example: 1D-CNN)

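- Input: Vector of ~700,000 β-values per sample (aligned to a consistent probe ordering). Add a channel dimension for 1D convolution: (N_probes, 1) in channels-last frameworks such as Keras, or (1, N_probes) in channels-first frameworks such as PyTorch.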

- Architecture:

- Layer 1: 1D Convolution (filters=128, kernel_size=7, activation='relu')

- Layer 2: MaxPooling1D(pool_size=3)

- Layer 3: 1D Convolution (filters=64, kernel_size=5, activation='relu')

- Layer 4: GlobalAveragePooling1D()

- Layer 5: Dense(units=32, activation='relu')

- Output Layer: Dense(units=n_subtypes, activation='softmax')

- Training: Use Adam optimizer (lr=1e-4), categorical cross-entropy loss, batch size=32, for up to 200 epochs with early stopping (patience=20) on validation loss.
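The early-stopping rule in the training step above can be made explicit with a small sketch. It models only the stopping logic on a list of recorded validation losses; `early_stopping_epoch` and the loss values are hypothetical, and epochs are counted from 0.

```python
def early_stopping_epoch(val_losses, patience=20):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops at the first epoch where the loss has failed to improve
    on the best value for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch
    return len(val_losses) - 1, best_epoch


# Hypothetical validation-loss curve; a short patience makes the stop visible.
losses = [1.0, 0.8, 0.7, 0.75, 0.72, 0.71, 0.9]
print(early_stopping_epoch(losses, patience=3))
```

Deep learning frameworks provide this behavior as a callback (for example Keras `EarlyStopping` with `patience=20`), usually combined with restoring the weights from the best epoch.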

III. Model Interpretation

- Apply XAI: Compute SHAP values (`DeepExplainer` from the `shap` library) using a background of 100 randomly selected training samples.

- Identify Top Probes: Extract the top 1000 CpG probes with the highest mean absolute SHAP values.

- Functional Enrichment: Annotate top probes to genes and perform pathway enrichment analysis (e.g., via `gometh` in the `missMethyl` R package) to derive biological insights.
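The probe-ranking step above reduces to averaging absolute attributions across samples. The sketch assumes the SHAP values for one output class are already available as a samples-by-probes matrix; the function name, probe IDs, and values are hypothetical.

```python
def top_probes_by_shap(shap_values, probe_ids, top_n=1000):
    """Rank probes by mean absolute SHAP value across samples.

    shap_values is a list of rows (one per sample), each holding one SHAP
    value per probe in the same order as probe_ids.
    """
    n_samples = len(shap_values)
    mean_abs = [
        sum(abs(row[j]) for row in shap_values) / n_samples
        for j in range(len(probe_ids))
    ]
    ranked = sorted(zip(probe_ids, mean_abs), key=lambda pair: pair[1], reverse=True)
    return ranked[:top_n]


# Hypothetical SHAP matrix: 2 samples x 3 probes.
shap_matrix = [[0.1, -0.5, 0.0], [-0.3, 0.4, 0.05]]
probes = ["cg_a", "cg_b", "cg_c"]
for probe, score in top_probes_by_shap(shap_matrix, probes, top_n=2):
    print(probe, round(score, 3))
```

Taking the absolute value before averaging matters: a probe that pushes predictions strongly in opposite directions for different samples would otherwise appear unimportant.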

Protocol 2: Validation via Independent Cohort & Biological Corroboration

- Technical Validation: Apply the trained model to an independent, publicly available methylation dataset for the same disease. Assess concordance of predicted subtypes with reported clinical/molecular labels (Cohen's Kappa).

- Biological Validation:

- For each predicted subtype, perform differential methylation analysis (limma on M-values) to identify subtype-specific hyper/hypo-methylated regions.

- Integrate with matched transcriptomic data (if available) to validate inverse correlation between promoter methylation and gene expression for key subtype marker genes.

- Perform gene set variation analysis (GSVA) to link subtypes to known oncogenic or immune pathways.
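Cohen's Kappa, used above for technical validation, corrects the raw agreement between predicted and reported subtypes for agreement expected by chance: κ = (p_o − p_e) / (1 − p_e). A minimal sketch with hypothetical labels:

```python
from collections import Counter


def cohens_kappa(predicted, reference):
    """Cohen's Kappa between two label sequences of equal length."""
    n = len(predicted)
    observed = sum(p == r for p, r in zip(predicted, reference)) / n
    pred_counts = Counter(predicted)
    ref_counts = Counter(reference)
    classes = set(pred_counts) | set(ref_counts)
    expected = sum(pred_counts[c] * ref_counts[c] for c in classes) / (n * n)
    if expected == 1.0:
        return float("nan")  # undefined when both raters use a single class
    return (observed - expected) / (1.0 - expected)


# Hypothetical subtype calls for 8 samples from an independent cohort.
predicted = ["A", "A", "B", "B", "A", "B", "A", "B"]
reference = ["A", "A", "B", "B", "A", "B", "B", "A"]
print(cohens_kappa(predicted, reference))
```

Here six of eight calls agree (p_o = 0.75) while balanced classes give p_e = 0.5, so κ = 0.5. scikit-learn offers the same statistic as `cohen_kappa_score`.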

Visualizations

Title: DNA Methylation Deep Learning Analysis Workflow

Title: 1D-CNN Architecture for Methylation Data

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions & Materials.

| Item | Supplier/Example | Function in Protocol |

|---|---|---|

| Illumina Infinium MethylationEPIC v2.0 BeadChip Kit | Illumina | Genome-wide profiling of >935,000 CpG methylation sites. Primary data generation. |

| minfi R/Bioconductor Package | Open Source | Comprehensive pipeline for reading, QC, normalization, and preprocessing of IDAT files. |

| SeSAMe R/Bioconductor Package | Open Source | Alternative pipeline offering improved precision and accuracy for methylation data processing. |

| TensorFlow/PyTorch with CUDA | Google / Meta | Deep learning frameworks for building and training custom neural network models. |

| SHAP (SHapley Additive exPlanations) Library | Open Source | Post-hoc model interpretation to identify influential CpG sites for predictions. |

| missMethyl R/Bioconductor Package | Open Source | Performs gene set enrichment analysis for methylation data, correcting for probe bias. |

| Reference Methylome (e.g., leukocyte, placenta) | Public Repositories | Used as a normalization baseline or control in certain analysis pipelines. |

| Genomic DNA Bisulfite Conversion Kit | Zymo Research, Qiagen | Essential pre-array step converting unmethylated cytosines to uracil, preserving methylated ones. |

Within the broader thesis of AI-assisted analysis of epigenetic and ncRNA data, identifying functional lncRNA-miRNA-mRNA (ceRNA) networks represents a critical application. These networks, where long non-coding RNAs (lncRNAs) act as molecular sponges for miRNAs, thereby modulating mRNA expression, are pivotal in regulating cellular processes and disease pathogenesis. AI models, particularly graph neural networks (GNNs) and multimodal deep learning, are now essential for integrating multi-omics data (e.g., transcriptomic, epigenetic, and clinical data) to deconvolute these complex, context-specific interactions and prioritize them for experimental validation and therapeutic targeting.

Key Quantitative Data & AI Performance

Table 1: Performance Metrics of AI Models in ceRNA Network Prediction (2023-2024 Benchmarks)

| Model Type | Data Sources Integrated | Average Precision (AP) | AUC-ROC | Key Limitation Addressed |

|---|---|---|---|---|

| Graph Neural Network (GNN) | Expression, sequence, known interactions | 0.78 | 0.89 | Captures topological network features. |

| Multimodal DNN | Expression, epigenetic marks (H3K27ac), RBP motifs | 0.82 | 0.91 | Integrates regulatory layers beyond expression. |

| Ensemble Model (RF+GNN) | Expression, clinical outcome, miRNA targets | 0.85 | 0.93 | Reduces false positives via consensus. |

| Transformer-based | Single-cell RNA-seq, spatial transcriptomics | 0.80 | 0.90 | Models cell-type-specific networks. |

Table 2: Source Databases for AI-Driven ceRNA Network Construction

| Database | Data Type | Primary Use in AI Pipeline | Update Frequency |

|---|---|---|---|

| starBase, miRBase | miRNA annotations (miRBase) and CLIP-seq-supported miRNA-target interactions (starBase) | Ground truth for training/validation | Biannual |

| LNCipedia, NONCODE | lncRNA sequences & annotations | Feature extraction | Annual |

| TCGA, GEO | Disease vs. normal expression profiles | Context-specific network inference | Continuous |

| ENCODE, Roadmap | Epigenetic chromatin states | Filter for functional lncRNA promoters | As available |

Experimental Protocol: Validation of AI-Predicted ceRNA Axis

This protocol details the functional validation of a specific AI-predicted lncRNA-miRNA-mRNA axis in a cellular model.

A. Materials: The Scientist's Toolkit

| Research Reagent / Solution | Function in Protocol |

|---|---|

| Lipofectamine 3000 | Transfection reagent for oligonucleotides. |

| LNA™ GapmeRs (Antisense Oligos) | For efficient and specific knockdown of nuclear lncRNA. |

| miRNA Mimics & Inhibitors | To ectopically increase or decrease specific miRNA activity. |

| Dual-Luciferase Reporter Assay System | To test direct miRNA binding to wild-type/mutant lncRNA or mRNA 3'UTR. |

| qPCR Assays (TaqMan) | For quantitative measurement of lncRNA, miRNA, and mRNA levels. |

| RIPA Lysis Buffer | For total protein extraction for downstream western blot. |

| CCK-8 Cell Viability Assay | To assess phenotypic impact of network perturbation. |

B. Step-by-Step Methodology

Step 1: In Silico Prediction & Prioritization

- Input matched transcriptomic datasets (e.g., tumor/normal) into a pre-trained GNN model (e.g., ceNPN).

- Extract top-ranked candidate networks based on correlation, regulatory potential score, and association with clinical phenotype.

- Prioritize one axis (e.g., LINC01234 / miR-567 / MYC mRNA) for validation.

Step 2: Functional Perturbation in Cell Culture

- Culture relevant cell line (e.g., HeLa, MCF-7).

- Transfect cells in separate wells using:

- Condition A: Negative control siRNA.

- Condition B: LINC01234 GapmeR.

- Condition C: miR-567 mimic.

- Condition D: miR-567 inhibitor.

- Condition E: Co-transfection of LINC01234 GapmeR + miR-567 inhibitor.

- Harvest cells 48-72 hours post-transfection for analysis.

Step 3: Molecular Validation (qPCR & Western Blot)

- Isolate total RNA and protein from all conditions.

- Perform qPCR to quantify:

- LINC01234 levels (confirm knockdown).

- Mature miR-567 levels (confirm mimic/inhibitor efficacy).

- MYC mRNA levels. Expected Result: MYC mRNA should decrease with the miR-567 mimic and also with LINC01234 knockdown, because removing the sponge frees miR-567 to repress MYC; the knockdown effect should be rescued by co-transfection with the miR-567 inhibitor.

- Perform Western Blot for MYC protein to confirm changes at the functional level.
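
The protocol does not specify how qPCR data are quantified. One common option is the 2^-ΔΔCt method, sketched below on the assumption of roughly 100% amplification efficiency and a stable reference gene. The function name and the Ct values are hypothetical, chosen only to make the arithmetic easy to follow.

```python
def relative_expression(ct_target_treated, ct_ref_treated,
                        ct_target_control, ct_ref_control):
    """Fold change of a target in treated vs. control cells by 2^-ddCt.

    Assumes ~100% primer efficiency and a reference gene that does not
    respond to the treatment.
    """
    delta_treated = ct_target_treated - ct_ref_treated   # dCt in treated condition
    delta_control = ct_target_control - ct_ref_control   # dCt in control condition
    return 2.0 ** -(delta_treated - delta_control)       # 2^-ddCt


# Toy Ct values: target Ct rises by 2 cycles after knockdown, reference unchanged.
fold = relative_expression(
    ct_target_treated=26.0, ct_ref_treated=18.0,
    ct_target_control=24.0, ct_ref_control=18.0,
)
knockdown_percent = (1.0 - fold) * 100.0
print(f"fold change = {fold:.2f}, knockdown = {knockdown_percent:.0f}%")
```

The same function applies to LINC01234, mature miR-567, and MYC mRNA, provided each is normalized to a reference appropriate for its RNA class.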

Step 4: Direct Interaction Validation (Luciferase Assay)

- Clone wild-type (WT) and miRNA-response-element (MRE)-mutated fragments of LINC01234 and the MYC 3'UTR into a psiCHECK-2 luciferase reporter vector.

- Co-transfect HEK293T cells with:

- Either WT or Mutant reporter vector.

- Either miR-567 mimic or negative control.

- Measure Renilla and Firefly luciferase activity 24 h later and report the Renilla/Firefly ratio. Expected Result: miR-567 mimic should reduce normalized luciferase activity only for the WT reporters, indicating specific binding.
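
A minimal sketch of the luciferase readout analysis follows. It assumes the usual psiCHECK-2 layout, in which the cloned fragment sits downstream of Renilla luciferase and Firefly serves as the internal transfection control. The helper names and all luminescence readings are hypothetical.

```python
from statistics import mean


def normalized_activity(renilla, firefly):
    """Per-well Renilla/Firefly ratio (reporter signal over internal control)."""
    return [r / f for r, f in zip(renilla, firefly)]


def relative_to_control(mimic_ratios, control_ratios):
    """Mean normalized activity with mimic, relative to negative control."""
    return mean(mimic_ratios) / mean(control_ratios)


# Toy triplicate readings (arbitrary luminescence units), not measured data.
firefly = [500, 550, 450]
wt_control = normalized_activity([1000, 1100, 900], firefly)
wt_mimic = normalized_activity([500, 550, 450], firefly)
mut_control = normalized_activity([1000, 1100, 900], firefly)
mut_mimic = normalized_activity([980, 1078, 882], firefly)

wt_effect = relative_to_control(wt_mimic, wt_control)
mut_effect = relative_to_control(mut_mimic, mut_control)
print(f"WT reporter:     {wt_effect:.2f} of control")
print(f"Mutant reporter: {mut_effect:.2f} of control")
```

A clear drop for the WT reporter with no comparable drop for the MRE-mutant reporter is the pattern that supports direct, site-specific binding; a statistical test across biological replicates would still be needed.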

Step 5: Phenotypic Assay

- Seed cells transfected as in Step 2 into 96-well plates.

- At 0, 24, 48, and 72 hours, add CCK-8 reagent and measure absorbance at 450 nm to assess proliferation changes resulting from network perturbation.

AI & Experimental Workflow Visualizations

Diagram 1: AI to bench workflow for ceRNA analysis.

Diagram 2: Functional ceRNA network mechanism & intervention.

Application Notes

This document provides a framework for integrating chromatin accessibility (ATAC-seq), non-coding RNA (e.g., miRNA, lncRNA), and transcriptomic (RNA-seq) data to construct regulatory networks. This integrated approach, central to an AI-assisted analysis thesis, moves beyond single-omics correlations to infer causal regulatory hierarchies, identifying master regulators in disease states like oncology or neurodegeneration.

Core Application: Identifying convergent multi-omics signatures for biomarker discovery and therapeutic target validation. For instance, an oncogenic locus may show open chromatin (epigenetic), overexpression of a resident lncRNA (ncRNA), and concomitant upregulation of a proximal mRNA (gene expression). AI/ML models, such as multi-modal deep learning, are trained on these layered datasets to predict novel driver events and patient stratification patterns.

Key Insights:

- Concordant Signals: A transcriptionally active region typically exhibits open chromatin, enhancer-associated ncRNA transcription, and high mRNA output.

- Discordant Signals (Regulatory Potential): Open chromatin with low mRNA expression may indicate a silenced but primed state, often mediated by repressive ncRNAs (e.g., Xist, certain miRNAs).

- AI Integration: Neural networks can weight these concordant/discordant signals from disparate omics layers to score the functional impact of non-coding genetic variants.

Table 1: Representative Multi-Omics Signatures and Interpretations

| Epigenetic Signal (ATAC-seq/ChIP-seq) | ncRNA Signal (RNA-seq/smallRNA-seq) | Gene Expression (RNA-seq) | Integrated Interpretation |

|---|---|---|---|

| Peak at gene promoter | High lncRNA expression from enhancer | High mRNA expression | Active gene transcription; lncRNA may be enhancer RNA (eRNA). |

| Peak at distal enhancer | High miRNA expression | Low mRNA of predicted target | Potential miRNA-mediated repression of a target gene. |

| Loss of peak (closed chromatin) | Low expression of activating lncRNA | Low mRNA expression | Silenced or inactivated genomic locus. |

| Peak at novel intergenic region | Novel unannotated transcript | N/A | Discovery of novel regulatory element or non-coding gene. |
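
As a rough illustration, the rows of Table 1 can be encoded as a lookup that maps a discretized three-layer signature to its interpretation. The category labels below are hypothetical shorthand for the table cells; real data would first need thresholds to call a peak "present" or an expression level "high".

```python
# Keys are (epigenetic signal, ncRNA signal, gene expression); labels are
# illustrative shorthand for the cells of Table 1.
SIGNATURES = {
    ("promoter_peak", "high_lncRNA", "high_mRNA"):
        "Active gene transcription; lncRNA may be enhancer RNA (eRNA).",
    ("distal_enhancer_peak", "high_miRNA", "low_target_mRNA"):
        "Potential miRNA-mediated repression of a target gene.",
    ("no_peak", "low_activating_lncRNA", "low_mRNA"):
        "Silenced or inactivated genomic locus.",
    ("novel_intergenic_peak", "novel_transcript", None):
        "Discovery of novel regulatory element or non-coding gene.",
}

FALLBACK = "No matching signature in Table 1; inspect manually."


def interpret(epigenetic, ncrna, expression):
    """Return the Table 1 interpretation for a discretized signature."""
    return SIGNATURES.get((epigenetic, ncrna, expression), FALLBACK)


print(interpret("distal_enhancer_peak", "high_miRNA", "low_target_mRNA"))
print(interpret("promoter_peak", "high_miRNA", "high_mRNA"))
```

A rule table like this is only a starting point for annotation; the multi-modal models described above learn continuous weightings rather than fixed categories.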

Protocols

Protocol 1: Concurrent Multi-Omics Profiling from a Single Biological Sample

Objective: To generate matched epigenetic, ncRNA, and total RNA datasets from a limited sample (e.g., patient biopsy, sorted cells), minimizing batch effects.

Materials:

- Nuclei Isolation Buffer: (10 mM Tris-HCl pH 7.4, 10 mM NaCl, 3 mM MgCl2, 0.1% IGEPAL CA-630). For extracting intact nuclei for ATAC-seq and nuclear RNA.

- Tri-Reagent (or equivalent): For simultaneous isolation of total RNA, small RNAs, and DNA/proteins.

- Tagment DNA TDE1 Enzyme (Illumina) or Tn5 Transposase: For ATAC-seq library construction.

- RNA Stabilization Reagent (e.g., RNAlater): For immediate stabilization of RNA profiles.

- Size-Selection Beads (SPRI): For library clean-up and selection of appropriate fragment sizes (e.g., miRNA vs. transcriptome).

Procedure:

- Sample Lysis & Fractionation: Homogenize tissue/cells in Tri-Reagent. Separate organic and aqueous phases per manufacturer's instructions. RNA is in the aqueous phase. DNA and proteins are in the interphase/organic phase.

- Epigenetic (ATAC-seq) Library Prep from Nuclei: a. From a parallel aliquot of fresh sample, isolate intact nuclei using Nuclei Isolation Buffer. b. Perform tagmentation on 50,000 nuclei using the Tagment DNA TDE1 Enzyme (37°C, 30 min). c. Purify tagmented DNA and amplify with indexed primers for 8-12 PCR cycles. Clean up with SPRI beads.

- ncRNA & Transcriptome Library Prep: a. Precipitate RNA from the aqueous phase (Step 1). b. Perform rRNA depletion for the total RNA fraction to enrich for mRNA and lncRNA. c. For the small RNA fraction, use a size-selection protocol (<200 nt) followed by adaptor ligation. d. Construct strand-specific libraries for both fractions.

- Sequencing: Pool libraries and sequence on an appropriate platform (e.g., Illumina NovaSeq). Recommended depths: ATAC-seq (50M reads), total RNA-seq (30M reads), small RNA-seq (10M reads).

Protocol 2: AI-Assisted Integrative Analysis Workflow

Objective: To computationally integrate the three data types using a supervised deep learning model to predict gene expression levels from epigenetic and ncRNA features.

Materials/Software:

- Compute Infrastructure: High-performance computing cluster or cloud (Google Cloud, AWS) with GPU acceleration.

- Containerization: Docker/Singularity images for reproducibility.

- Key Software Packages: Scanpy (for ATAC-seq), STAR & featureCounts (for RNA-seq), PyTorch or TensorFlow for model building, MultiOmicsGraph for integration.

Procedure:

- Data Preprocessing & Alignment: a. ATAC-seq: Align reads to reference genome (hg38). Call peaks using MACS2. Create a cell (or sample) x peak matrix. b. RNA-seq: Align reads using STAR. Quantify gene/transcript levels with Salmon. Create separate matrices for mRNA and ncRNA (lncRNA, miRNA).

- Dimensionality Reduction & Feature Linking: a. Reduce each matrix using PCA (for mRNA) or LSI (for ATAC-seq). b. Link Regulatory Elements to Genes: Use a distance-based approach (e.g., +/- 500kb from TSS) or chromatin interaction data (Hi-C) to assign ATAC-seq peaks to target genes. Assign miRNAs to genes via TargetScan/miRanda databases.

- Multi-Input Neural Network Model Training: a. Define model architecture with three input branches: (i) Epigenetic features (peak intensities), (ii) ncRNA features (expression levels), (iii) Context features (distance, conservation). b. Train the model to predict normalized mRNA expression levels (output). Use 80% of samples for training, 20% for validation. c. Apply SHAP (SHapley Additive exPlanations) analysis to interpret feature importance from the integrated model, identifying key predictive peaks/ncRNAs.
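
The distance-based linking rule from the procedure (peaks within +/- 500 kb of a TSS) can be sketched in plain Python as below. The function name, the use of the peak midpoint as the reference position, and all coordinates are assumptions made for illustration; gene names and positions are placeholders, not real annotations.

```python
from collections import defaultdict


def link_peaks_to_genes(peaks, tss, window=500_000):
    """Assign each peak to every gene whose TSS lies within `window` bp.

    peaks: list of (chrom, start, end) tuples
    tss:   dict mapping gene name -> (chrom, tss_position)
    Returns a dict: gene -> list of (chrom, start, end, signed_distance_to_tss).
    """
    links = defaultdict(list)
    for chrom, start, end in peaks:
        center = (start + end) // 2  # peak midpoint as the reference position
        for gene, (g_chrom, g_pos) in tss.items():
            if g_chrom == chrom and abs(center - g_pos) <= window:
                links[gene].append((chrom, start, end, center - g_pos))
    return dict(links)


# Hypothetical coordinates for illustration only.
peaks = [
    ("chr8", 127_700_000, 127_700_500),  # ~35 kb from GENE_A TSS: linked
    ("chr8", 129_000_000, 129_000_400),  # >1 Mb away: not linked
    ("chr1", 1_000_000, 1_000_300),      # ~1 Mb from GENE_B TSS: not linked
]
tss = {"GENE_A": ("chr8", 127_735_000), "GENE_B": ("chr1", 2_000_000)}

links = link_peaks_to_genes(peaks, tss)
print(links)
```

This nested loop is quadratic in peaks and genes, so genome-scale data would normally use a sorted-interval or interval-tree approach instead. The signed distance can also be passed to the model as one of the context features.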

Diagrams

Workflow: Multi-Omics Data Generation & AI Analysis

Regulatory Network Inferred from Multi-Omics Data

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for Multi-Omics Integration

| Item | Function in Multi-Omics Integration |

|---|---|

| Single-Cell Multiome Kits (e.g., 10x Genomics Multiome ATAC + GEX) | Enables simultaneous profiling of chromatin accessibility and transcriptome (including ncRNAs) from the same single cell, providing intrinsic layer matching. |

| Tn5 Transposase (Tagmentase) | The core enzyme for ATAC-seq, fragmenting accessible DNA and adding sequencing adaptors in one step. Critical for low-input epigenomic profiling. |

| Ribonuclease Inhibitors & RNAlater | Preserves the native RNA landscape, including labile ncRNAs like eRNAs and miRNAs, during sample processing for accurate downstream correlation. |

| Methylated DNA Immunoprecipitation (MeDIP) Kits | For capturing DNA methylation data, another key epigenetic layer that can be integrated with ATAC-seq and RNA data. |

| Crosslinking Chromatin Immunoprecipitation (ChIP) Kits | For targeted profiling of histone modifications (H3K27ac, H3K4me3) to annotate active enhancers/promoters identified in ATAC-seq peaks. |

| Strand-Specific Total RNA Library Prep Kits | Essential for accurately distinguishing sense from antisense transcription, crucial for lncRNA and enhancer RNA annotation. |

| Small RNA Size-Selection Beads (SPRI) | To cleanly isolate the <200 nt fraction containing miRNAs, piRNAs, and other small regulatory RNAs from total RNA. |

| Synthetic Spike-In Controls (e.g., from SIRV, ERCC) | Added to samples before library prep to normalize technical variation across different omics assays and batches, improving integration accuracy. |

Navigating Pitfalls: Solving Common Challenges in AI-Epigenomics Analysis

The integration of Artificial Intelligence (AI) in biomedical research, particularly for analyzing epigenetic modifications (e.g., DNA methylation, histone marks) and non-coding RNA (ncRNA) expression profiles, presents a dual challenge of data scarcity (limited patient samples, costly sequencing) and high dimensionality (thousands to millions of genomic features per sample). This article, framed within a thesis on AI-assisted epigenetic and ncRNA analysis, details practical techniques and protocols to address these issues, enabling robust biomarker discovery and therapeutic target identification in drug development.

Table 1: Dimensionality Challenge in Common Assays

| Assay Type | Typical Features per Sample | Common Sample Size (N) | Feature-to-Sample Ratio |

|---|---|---|---|

| Whole-Genome Bisulfite Seq (WGBS) | ~28 Million CpG sites | 50-100 | ~280,000:1 to 560,000:1 |

| miRNA-Seq (e.g., miRBase v22) | 2,654 mature miRNAs | 30-200 | ~13:1 to 88:1 |

| ChIP-Seq (Transcription Factors) | 50,000 - 200,000 peaks | 20-50 | ~1,000:1 to 10,000:1 |

| Single-cell ATAC-Seq | 50,000 - 200,000 accessible regions | 1,000-10,000 cells | ~5:1 to 200:1 |
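
The feature-to-sample ratios in Table 1 follow from dividing the feature count by the sample size at each end of the stated ranges. A short sketch of that arithmetic, using only the numbers in the table (the helper name is hypothetical):

```python
def ratio_range(features_min, features_max, n_min, n_max):
    """Lowest and highest feature-to-sample ratio for the given ranges."""
    lowest = features_min / n_max    # fewest features, most samples
    highest = features_max / n_min   # most features, fewest samples
    return lowest, highest


assays = {
    "WGBS": (28_000_000, 28_000_000, 50, 100),
    "miRNA-Seq": (2_654, 2_654, 30, 200),
    "ChIP-Seq (TF)": (50_000, 200_000, 20, 50),
    "scATAC-Seq": (50_000, 200_000, 1_000, 10_000),
}

ratios = {name: ratio_range(*args) for name, args in assays.items()}
for name, (low, high) in ratios.items():
    print(f"{name}: ~{low:,.0f}:1 to {high:,.0f}:1")
```

For single-cell ATAC-seq the "samples" are cells, which is why its ratio looks far more favorable than bulk assays even though the number of donors may be just as small.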

Table 2: Impact of Dimensionality Reduction Techniques on Data Structure

| Technique Category | Primary Goal | Typical Reduction (Input -> Output) | Suitability for Small N |

|---|---|---|---|

| Feature Selection (Filter) | Remove low-variance/noise | 50,000 -> 5,000 features | High |

| Feature Extraction (PCA) | Create uncorrelated components | 5,000 -> 50 components | Medium |

| Autoencoders (Non-linear) | Learn compressed representation | 1,000,000 -> 1,000 latent vars | Low (requires large N) |

| Manifold Learning (UMAP/t-SNE) | Preserve local structure for viz | 50,000 -> 2 dimensions | Medium |

Application Notes & Detailed Protocols

Protocol 3.1: Variance-Stabilizing and Low-Variance Filtering for ncRNA-seq Data

Aim: Reduce feature space by removing uninformative miRNAs/lncRNAs prior to differential expression analysis.

Materials: Processed count matrix (e.g., from featureCounts), R/Python environment.

Procedure:

- Calculate Metrics: For each ncRNA feature, compute:

- Mean expression across all samples.

- Variance and/or coefficient of variation (CV).

- Percentage of zeros.

- Apply Filters: Set empirical thresholds (e.g., retain features with mean count > 5, CV > 0.1, and expressed in >20% of samples).

- Validate: Assess the impact by comparing the variance explained in PCA pre- and post-filtering. Retain a log of removed features. Note: This protocol is critical for scarce data to prevent overfitting in downstream classifiers.
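
The filtering steps above can be sketched with NumPy as follows. The thresholds are the example values from the procedure; the function name and the toy count matrix are hypothetical, and the sketch assumes features in rows and samples in columns.

```python
import numpy as np


def filter_features(counts, min_mean=5.0, min_cv=0.1, min_expressed_frac=0.2):
    """Boolean mask of features passing mean, CV, and detection-rate filters.

    counts: 2-D array-like, rows = ncRNA features, columns = samples.
    """
    counts = np.asarray(counts, dtype=float)
    mean = counts.mean(axis=1)
    sd = counts.std(axis=1, ddof=1)  # sample standard deviation
    # CV = sd / mean; defined as 0 where the mean is 0 to avoid division by zero.
    cv = np.divide(sd, mean, out=np.zeros_like(mean), where=mean > 0)
    expressed_frac = (counts > 0).mean(axis=1)  # fraction of samples with non-zero counts
    return (mean > min_mean) & (cv > min_cv) & (expressed_frac > min_expressed_frac)


# Toy matrix: 4 features x 5 samples, for illustration only.
toy_counts = [
    [10, 20, 15, 30, 25],       # passes all filters
    [0, 0, 0, 0, 60],           # detected in only 20% of samples
    [100, 100, 100, 100, 100],  # zero variance (CV = 0)
    [1, 2, 0, 1, 1],            # mean count too low
]

keep = filter_features(toy_counts)
removed = [i for i, k in enumerate(keep) if not k]  # log of removed feature indices
print("keep mask:", keep.tolist())
print("removed feature rows:", removed)
```

Note that CV computed on raw counts depends on sequencing depth, so in practice the metrics are usually calculated on normalized or variance-stabilized values.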

Protocol 3.2: Principal Component Analysis (PCA) for Methylation Array Data

Aim: Extract major sources of variation from high-dimensional β-value matrices (e.g., Illumina EPIC array: ~850k CpG sites).

Materials: Beta-value matrix (rows=CpGs, columns=samples), cleaned of NA values and batch-corrected.

Procedure:

- Selection: Apply Protocol 3.1 to remove low-variance CpG probes (e.g., those with an interquartile range < 0.05).

- Standardization: Center and scale each remaining probe to mean=0, variance=1 (scale. = TRUE in R's prcomp, applied to the transposed matrix so that samples are rows).

- Decomposition: Perform singular value decomposition (SVD) on the standardized matrix.

- Component Selection: Use the elbow method on a scree plot and calculate cumulative variance explained. Retain the smallest set of components that cumulatively explains >80-90% of variance.

- Interpretation: Correlate top loading probes for key PCs with genomic annotations (e.g., promoter, enhancer) to infer biological drivers.
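
The standardization, decomposition, and component-selection steps can be sketched with NumPy as below. The protocol itself refers to R's prcomp, so this is an equivalent illustration rather than the author's code. The function name and the random matrix standing in for β-values are hypothetical, and the sketch assumes zero-variance probes were already removed in the selection step.

```python
import numpy as np


def pca_svd(beta, var_target=0.9):
    """PCA of a probes x samples matrix via SVD.

    Returns sample scores, probe loadings, per-component explained variance
    ratio, and the number of components k needed to reach `var_target`.
    """
    x = np.asarray(beta, dtype=float).T           # samples x probes
    x = x - x.mean(axis=0)                        # center each probe
    x = x / x.std(axis=0, ddof=1)                 # scale each probe to unit variance
    u, s, vt = np.linalg.svd(x, full_matrices=False)
    explained = s**2 / np.sum(s**2)               # variance ratio per component
    cumulative = np.cumsum(explained)
    # Smallest k whose cumulative explained variance reaches the target.
    k = min(int(np.searchsorted(cumulative, var_target)) + 1, len(s))
    scores = u[:, :k] * s[:k]                     # sample coordinates on the first k PCs
    loadings = vt[:k].T                           # probe weights for the first k PCs
    return scores, loadings, explained, k


# Random stand-in for a filtered beta-value matrix: 200 probes x 12 samples.
rng = np.random.default_rng(0)
beta = rng.random((200, 12))

scores, loadings, explained, k = pca_svd(beta, var_target=0.9)
print("components retained:", k)
print("scores shape:", scores.shape, "loadings shape:", loadings.shape)
```

The probes with the largest absolute values in each column of `loadings` are the "top loading probes" that the interpretation step maps to genomic annotations.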

Protocol 3.3: Autoencoder-Based Non-Linear Reduction for Integrated Multi-Omics