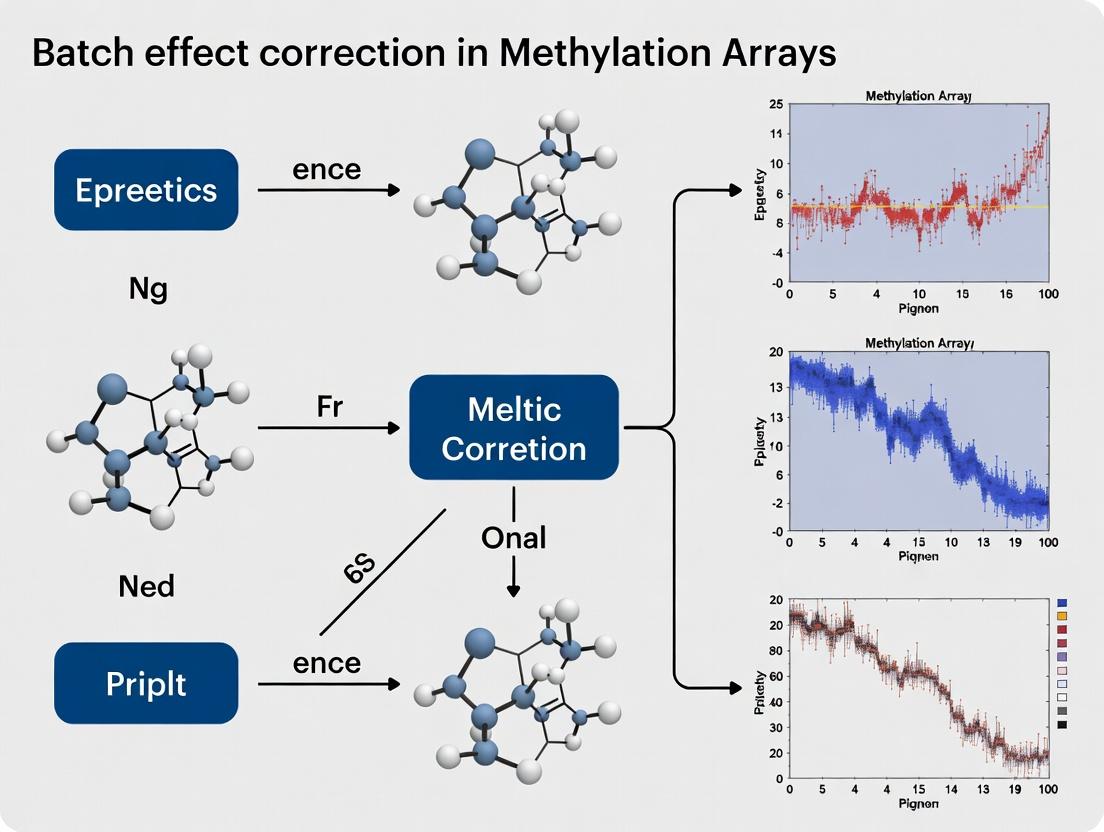

Batch Effect Correction in Methylation Arrays: A Comprehensive Guide for Accurate Epigenomic Analysis

This article provides a complete roadmap for researchers and bioinformaticians to identify, correct, and validate batch effects in DNA methylation array data.

Abstract

This article provides a complete roadmap for researchers and bioinformaticians to identify, correct, and validate batch effects in DNA methylation array data. We begin by defining batch effects and their sources in Illumina Infinium platforms (EPIC, 450K). We then detail current methodological approaches, from ComBat and SVA to Reference-Based methods, and their implementation in R/Bioconductor. The guide addresses common troubleshooting scenarios, optimization strategies for complex designs, and rigorous validation techniques. Finally, we compare leading tools (minfi, sva, limma, meffil) and their performance. This synthesis empowers researchers to produce robust, reproducible methylation data for biomarker discovery and translational research.

What Are Batch Effects in Methylation Data? Sources, Detection, and Impact on Biomarker Discovery

Troubleshooting Guides & FAQs

Q1: After processing multiple Infinium MethylationEPIC arrays over several months, my PCA shows a strong separation by processing date, not by phenotype. Is this a batch effect?

A1: Yes, this is a classic technical batch effect. Variation introduced by processing date (reagent lots, technician, humidity) is often stronger than true biological signal. Immediate steps:

- Visualize: Generate PCA plots colored by processing date, array slide, row, and position.

- Quantify: Use the `sva` package in R to estimate surrogate variables, or calculate the median pairwise distance between samples from different batches.

- Act: Apply a correction method like ComBat or limma's `removeBatchEffect` before downstream differential methylation analysis.

Q2: My negative control probes show high intensity in some samples from one batch. What does this indicate?

A2: Elevated negative control probe intensity suggests a background fluorescence issue specific to that batch, often due to:

- Hybridization buffer problems (contamination, improper preparation).

- Staining reagent degradation.

- Scanner calibration drift for that specific run.

Troubleshooting Protocol:

- Re-examine the raw IDAT files for the affected batch using `minfi::getQC`.

- Compare the mean intensities of negative control probes (Type I and II) across batches in a table.

- If the issue is isolated, consider reprocessing the affected samples or applying background correction specifically tuned for that batch (e.g., using `noob` normalization with batch-specific parameters).

Q3: How can I distinguish a biological batch effect (e.g., age, cell type heterogeneity) from a technical one?

A3: Correlation with known covariates is key.

- Regress out known biology: For age, regress out methylation age (calculated via Horvath's clock) and see if batch signal remains.

- Use reference datasets: If available, compare with public datasets processed in other labs. Persistent sample clustering by your lab ID suggests technical bias.

- Cell type deconvolution: Use a tool like `EpiDISH` to estimate cell proportions. If your "batch" variable correlates strongly with a specific cell type proportion, it may be a biological confounder rather than a technical artifact. See Table 1.

Q4: ComBat corrected my data, but now some CpGs show negative beta values or values >1. Is this expected?

A4: These values are not valid, because methylation beta values are bounded between 0 and 1. ComBat, in its default empirical Bayes mode, assumes a normal distribution, so when it is applied directly to beta values it can over-correct and produce non-physical values, particularly for probes near 0 or 1.

- Solution: Use the `ComBat` function with the argument `prior.plots=TRUE` to check the prior fit. Consider using the `mean.only=TRUE` option if the prior is poorly estimated. Alternatively, run ComBat on M-values and back-transform to beta values, or use a model-based method designed for proportional data, such as a Beta regression-based correction.

Key Experimental Protocols for Batch Effect Investigation

Protocol 1: Systematic Monitoring of Technical Variation Using Control Probes

- Objective: Quantify batch-specific technical noise.

- Steps:

  - Extract data for all control probes (staining, hybridization, extension, specificity, non-polymorphic) from IDATs using `minfi::getProbeInfo(type = "Control")`.

  - For each control probe type, calculate the median intensity per sample.

  - Perform ANOVA with "Batch" as the main factor. A significant p-value indicates batch-dependent performance for that control metric.

  - Create a table of control probe metrics by batch (see Table 2).
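The ANOVA and summary-table steps above can be sketched in base R. This is only an illustration on simulated numbers: the data frame `ctrl`, its column names, and the intensity values are assumptions made for the example, not output from real IDAT files.

```r
# Simulated example: one row per sample with its median negative-control
# intensity and a batch label. All values are made up for illustration.
set.seed(1)
ctrl <- data.frame(
  batch = factor(rep(c("Batch202301", "Batch202302", "Batch202303"), each = 12)),
  neg_ctrl_median = c(rnorm(12, 410, 25), rnorm(12, 650, 85), rnorm(12, 430, 30))
)

# One-way ANOVA with batch as the main factor
fit <- aov(neg_ctrl_median ~ batch, data = ctrl)
p_batch <- summary(fit)[[1]][["Pr(>F)"]][1]

# Per-batch summary: median intensity and coefficient of variation (%)
batch_summary <- aggregate(
  neg_ctrl_median ~ batch, data = ctrl,
  FUN = function(x) c(median = median(x), cv_pct = 100 * sd(x) / mean(x))
)

print(batch_summary)
print(p_batch)
```

With real data, the same two calls would be repeated for each control probe type, and the p-values adjusted for the number of control metrics tested.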

Protocol 2: Experimental Design for Batch Effect Minimization

- Objective: Minimize batch effects at the design stage, before samples are processed.

- Steps:

  - Randomize: Assign cases and controls equally across all batches (arrays, plates, processing days).

  - Balance: Ensure distributions of key biological covariates (e.g., sex, age) are similar across batches.

  - Include Replicates: Split a small number of technical replicate samples (e.g., a pooled reference) across all batches to directly measure batch variation.

  - Record Metadata: Meticulously log all potential batch variables (detailed in Scientist's Toolkit).

Data Presentation

Table 1: Distinguishing Technical vs. Biological Variation Sources

| Variation Source | Example in Methylation Studies | Typical Signal in PCA | Correlation with Known Covariates | Correctable via Statistical Methods? |

|---|---|---|---|---|

| Technical Batch Effect | Array processing date, Scanner ID, Technician | Strong separation by batch, often PC1/PC2 | Low correlation with biology (age, sex, phenotype) | Yes (e.g., ComBat, SVA) |

| Biological "Batch" | Different cell type proportions, Collection site | May separate on later PCs, more diffuse clustering | High correlation with measured biology (e.g., neutrophil count) | Must be modeled, not "removed" (include as covariate) |

| Environmental Latent Factor | Unknown clinical sub-phenotype, unmeasured exposure | Unclear separation, increases overall variance | May be uncorrelated with recorded metadata | Can be estimated (e.g., RUV, PEER factors) |

Table 2: Example Control Probe Analysis for Batch Diagnostics

| Batch ID | Sample Count | Median Neg. Ctrl Intensity (AU) | Staining Ctrl Variation (CV%) | Bisulfite Conversion I Ctrl (Beta Value) |

|---|---|---|---|---|

| Batch202301 | 12 | 412 ± 24 | 3.2 | 0.95 ± 0.01 |

| Batch202302 | 12 | 648 ± 87 | 8.5 | 0.92 ± 0.04 |

| Batch202303 | 12 | 430 ± 31 | 3.5 | 0.94 ± 0.02 |

AU: Arbitrary Fluorescence Units; CV: Coefficient of Variation.

Mandatory Visualizations

Flowchart: Batch Effect Diagnosis & Correction

Workflow: From Sample to Analysis-Ready Data

The Scientist's Toolkit: Essential Reagents & Materials

| Item | Function in Methylation Array Study | Critical for Batch Effect Mitigation? |

|---|---|---|

| Infinium MethylationEPIC Kit | Contains all array-specific reagents for hybridization, extension, staining. | YES. Use kits from the same manufacturing lot for a study to avoid major reagent batch effects. |

| Bisulfite Conversion Kit | Converts unmethylated cytosines to uracil while leaving methylated cytosines intact. | YES. Conversion efficiency must be consistent. Monitor via control probes. Use single kit lot. |

| Universal BeadChips | The physical microarray. | YES. Balance cases/controls across chips and within chips (rows/columns). |

| Twinjector or Multidispenser | For precise reagent delivery during processing. | YES. Calibration variance can cause batch effects. Use same instrument or validate across them. |

| iScan System | Scans the fluorescent signals from the array. | YES. Scanner calibration can drift. Regular maintenance and using the same scanner is ideal. |

| Pooled Reference DNA | A homogenized DNA sample from multiple sources. | YES. Run as inter-batch technical replicates to directly quantify batch variation. |

| Detailed Lab Metadata Log | Records technician, date, time, equipment ID, reagent lot numbers for every step. | CRITICAL. Essential for diagnosing and modeling batch effects. |

Troubleshooting Guides & FAQs

Q1: How can I determine if my Infinium MethylationEPIC array data is affected by chip-specific batch effects?

A: Run a preliminary Principal Component Analysis (PCA) on the raw beta values, colored by the Chip_ID variable. A strong clustering of samples by chip indicates a chip effect. Quantify this by estimating the proportion of variance explained by the Chip_ID factor, for example by regressing the leading principal components on Chip_ID or by using the variancePartition package. As a rule of thumb, if Chip_ID explains more than 5-10% of total variance, correction is warranted. The experimental protocol involves: 1) Loading IDAT files into R using minfi. 2) Extracting beta values with getBeta(preprocessNoob()). 3) Performing PCA on the 10,000 most variable CpG sites. 4) Calculating variance contributions from a design built with model.matrix(); sva::sva can additionally estimate surrogate variables for unrecorded batch factors.
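A minimal base-R sketch of steps 3 and 4 follows. It assumes a simulated beta matrix with an artificial chip shift, uses 1,000 rather than 10,000 probes to keep the example small, and measures chip association as the R-squared from regressing each leading PC on `chip_id`. The object names are illustrative.

```r
set.seed(42)
n_probes <- 2000
n_samples <- 24
chip_id <- factor(rep(c("Chip1", "Chip2", "Chip3"), each = 8))

# Simulated beta matrix (probes x samples): per-probe baseline plus small noise
baseline <- runif(n_probes, 0.1, 0.9)
beta <- baseline + matrix(rnorm(n_probes * n_samples, sd = 0.03), nrow = n_probes)

# Add an artificial chip effect to the first 300 probes, then clamp to [0, 1]
chip_shift <- c(0, 0.1, -0.1)[as.integer(chip_id)]
beta[1:300, ] <- sweep(beta[1:300, ], 2, chip_shift, "+")
beta <- pmin(pmax(beta, 0), 1)

# PCA on the most variable probes (samples as rows)
probe_sd <- apply(beta, 1, sd)
top <- order(probe_sd, decreasing = TRUE)[1:1000]
pca <- prcomp(t(beta[top, ]), center = TRUE, scale. = FALSE)
var_explained <- pca$sdev^2 / sum(pca$sdev^2)

# Share of each leading PC associated with chip (R^2 from a linear model)
r2_chip <- sapply(1:5, function(i) summary(lm(pca$x[, i] ~ chip_id))$r.squared)
pc_table <- data.frame(PC = 1:5,
                       var_explained = round(var_explained[1:5], 3),
                       r2_chip = round(r2_chip, 3))
print(pc_table)
```

A PC that explains a large share of variance and also has a high R-squared against chip is the pattern described in the answer above.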

Q2: We processed samples across multiple 96-well plates. What is the best method to diagnose a plate-based batch effect?

A: Use boxplots of the median intensity values (both methylated and unmethylated channels) grouped by Plate_ID. A systematic shift in median intensities per plate is diagnostic. Additionally, perform a batch-level Differential Methylation (DM) analysis using limma, treating Plate as the sole covariate. A high number of statistically significant CpGs (FDR < 0.05) for this artificial factor confirms the effect.

Q3: Samples processed on different dates show differential variability. How do I distinguish this from biological signal?

A: Processing date (Process_Date) effects often manifest as increased within-group variability. Analyze this by: 1) Calculating the standard deviation of beta values for technical replicate controls (if available) across dates. 2) Applying the meanSdPlot function from the vsn package to visualize the relationship between mean methylation and standard deviation, stratified by date. Date effects show as distinct trend lines. 3) Applying Levene's test for homogeneity of variances across dates.

Q4: What is a "row effect" on an Illumina chip, and how do I test for it?

A: A row effect is a systematic positional bias across the rows of the physical chip. To test, assign each sample a Row coordinate (e.g., 1-8). Calculate the average beta value per row across all samples for a subset of invariant control probes. Use ANOVA to test for row-wise differences. Visually, a heatmap of control probe intensities ordered by row will show striping patterns.

Q5: What is the recommended order of operations for correcting these multiple, nested batch effects?

A: The consensus is to apply background and dye-bias correction (e.g., NOOB) first, followed by probe-type normalization (e.g., BMIQ or SWAN); both operate within each array. Then, apply a model-based batch effect correction tool like ComBat (from the sva package) or removeBatchEffect (from limma), accounting for all relevant technical factors (Chip, Row, Plate, Processing Date) in a single model. Note that ComBat accepts a single batch variable, so multiple factors must first be combined into one batch label. Never correct for batches sequentially.

Data Tables

Table 1: Common Batch Sources and Their Diagnostic Signatures

| Source | Primary Diagnostic | Typical Affected Metric | Common Correction Method |

|---|---|---|---|

| Chip (Array) | PCA clustering by Chip_ID | Global mean intensity, >10% variance | ComBat, Limma's removeBatchEffect |

| Plate | Boxplot shift in median intensity | Signal intensity distribution | Plate-mean centering, ComBat |

| Processing Date | Increased variance, PCA cluster by date | Probe-wise variance, dye bias | Batch-mean standardization, SVA |

| Row (Position) | ANOVA on control probes by row | Control probe intensity gradient | Within-array normalization, spatial smooth |

Table 2: Variance Explained by Batch Factors in a Representative Study

| Batch Factor | Median Variance Explained (%) (Range) | Number of Significant CpGs (FDR<0.05) |

|---|---|---|

| Processing Date | 15.2% (4.8 - 32.1) | 45,782 |

| Chip ID | 8.7% (2.1 - 18.5) | 12,450 |

| Plate ID | 6.3% (1.5 - 14.9) | 8,921 |

| Row Position | 1.8% (0.5 - 5.2) | 1,205 |

Experimental Protocols

Protocol 1: Diagnosing Batch Effects with PCA and Variance Partitioning

- Data Import: Use `minfi::read.metharray.exp` to load IDAT files and sample sheet.

- Normalization: Apply `preprocessNoob` for within-array background correction and dye bias equalization.

- Filtering: Remove probes with detection p-value > 0.01 in any sample, and cross-reactive probes.

- PCA: Extract beta matrix. Select the top 10,000 most variable CpGs (by standard deviation). Perform PCA using `prcomp()`.

- Visualization: Plot PC1 vs. PC2, coloring points by each potential batch variable (`Chip`, `Plate`, `Date`).

- Variance Estimation: Use the `variancePartition::fitExtractVarPartModel` function to quantify the percentage of variance attributable to each technical factor and biological variables of interest.
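To illustrate what the variance estimation step produces, here is a rough base-R stand-in. It is not the variancePartition method: it fits an ordinary fixed-effects linear model per probe and reports each term's share of the total sum of squares. The data are simulated, the design is balanced, and names such as `mvals` and `meta` are assumptions for the example.

```r
set.seed(7)
n <- 32
meta <- data.frame(
  Date      = factor(rep(c("D1", "D2"), each = 16)),
  Phenotype = factor(rep(c("case", "control"), times = 16))
)
n_probes <- 500
date_eff  <- ifelse(meta$Date == "D2", 0.8, 0)
pheno_eff <- ifelse(meta$Phenotype == "case", 0.3, 0)

# Simulated M-value-like matrix (probes x samples): a date effect,
# a smaller phenotype effect, and residual noise
mvals <- t(replicate(n_probes, date_eff + pheno_eff + rnorm(n, sd = 0.5)))

# Fraction of each probe's total sum of squares attributed to each model term
var_fraction <- t(apply(mvals, 1, function(y) {
  tab <- anova(lm(y ~ Date + Phenotype, data = meta))
  setNames(tab[["Sum Sq"]] / sum(tab[["Sum Sq"]]), rownames(tab))
}))

median_fraction <- apply(var_fraction, 2, median)
print(round(median_fraction, 3))
```

Because the sums of squares are sequential, the attribution depends on term order when batch and biology are not balanced; that is one reason a dedicated tool is preferable for real studies.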

Protocol 2: Implementing ComBat for Simultaneous Multi-Factor Batch Correction

- Prepare Model Matrices: Create a model matrix for your biological variables of interest (e.g., `~ Disease_Status + Age + Gender`). Create a separate variable or data frame for batches (e.g., `cbind(Chip, Plate, Process_Date)`). Some implementations require combining these into a single factor.

- Run ComBat: Use `sva::ComBat(dat = beta_matrix, batch = combined_batch_factor, mod = biological_model_matrix, par.prior = TRUE, prior.plots = FALSE)`.

- Validate: Re-run PCA on the `ComBat`-adjusted beta matrix. Clustering by batch factors should be diminished, while biological clustering should be preserved or enhanced.

- Note: For nested designs (e.g., plates within processing dates), consider using the `neuroCombat` or `Harmony` packages which handle complex designs better.
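The sketch below shows one possible way to build the single batch factor and the biological model matrix described in this protocol. The sample sheet is simulated, and `m_values` is a hypothetical probes-by-samples matrix that is not created here, so the ComBat call only runs if that object exists and the sva package is installed.

```r
# Simulated sample sheet: chips nested within processing dates
meta <- data.frame(
  Chip           = rep(c("C1", "C2", "C3", "C4"), each = 6),
  Process_Date   = rep(c("2023-01", "2023-02"), each = 12),
  Disease_Status = rep(c("case", "control"), times = 12),
  Age            = rep(c(40, 45, 50, 55, 60, 65), times = 4)
)

# Collapse the technical factors into one label per sample.
# drop = TRUE removes date/chip combinations that never occur.
combined_batch <- interaction(meta$Process_Date, meta$Chip, sep = "_", drop = TRUE)

# Biological variables to protect during correction
mod <- model.matrix(~ Disease_Status + Age, data = meta)

# Each combined batch should contain several samples and both phenotypes
print(table(combined_batch, meta$Disease_Status))

# m_values is hypothetical (probes x samples, columns in the same order as meta)
if (requireNamespace("sva", quietly = TRUE) && exists("m_values")) {
  adjusted <- sva::ComBat(dat = m_values, batch = combined_batch, mod = mod,
                          par.prior = TRUE, prior.plots = FALSE)
}
```

Checking the cross-tabulation first is worthwhile: a combined batch that contains only cases or only controls cannot be separated from the biological effect.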

Visualizations

Title: Batch Effect Diagnosis and Correction Workflow

Title: Relationship Map of Batch Effect Causes and Impacts

The Scientist's Toolkit

Table 3: Essential Research Reagents & Computational Tools for Batch Analysis

| Item | Function & Purpose |

|---|---|

| R/Bioconductor minfi Package | Primary package for importing IDAT files, performing quality control, and implementing standard normalization methods (e.g., NOOB, SWAN). |

| sva (Surrogate Variable Analysis) Package | Contains the ComBat function for empirical Bayes batch correction and tools for estimating surrogate variables for unknown batch effects. |

| limma Package | Provides the removeBatchEffect function for linear model-based adjustment and is essential for downstream differential methylation analysis. |

| Infinium MethylationEPIC v2.0 Manifest File | Provides the necessary probe annotation information, including genomic coordinates, probe type, and flags for cross-reactive or polymorphic probes. |

| Control Probe Data (from IDAT) | Used to monitor staining, hybridization, extension, and specificity steps; critical for diagnosing row, column, and chip-wide intensity artifacts. |

| Reference Samples (e.g., NA12878, 1000 Genomes) | Commercially available standardized DNA samples run across batches/plates to serve as a technical baseline for identifying and measuring batch drift. |

| variancePartition or PVCA R Package | Quantifies the percentage contribution of each batch and biological variable to total observed variance in the dataset. |

| BMIQ or ENmix Normalization Scripts | Advanced algorithms for correcting the type-I/type-II probe design bias, which can interact with batch effects. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During differential methylation analysis, my P-value histograms show a strong peak near 1, but I also have many significant hits. Could this indicate a batch effect?

A: Yes, this pattern can be a signature of a pervasive batch effect. Under the null hypothesis, p-values should be roughly uniformly distributed, so a peak near 1 together with a high number of significant hits indicates a violation of the test's assumptions. Batch effects distort the variance estimates, leading to both false positives (inflation) and false negatives (loss of power). To diagnose:

- Perform PCA on your beta/M-values and color points by batch.

- Statistically test for batch association with principal components (e.g., PERMANOVA).

- Check negative control probes (like housekeeping gene probes) for systematic differences between batches.

Q2: My SVA/ComBat-corrected data shows good batch mixing in PCA, but my validation by pyrosequencing fails. What went wrong?

A: Over-correction is a likely culprit. When using unsupervised methods like SVA, there is a risk of removing biological signal along with batch noise, especially if the biological signal of interest is weakly expressed or correlated with batch. Solution: Use a supervised or reference-based correction method. If possible, include Control Probe PCA (CPPCA) or housekeeping probes known to be invariant across your conditions as a guide. Always retain a set of biologically validated positive control loci to assess signal preservation post-correction.

Q3: After applying RUVm, my differential methylation results seem attenuated. Is this normal?

A: Partial attenuation can be expected and desirable if initial results were inflated by batch. However, excessive attenuation suggests the negative control probes used by RUVm may be correlated with your phenotype. Troubleshooting Steps:

- Re-evaluate your negative controls: Ensure the "negative control" probes or samples you provided truly have no association with the biological factor of interest.

- Tune parameters: Adjust the `k` parameter (number of factors of unwanted variation) incrementally. Use diagnostic plots (RLE plots, PCA) to find the `k` that removes batch structure without flattening biological clusters.

- Validation: Use an orthogonal method (e.g., bisulfite pyrosequencing on a subset of loci) on samples from different batches to confirm true signal remains.

Q4: I am integrating public 450K and EPIC array data. Which normalization should I apply first?

A: A two-stage correction is critical. First, handle the technical differences between the platforms, then correct for study-specific batch effects.

- Platform Harmonization: Use `minfi::preprocessFunnorm` or `wateRmelon::dasen` separately on each platform dataset. These methods include within-array normalization crucial for combining data from different array versions.

- Probe Filtering: Remove probes with design differences, cross-reactive probes, and probes containing SNPs. Use the `minfi::getAnnotation` function for updated lists.

- Batch Effect Correction: On the combined, harmonized data, apply inter-batch correction using `sva::ComBat` or `limma::removeBatchEffect`, specifying both "platform" and "study batch" as factors.

Data Presentation: Key Quantitative Impacts of Batch Effects

Table 1: Simulated Impact of Batch Effect on False Discovery Rate (FDR)

| Batch Effect Strength (Cohen's d) | Nominal FDR (5%) | Actual FDR (Uncorrected) | Actual FDR (ComBat-Corrected) | Power Loss (Uncorrected) |

|---|---|---|---|---|

| None (d = 0.0) | 5.0% | 5.1% | 5.2% | 0% |

| Mild (d = 0.5) | 5.0% | 18.7% | 5.8% | 12% |

| Moderate (d = 1.0) | 5.0% | 42.3% | 6.5% | 35% |

| Severe (d = 1.5) | 5.0% | 68.9% | 7.1% | 62% |

Note: Simulation based on 10,000 probes, 20 cases/20 controls across 2 batches. True differentially methylated probes (DMPs) = 10%.

Table 2: Comparison of Common Batch Effect Correction Methods for Methylation Arrays

| Method (Package) | Type | Key Input Required | Pros | Cons |

|---|---|---|---|---|

| ComBat (sva) | Model-based | Batch variable | Robust, handles small batches, preserves mean-variance trend. | Assumes parametric distributions, risk of over-correction. |

| removeBatchEffect (limma) | Linear model | Batch and model design matrices | Fast, simple, integrates with limma pipeline. | Does not adjust for variance inflation, only mean shifts. |

| RUVm (missMethyl) | Factor analysis | Negative control probes/samples | Effective for unknown batch factors, flexible. | Choice of controls and k factors is critical and difficult. |

| RefFreeEWAS (RefFreeEWAS) | Factor analysis | None (unsupervised) | No need for control probes, models cell mixture. | Computationally intensive, can be unstable. |

Experimental Protocols

Protocol 1: Diagnosing Batch Effects with PCA and Density Plots

Objective: To visually and statistically identify the presence of batch effects in methylation β-value or M-value matrices.

Materials: Normalized β-value matrix, Sample sheet with batch and phenotype info, R with minfi, ggplot2, factoextra.

Method:

- Subset to Most Variable Probes: Select the top 10,000-50,000 probes with the highest standard deviation across all samples to focus on informative variation.

- Perform PCA: Use `prcomp()` on the transposed matrix (samples as rows, probes as columns). Center the data (`center=TRUE`). Scale if using M-values (`scale.=TRUE`).

- Visualize: Create a PCA scatter plot (PC1 vs. PC2) coloring points by `Batch` and shaping points by `Phenotype`.

- Statistical Test: Use PERMANOVA (`vegan::adonis2`) to test if batch explains a significant proportion of variance in the distance matrix.

- Density Plot: Plot the density of P-values from a simple linear model (phenotype ~ methylation) for each probe. A large left skew or a peak near 1 suggests problematic distribution.

Protocol 2: Applying ComBat Correction with Variance Stabilization

Objective: To remove batch effects while preserving biological signal using the ComBat empirical Bayes framework.

Materials: M-value matrix (recommended over β-values), Batch vector, Optional: Model matrix for biological covariates.

Method:

- Prepare Data: Convert β-values to M-values using `log2(β/(1-β))`. Ensure the matrix `data.m` and `batch` vector are aligned.

- Define Model: If you have biological covariates (e.g., age, sex) to preserve, create a model matrix `mod <- model.matrix(~phenotype+age, data=pData)`. For simple preservation of group means, use `mod <- model.matrix(~phenotype)`.

- Run ComBat: Use `sva::ComBat(dat=data.m, batch=batch, mod=mod, par.prior=TRUE, prior.plots=FALSE)`.

- Diagnose: Repeat Protocol 1's PCA on the `corrected.m` matrix. Batch clustering should be diminished, while phenotypic groups should remain distinct.

- Back-transform (optional): Convert corrected M-values back to β-values for interpretation: `corrected.beta <- 2^corrected.m / (2^corrected.m + 1)`.
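The two conversions in this protocol can be written as small helper functions. This is a sketch: the function names and the `offset` used to keep the logit finite at exactly 0 or 1 are assumptions, and a constant shift stands in for the batch adjustment.

```r
beta_to_m <- function(beta, offset = 1e-6) {
  # Clamp away from 0 and 1 so the logit stays finite (offset is an assumed choice)
  b <- pmin(pmax(beta, offset), 1 - offset)
  log2(b / (1 - b))
}

m_to_beta <- function(m) 2^m / (2^m + 1)

beta <- c(0, 0.02, 0.5, 0.98, 1)
m <- beta_to_m(beta)

# A shift applied on the M-value scale (standing in for a batch adjustment)
# stays inside the 0-1 range after back-transformation
shifted_beta <- m_to_beta(m - 2)
print(range(shifted_beta))
```

This is the practical reason for correcting on the M-value scale: any adjustment made there maps back to a valid β-value, whereas the same adjustment applied directly to β-values can leave the 0-1 range.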

Mandatory Visualizations

Diagram Title: The Cascade of Error from Uncorrected Batch Effects

Diagram Title: Batch Effect Correction Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Methylation Array Batch Effect Management

| Item | Function & Relevance to Batch Correction |

|---|---|

| Infinium MethylationEPIC v2.0 Kit | Latest array platform. Using the most recent, consistent kit reduces batch variation at source. |

| Identical Reagent Lots | Using the same lots of amplification, hybridization, and staining kits for all samples in a study is the first and most critical defense against batch effects. |

| Control Samples (Reference DNA) | Commercially available reference genomic DNA (e.g., from cell lines like NA12878). Include aliquots from a single master stock in every batch to directly measure inter-batch variation. |

| Bisulfite Conversion Kit | High-efficiency, consistent kits (e.g., EZ DNA Methylation kits). Incomplete conversion is a major source of technical bias and must be uniform across batches. |

| Pyrosequencing Assays | Orthogonal validation technology. Essential for validating a subset of DMPs identified post-correction, confirming true signals are preserved. |

| Housekeeping/Invariant Probe Sets | Curated lists of probes (e.g., from housekeeping genes) expected to show minimal biological variation. Serve as negative controls for methods like RUVm. |

Troubleshooting Guides & FAQs

General Issues

Q1: My PCA plot shows strong separation, but I know my samples are biologically similar. How do I determine if this is a batch effect? A: First, color the PCA plot by the suspected batch variable (e.g., processing date, plate ID). If the separation aligns with these technical groups, it strongly suggests a batch effect. Next, generate a density plot of control probe intensities or global methylation beta values grouped by the same batch variable. Systematic shifts in the density distributions between batches confirm the issue. Correlate these findings with a hierarchical clustering dendrogram; if samples cluster more strongly by batch than by expected biological group, the evidence is conclusive.

Q2: The hierarchical clustering dendrogram is difficult to interpret because I have too many samples. What can I do? A: This is common in large cohort studies. We recommend two approaches: 1) Subset and Validate: Perform clustering on a random subset of samples from each suspected batch and biological group. If the batch-based clustering persists in multiple random subsets, it's reliable. 2) Use a Heatmap: Create a heatmap of the top most variable probes (e.g., 1000-5000) alongside the dendrogram. Color the sample annotation bar by batch and biological condition. The combined visualization makes patterns clearer.

Q3: My density plots of beta values overlap almost perfectly, but PCA still shows batch separation. What does this mean? A: This indicates that the batch effect is not causing a global shift in methylation levels, but is affecting a specific subset of probes. The PCA is sensitive to this structured variation. You should investigate the loadings of the principal components that separate batches. Probes with the highest absolute loading values are driving the separation. These are often associated with technical artifacts like those containing single nucleotide polymorphisms (SNPs) or being located in specific genomic regions susceptible to processing variability.

Q4: After applying batch correction, my PCA batch separation is reduced, but my hierarchical clustering still shows a batch-specific branch. Is the correction a failure? A: Not necessarily. Hierarchical clustering can be sensitive to residual, subtle technical variation. Evaluate the strength of the batch branch. If biological groups are now sub-clustering within it, the correction may be partially successful. Quantify the improvement using metrics like the silhouette score or a Principal Variance Components Analysis (PVCA) before and after correction. A reduction in the variance component attributed to batch is the key metric of success.

Technical & Software Issues

Q5: I get an error when trying to run PCA on my methylation beta value matrix due to missing values. How should I handle missing data? A: Methylation arrays typically have very few missing values if data processing is standard. However, if present, you have two main options: 1) Imputation: Use a method like k-nearest neighbors (KNN) imputation, which is suitable for this data type. 2) Probe Removal: Remove probes that have missing values across more than a defined threshold (e.g., 5%) of samples. For the remaining sporadic missing values, you can impute with the median beta value for that probe across all samples. Do not use mean imputation without careful consideration.
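The probe-removal and median-imputation option can be sketched in base R as follows. The matrix is simulated and the 5% threshold is the example value from the answer above. KNN imputation (for instance `impute::impute.knn` from Bioconductor) would replace the median step and is not shown.

```r
set.seed(3)
beta <- matrix(runif(200 * 20), nrow = 200)   # simulated probes x samples
beta[sample(length(beta), 40)] <- NA          # sporadic missing values
beta[1, 1:5] <- NA                            # one probe missing in 25% of samples

# 1) Drop probes missing in more than 5% of samples
miss_frac <- rowMeans(is.na(beta))
kept <- beta[miss_frac <= 0.05, , drop = FALSE]

# 2) Impute the remaining sporadic NAs with the probe-wise median
probe_median <- apply(kept, 1, median, na.rm = TRUE)
na_idx <- which(is.na(kept), arr.ind = TRUE)
kept[na_idx] <- probe_median[na_idx[, "row"]]

# PCA now runs without missing-value errors (samples as rows)
pca <- prcomp(t(kept), center = TRUE)
print(dim(kept))
```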

Q6: What distance metric and linkage method are most appropriate for hierarchical clustering of methylation data for batch inspection? A: For methylation beta values (ranging from 0 to 1), Euclidean distance is commonly used and is sensitive to batch effects that cause shifts in value. For correlation-based analysis, one minus the Pearson correlation is effective. For linkage, Ward's method often produces the most interpretable and compact clusters for detecting batch-driven groupings. Always validate by comparing the results from multiple distance/linkage combinations.

Experimental Protocols for Diagnostic Analysis

Protocol 1: Generating Diagnostic Plots for Batch Detection in Methylation Studies

Objective: To visually identify the presence of technical batch effects in methylation array data (e.g., Illumina EPIC/450K).

Materials: Processed methylation beta value matrix (samples x probes), sample metadata sheet including batch identifiers (plate, row, date, etc.) and biological phenotypes.

Software: R Statistical Environment with packages ggplot2, factoextra, stats, ComplexHeatmap.

Methodology:

- Data Preparation: Filter out cross-reactive and SNP-associated probes. Optionally, subset to the top 50,000 most variable probes to reduce noise.

- PCA Plot:

  - Perform PCA on the transposed beta matrix (probes as variables).

  - Extract the first 5-10 principal components (PCs).

  - Plot PC1 vs. PC2, coloring points by `Batch` and shaping points by `Biological Group`.

  - In parallel, create a scree plot to assess variance explained by each PC.

- Density Plot:

  - Calculate the mean or median beta value per sample.

  - Plot the distribution of this global metric using a smoothed density curve, grouped by `Batch`.

  - Overlay all groups on the same plot for direct comparison.

- Hierarchical Clustering:

  - Calculate a Euclidean distance matrix between samples using the top variable probes.

  - Perform hierarchical clustering using Ward's linkage method.

  - Plot the resulting dendrogram, coloring sample labels or using an adjacent annotation bar to indicate `Batch` and `Biological Group`.

Interpretation: Concordant evidence across all three plots (PCA separation by batch, shifted density curves, and dendrogram branching by batch) confirms a significant batch effect requiring correction.
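The hierarchical clustering step of this protocol might look like the sketch below. The matrix is simulated with an artificial batch shift, `ward.D2` is used as the Ward variant that works on unsquared Euclidean distances, and the cross-tabulations are a numeric complement to reading the dendrogram.

```r
set.seed(11)
batch <- factor(rep(c("B1", "B2"), each = 10))
group <- factor(rep(c("case", "control"), times = 10))

# Simulated beta-like matrix (probes x samples) with a shift in batch B2
beta <- matrix(rnorm(500 * 20, mean = 0.5, sd = 0.05), nrow = 500,
               dimnames = list(NULL, paste0("S", 1:20)))
beta[, batch == "B2"] <- beta[, batch == "B2"] + 0.05

d  <- dist(t(beta), method = "euclidean")  # distances between samples
hc <- hclust(d, method = "ward.D2")        # Ward's linkage
clusters <- cutree(hc, k = 2)

# Do the two main branches follow batch or biological group?
by_batch <- table(clusters, batch)
by_group <- table(clusters, group)
print(by_batch)
print(by_group)

# Dendrogram with labels showing batch and group
plot(hc, labels = paste(batch, group, sep = ":"), cex = 0.7)
```

If the first table is close to diagonal while the second is evenly split, the top-level branches are following batch rather than biology.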

Protocol 2: Validating Batch Correction Using Visual Diagnostics

Objective: To assess the efficacy of a batch correction method (e.g., ComBat, limma's `removeBatchEffect`).

Materials: Original and batch-corrected methylation beta matrices, sample metadata.

Methodology:

- Parallel Visualization: Generate PCA, density, and hierarchical clustering plots as per Protocol 1 for both the original and corrected datasets.

- Comparative Metrics:

  - Calculate the average silhouette width for batch labels before and after correction. A decrease indicates reduced batch clustering.

  - Perform PVCA to quantify the percent variance attributable to batch and biological factors pre- and post-correction.

- Biological Integrity Check: Ensure that known biological differences (e.g., tumor vs. normal) are preserved or enhanced in the corrected data's visualizations.
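The silhouette comparison can be illustrated with a small base-R function. This is a sketch under simplifying assumptions: the data are simulated, every batch has at least two samples, and per-batch mean centring stands in for a real correction method such as ComBat. The helper name `avg_silhouette` is hypothetical.

```r
avg_silhouette <- function(x, labels) {
  # x: samples x features matrix; labels: one batch label per sample
  d <- as.matrix(dist(x))
  labels <- as.character(labels)
  s <- sapply(seq_len(nrow(d)), function(i) {
    same <- labels == labels[i]
    same[i] <- FALSE
    other <- labels != labels[i]
    a <- mean(d[i, same])                                # mean distance within own batch
    b <- min(tapply(d[i, other], labels[other], mean))   # mean distance to nearest other batch
    (b - a) / max(a, b)
  })
  mean(s)
}

set.seed(5)
batch <- rep(c("B1", "B2"), each = 10)
x_before <- matrix(rnorm(20 * 100), nrow = 20)
x_before[batch == "B2", ] <- x_before[batch == "B2", ] + 1   # artificial batch shift

# Stand-in correction: centre each feature within each batch
x_after <- x_before
for (b in unique(batch)) {
  x_after[batch == b, ] <- scale(x_after[batch == b, ], center = TRUE, scale = FALSE)
}

sil <- c(before = avg_silhouette(x_before, batch),
         after  = avg_silhouette(x_after, batch))
print(round(sil, 3))
```

A drop in the batch silhouette should be read alongside the biological integrity check, since a method that removes all structure would also lower it.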

Table 1: Common Causes of Batch Effects in Methylation Array Studies

| Cause | Typical Signature in PCA | Signature in Density Plots |

|---|---|---|

| Different Processing Dates | Strong separation along PC1/PC2 by date group. | Clear shift in global mean/median density peak. |

| Plate-to-Plate Variation | Clustering of all samples from the same plate. | Multiple distinct density peaks corresponding to plates. |

| Array Position (Row) | Gradient of samples along a PC corresponding to row number. | Subtle, ordered shift in density distributions. |

| Technician | Grouping by operator, often interacting with date. | Similar to date signature, may be less pronounced. |

Table 2: Recommended Actions Based on Diagnostic Results

| Visual Pattern | Suggested Action | Next Step |

|---|---|---|

| Strong batch signal in all plots | Proceed with batch correction. | Choose a model-based method (e.g., ComBat) that includes biological covariates. |

| Batch signal only in PCA | Investigate probe-specific effects. | Check loadings of critical PCs; consider removing problematic probes (e.g., SNP-rich). |

| No batch signal, strong biological signal | No batch correction needed. | Proceed with downstream biological analysis. |

| Correction removes biological signal | Correction is too aggressive. | Re-run correction with weaker priors or using a different method (e.g., SVA). |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Methylation Array Batch Analysis

| Item | Function in Batch Analysis |

|---|---|

| Illumina Methylation BeadChip (EPIC/850K) | Platform for genome-wide methylation profiling; source of raw beta values. |

| Control Probe Data | Provides intensity metrics for technical monitoring (staining, hybridization) across batches. |

| Sample Sentinel (Duplicates) | Identically prepared samples distributed across plates/batches to directly measure technical variation. |

| Reference Standard DNA (e.g., GM12878) | Commercially available, well-characterized genomic DNA run as an inter-batch calibrator. |

| Bisulfite Conversion Kit | Critical pre-processing step; variation here is a major source of batch effects. Use the same kit lot. |

| Normalization Controls (e.g., SeSaMe method standards) | Spike-in controls for whole-genome bisulfite sequencing that can be adapted for array normalization. |

| R/Bioconductor Packages (minfi, sva, limma) | Software tools for data import, QC, visualization, and batch correction. |

Diagrams

Diagram 1: Batch Effect Diagnostic Workflow

Diagram 2: Post-Correction Validation Pathway

Exploratory Data Analysis (EDA) Using R/Bioconductor Packages (minfi, methylumi)

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During the read.metharray.exp function call in minfi, I encounter the error: "Error in .checkAssayNames(sourceDir, array) : Could not find any IDAT files in the specified directory." What are the common causes?

A: This error indicates the function cannot locate the required .idat files. Verify the following:

- Directory Path: Ensure the path is correct and uses forward slashes (`/`) or double backslashes (`\\`) on Windows.

- File Naming: `minfi` expects standard Illumina filenames (e.g., `200514310001_R01C01_Grn.idat`). Do not rename files.

- File Presence: Confirm the directory contains both `_Grn.idat` and `_Red.idat` files for every sample.

- Working Directory: Use `getwd()` and `list.files()` in R to confirm your session is in the correct location.
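The file checks above can be automated with a few lines of base R. The helper `check_idat_pairs` is a hypothetical name introduced here, and the demo directory is created on the fly with empty placeholder files purely for illustration.

```r
# List IDAT files under `dir` and report which samples have both channels.
check_idat_pairs <- function(dir) {
  files <- basename(list.files(dir, pattern = "\\.idat(\\.gz)?$",
                               recursive = TRUE, ignore.case = TRUE))
  strip <- function(suffix) {
    pat <- paste0(suffix, "\\.idat(\\.gz)?$")
    hit <- grepl(pat, files, ignore.case = TRUE)
    sub(pat, "", files[hit], ignore.case = TRUE)
  }
  grn <- strip("_Grn")
  red <- strip("_Red")
  list(n_files     = length(files),
       complete    = intersect(grn, red),   # both channels present
       missing_red = setdiff(grn, red),     # green file without red partner
       missing_grn = setdiff(red, grn))     # red file without green partner
}

# Demo on a temporary directory with one complete and one incomplete sample.
demo_dir <- tempfile("idat_demo")
dir.create(demo_dir)
file.create(file.path(demo_dir, c("200514310001_R01C01_Grn.idat",
                                  "200514310001_R01C01_Red.idat",
                                  "200514310001_R02C01_Grn.idat")))
idat_report <- check_idat_pairs(demo_dir)
```

If `n_files` is 0, the path is wrong. A non-empty `missing_red` or `missing_grn` points to an incomplete file pair.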

Q2: After loading data with methylumi, my beta-value or M-value distributions appear severely skewed or show extreme bimodality when plotted with densityPlot. Is this a technical artifact?

A: Bimodality by itself is expected for beta values, because most CpGs are either largely unmethylated or largely methylated. Distributions that are severely skewed, or that differ systematically between groups of samples, often indicate a batch effect. This is a critical observation for your thesis. Proceed as follows:

- Color by Batch: Use the `sampGroups` argument of `densityPlot` to color distributions by known batch variables (e.g., processing date, slide).

- PCA Investigation: Perform PCA (`prcomp` on M-values) and color the PC1 vs. PC2 plot by the same batch variables. Clustering by batch confirms the effect.

- Protocol: The experimental protocol to diagnose this is below (see Key Diagnostic Protocol).

Q3: When using minfi::preprocessQuantile, the function fails with "Error in approxfun: need at least two non-NA values to interpolate." What does this mean?

A: This error typically stems from an underlying issue with the data object, often related to poor-quality probes.

- Detection P-values: Calculate detection p-values (`minfi::detectionP`) and remove probes that fail (e.g., `p > 0.01`) in a substantial fraction of samples before normalization.

- Check Dimensions: Use `dim(getBeta(object))` and `dim(getM(object))` before and after filtering to ensure data remains.

- Recommended Filtering Protocol:
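A minimal sketch of such a filter, assuming a probes-by-samples matrix of detection p-values. In a real pipeline this matrix would come from `minfi::detectionP(rgSet)`; here it is simulated so the example is self-contained. The function name `filter_by_detp` and the 10% failure threshold are assumptions for illustration, not fixed recommendations.

```r
# Simulated detection p-value matrix (probes x samples).
set.seed(42)
detP <- matrix(runif(1000 * 8, 0, 0.005), nrow = 1000,
               dimnames = list(sprintf("cg%08d", 1:1000), paste0("S", 1:8)))
detP[1:25, 1:4] <- 0.5          # 25 probes that fail in half of the samples

# Keep probes that fail (p > p_cut) in at most `max_fail_frac` of samples.
filter_by_detp <- function(detP, p_cut = 0.01, max_fail_frac = 0.1) {
  fail_frac <- rowMeans(detP > p_cut)
  fail_frac <= max_fail_frac
}

keep <- filter_by_detp(detP)
dim_before <- dim(detP)
dim_after  <- dim(detP[keep, , drop = FALSE])
```

Comparing `dim_before` with `dim_after` is the dimension check described above. The resulting logical vector can then be used to subset the methylation object by probe name.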

Q4: My dataset combines 450K and EPIC arrays. How do I handle this in EDA to avoid platform-specific confounding?

A: You must restrict analysis to the common probe set.

- Methodology: Use the `minfi` package to manage this automatically.

- EDA Imperative: In subsequent PCA, always include a shape or color for "Array Type" to ensure any observed variance isn't confounded by platform.
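At the object level, `minfi` offers `combineArrays()` for merging 450K and EPIC data. The sketch below shows the same idea on plain beta matrices using base R only; the two small matrices and their probe names are made up for illustration.

```r
# Toy beta matrices from two platforms (probes x samples).
beta_450k <- matrix(c(0.10, 0.85, 0.40, 0.92,
                      0.12, 0.80, 0.45, 0.90), ncol = 2,
                    dimnames = list(c("cg01", "cg02", "cg03", "cg04"),
                                    c("A1", "A2")))
beta_epic <- matrix(seq(0.1, 0.9, length.out = 12), ncol = 3,
                    dimnames = list(c("cg02", "cg03", "cg05", "cg04"),
                                    c("E1", "E2", "E3")))

# Restrict both matrices to the shared probes, in the same order, and bind.
common   <- intersect(rownames(beta_450k), rownames(beta_epic))
combined <- cbind(beta_450k[common, , drop = FALSE],
                  beta_epic[common, , drop = FALSE])

# Record the platform so it can be used as a color or shape in PCA plots.
array_type <- factor(rep(c("450K", "EPIC"),
                         times = c(ncol(beta_450k), ncol(beta_epic))))
```

Indexing both matrices by `common` guarantees that rows line up by probe ID rather than by position, which matters because the platforms order their probes differently.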

Key Diagnostic Protocol for Batch Effect Detection in Methylation EDA

Aim: To visually and statistically assess the presence of technical batch effects prior to correction, a core thesis methodology.

Materials: A normalized (or raw) matrix of M-values or Beta-values from minfi or methylumi. Sample metadata table with batch covariates (e.g., Slide, Array, Date, Processing Batch).

Procedure:

- Data Preparation: Generate an M-value matrix (`M_matrix`) from your normalized object. Ensure the matrix column names match the row names of your metadata (`meta_df`).

- Principal Component Analysis (PCA):

- Visualization: Create a PCA plot of the first two principal components, coloring points by a potential batch variable (e.g., `meta_df$Slide`).

- Quantitative Assessment: Perform PERMANOVA using the `vegan` package to test whether batch grouping explains a significant portion of variance:
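The procedure can be sketched as follows. `M_matrix` and `meta_df` are simulated here, with a slide effect added to a subset of probes; the effect size and the use of Euclidean distance for PERMANOVA are assumptions for the example. The `vegan::adonis2` call only runs if `vegan` is installed.

```r
set.seed(7)
n_probes  <- 500
n_samples <- 24
meta_df <- data.frame(Slide = factor(rep(paste0("slide", 1:3), each = 8)),
                      row.names = paste0("S", seq_len(n_samples)))
M_matrix <- matrix(rnorm(n_probes * n_samples), nrow = n_probes,
                   dimnames = list(paste0("cg", seq_len(n_probes)),
                                   rownames(meta_df)))
on_slide2 <- meta_df$Slide == "slide2"
M_matrix[1:100, on_slide2] <- M_matrix[1:100, on_slide2] + 2  # simulated batch effect

# Step 1: sample names must match between data and metadata.
stopifnot(identical(colnames(M_matrix), rownames(meta_df)))

# Step 2: PCA with samples as rows.
pca <- prcomp(t(M_matrix), center = TRUE, scale. = FALSE)
var_explained <- pca$sdev^2 / sum(pca$sdev^2)

# Step 3: PC1 vs. PC2 colored by slide (only drawn in interactive sessions).
if (interactive()) {
  plot(pca$x[, 1], pca$x[, 2], col = as.integer(meta_df$Slide), pch = 19,
       xlab = sprintf("PC1 (%.1f%%)", 100 * var_explained[1]),
       ylab = sprintf("PC2 (%.1f%%)", 100 * var_explained[2]))
}

# Quick numeric check: how much of PC1 is explained by slide?
pc1_r2 <- summary(lm(pca$x[, 1] ~ meta_df$Slide))$r.squared

# Step 4: PERMANOVA on Euclidean distances between samples.
if (requireNamespace("vegan", quietly = TRUE)) {
  perm <- vegan::adonis2(dist(t(M_matrix)) ~ Slide, data = meta_df,
                         permutations = 999)
  print(perm)
}
```

The R² column of the `adonis2` output corresponds to the "Variance Explained" column in the table that follows.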

Quantitative Data from Batch Effect Diagnosis

Table 1: Example Output from PERMANOVA on Simulated Data Before Batch Correction

| Batch Factor (Covariate) | Df | Sum of Squares | R² (Variance Explained) | F Value | Pr(>F) |

|---|---|---|---|---|---|

| Processing Date | 2 | 145.8 | 0.32 | 18.25 | 0.001 |

| Slide ID | 5 | 89.4 | 0.19 | 8.91 | 0.001 |

| Residual | 42 | 224.5 | 0.49 | - | - |

| Total | 49 | 459.7 | 1.00 | - | - |

Table 2: Key Research Reagent Solutions

| Item/Reagent | Function in Methylation EDA Workflow |

|---|---|

| minfi R/Bioconductor Package | Primary toolkit for importing, preprocessing, normalizing, and analyzing Illumina methylation array data. |

| methylumi R/Bioconductor Package | Alternative package for data input and basic analysis; sometimes used for legacy pipeline compatibility. |

| Illumina Methylation BeadChip (450K/EPIC) | The microarray platform that generates the raw intensity data (.idat files) for genome-wide methylation profiling. |

| RGChannelSet Object (minfi) | Data class holding raw red and green channel intensities; the starting point for most minfi pipelines. |

| GenomicRatioSet Object (minfi) | Data class holding processed methylation data (Beta or M-values) with genomic coordinates, used for downstream EDA and analysis. |

| sva R Package | Contains the ComBat function, a standard tool for empirical batch effect correction using an empirical Bayes framework. |

Visualization of Workflows

Title: Methylation EDA & Batch Effect Management Workflow

Title: Confounding in Methylation Data Analysis

Step-by-Step Methods: Implementing Batch Correction Algorithms for EPIC and 450K Arrays

Troubleshooting Guides and FAQs

FAQ 1: My model-based correction (e.g., ComBat) is removing biological signal along with batch effects. How can I diagnose and prevent this?

- Answer: This is often due to confounding between your biological variable of interest and the batch variable. Before correction, create a visualization (e.g., PCA plot colored by batch and by condition) and cross-tabulate batch against condition to check for severe confounding. If the design is only partially confounded, pass the biological variable to ComBat through its `mod` argument so that its effect is preserved. Alternatively, estimate surrogate variables (SVs) with the `sva` package (`num.sv()` gives the number to estimate) and include them as covariates in the downstream model instead of correcting the data directly. If batch and condition are completely confounded, no statistical method can separate them.

FAQ 2: When using Surrogate Variable Analysis (SVA), how do I determine the optimal number of factors to include?

- Answer: The optimal number is data-dependent. Use `num.sv()` from the `sva` package, which offers a permutation-based estimate (`method = "be"`, the default) and an asymptotic estimate (`method = "leek"`). Compare the results from both methods. Start with the lower estimated number to avoid over-correction. Validate by assessing the reduction in batch clustering in post-correction PCA plots while maintaining separation by biological groups of interest.

FAQ 3: For Reference-Based Correction (e.g., RUVm), I am unsure how to select appropriate negative control probes. What are the criteria?

- Answer: Negative control probes should be probes whose methylation levels are a priori expected not to be associated with the biological conditions of interest. Common choices include:

- Illumina negative control probes (INCs) included on the array.

- Housekeeping probes known to be universally unmethylated.

- Probes with the lowest standard deviation across a large public dataset (e.g., from the GEO database), suggesting minimal biological variability. Performance should be validated by checking if the estimated factors correlate with known batch variables but not with your primary biological variable.

FAQ 4: After applying any correction method, how do I quantitatively assess its success?

- Answer: Use a combination of visual and quantitative metrics, as summarized in the table below.

Table 1: Quantitative Metrics for Batch Effect Correction Assessment

| Metric | Calculation Method | Target Outcome Post-Correction | Interpretation Guide |

|---|---|---|---|

| Principal Component (PC) Variance | Variance explained by top PCs before/after correction. | Reduced variance in batch-associated PCs. | A significant drop in PC1/PC2 variance tied to batch indicates success. |

| Pooled Within-Batch Variance | Mean variance within each batch. | Should remain stable or decrease slightly. | A large increase suggests over-correction and signal loss. |

| Between-Batch Variance (ANOVA) | Perform ANOVA for each probe using batch as the factor. | Median F-statistic and number of significant probes should drastically decrease. | Measures the residual batch effect strength. |

| Silhouette Width (Batch) | Measures cluster cohesion/separation for batch labels. | Score should approach 0 or become negative. | Positive scores indicate residual batch-driven clustering. |

| Preservation of Biological Signal | ANOVA or t-test statistic for the primary biological variable. | Should remain significant and stable. | A large decrease indicates removal of biological signal. |

Experimental Protocols

Protocol 1: Implementing a Model-Based Correction Workflow Using ComBat

- Data Preparation: Load your normalized beta or M-values matrix. Prepare a model matrix for your biological variables of interest (e.g., disease status, treatment). Prepare a separate batch covariate vector.

- Confounding Check: Generate a PCA plot from the uncorrected data, coloring points by batch and separately by primary biological condition. Assess the degree of overlap.

- ComBat Execution: Use the `ComBat` function from the `sva` package in R. Input the data matrix, batch vector, and the model matrix of biological covariates (`mod` parameter). Choose `par.prior=TRUE` for parametric empirical Bayes shrinkage unless the dataset is very small.

- Validation: Generate a post-correction PCA plot and calculate metrics from Table 1. Compare the reduction in batch association with the preservation of biological group differences.
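The confounding check in this protocol can be complemented by a numeric test before any plots are drawn. The sketch below is one possible approach in base R: it cross-tabulates batch against condition and checks whether a design matrix containing both is of full rank. The helper name `check_confounding` and the toy label vectors are assumptions for illustration.

```r
# Cross-tabulate batch and condition, and test whether both effects are
# jointly estimable (design matrix of full column rank).
check_confounding <- function(batch, condition) {
  tab    <- table(batch = batch, condition = condition)
  design <- model.matrix(~ factor(condition) + factor(batch))
  list(table       = tab,
       empty_cells = sum(tab == 0),
       full_rank   = qr(design)$rank == ncol(design))
}

# Balanced design: every batch contains both conditions.
balanced <- check_confounding(batch     = rep(c("b1", "b2"), each = 4),
                              condition = rep(c("case", "ctrl"), times = 4))

# Fully confounded design: each batch contains a single condition.
confounded <- check_confounding(batch     = rep(c("b1", "b2"), each = 4),
                                condition = rep(c("case", "ctrl"), each = 4))
```

When `full_rank` is `FALSE`, batch and condition cannot be separated by any model-based correction, and passing such a design to ComBat is expected to fail or to remove the biological signal.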

Protocol 2: Surrogate Variable Analysis (SVA) for Unmodeled Factors

- Define Models: Create a full model matrix (`mod`) that includes your known variables (e.g., phenotype, age). Create a null model matrix (`mod0`) that includes only the intercept and any adjustment covariates (but not the primary variable).

- Estimate SVs: Use the `sva` function from the `sva` package, passing the data matrix, `mod`, and `mod0`. Set the number of factors (`n.sv`) to the value estimated by `num.sv()`.

- Incorporate SVs: Add the estimated surrogate variables as covariates to your linear model for differential analysis (e.g., in `limma`). Note that ComBat's `mod` argument holds covariates whose effects are preserved, so SVs that capture unwanted variation belong in the downstream model rather than in `mod`.
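A sketch of this protocol on simulated M-values follows. The phenotype table, the hidden factor, and the effect sizes are invented for the example. The `sva` calls are guarded so the block still runs (building only the model matrices) when the package is not installed.

```r
set.seed(11)
pheno <- data.frame(
  condition = factor(rep(c("control", "case"), each = 10),
                     levels = c("control", "case")),
  age = round(runif(20, 30, 70))
)

# Simulated M-values with an unmodeled factor affecting the first 400 probes.
M_matrix <- matrix(rnorm(2000 * 20), nrow = 2000)
hidden   <- rep(c(0, 1), times = 10)
M_matrix[1:400, ] <- M_matrix[1:400, ] + outer(rep(1.5, 400), hidden)

# Step 1: full model (with the primary variable) and null model (without it).
mod  <- model.matrix(~ condition + age, data = pheno)
mod0 <- model.matrix(~ age, data = pheno)

# Steps 2 and 3: estimate SVs and append them to the design matrix.
design_sv <- mod
if (requireNamespace("sva", quietly = TRUE)) {
  n_sv <- sva::num.sv(M_matrix, mod, method = "be")
  if (n_sv > 0) {
    sv_fit <- sva::sva(M_matrix, mod, mod0, n.sv = n_sv)
    sv <- sv_fit$sv
    colnames(sv) <- paste0("SV", seq_len(ncol(sv)))
    design_sv <- cbind(mod, sv)   # pass this design to limma::lmFit
  }
}
```

Keeping the SVs in the design matrix leaves the data matrix itself untouched, so the downstream model accounts for the degrees of freedom spent on them.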

Protocol 3: Reference-Based Correction Using RUVm

- Identify Control Probes: Obtain a set of negative control probes (see FAQ 3). The `missMethyl` package provides `getINCs()` to extract the Illumina negative control probes from an `RGChannelSet`.

- Perform Differential Analysis (Initial): Run a first-pass differential methylation analysis (e.g., using `limma`) with only your biological variable of interest to obtain p-values for all probes.

- Define Empirical Controls: Select the least significant probes from step 2 (e.g., the 5,000-10,000 probes with the largest p-values) as empirical negative controls.

- Apply RUVm: Use the `RUVfit` and `RUVadj` functions from the `missMethyl` package. Specify the data, your biological variable design matrix, and the set of control probes (from step 1 or 3).

- Iterate: The results from step 4 provide a better estimate of non-differential probes. The process can be repeated once to refine the empirical controls.

Visualization Diagrams

Diagram 1: Logical Decision Flow for Paradigm Selection

Diagram 2: SVA/ComBat Hybrid Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Methylation Batch Correction |

|---|---|

| R/Bioconductor sva Package | Implements ComBat and Surrogate Variable Analysis (SVA) for estimating and adjusting for batch effects and unmeasured confounders. |

| RUVm functions in R/Bioconductor missMethyl (RUVfit, RUVadj) | Provide reference-based batch correction (RUVm) using negative control probes. |

| R/Bioconductor limma Package | Standard for fitting linear models to methylation data post-correction for differential analysis. Essential for creating empirical controls in RUVm. |

| R/Bioconductor missMethyl Package | Provides utilities for extracting negative control probes for RUVm. |

| Infinium MethylationEPIC/850K BeadChip | The standard array platform. Knowledge of its probe design (I-type vs. II-type) is critical for selecting technical control probes. |

| Public Repository Data (e.g., GEO, TCGA) | Serves as a source for identifying stable control probes or as a potential reference dataset for harmonization methods. |

| Housekeeping Gene Probe Lists | Probes in universally unmethylated genomic regions (e.g., active promoters of housekeeping genes) can serve as negative controls for RUVm. |

Applying ComBat and ComBat-seq (sva Package) for Empirical Bayes Adjustment

Troubleshooting Guides and FAQs

Q1: My ComBat-adjusted beta values from my Illumina MethylationEPIC array data are outside the theoretical range of 0-1. What went wrong and how do I fix it?

A1: ComBat, as implemented in the sva package, is designed for general continuous data and does not constrain the output range. When it is applied directly to beta values, adjusted values can therefore fall below 0 or above 1.

- Solution 1 (Recommended): Apply ComBat to M-values (the log2 logit transformation of beta values), which are continuous and unbounded. After adjustment, convert the adjusted M-values back to beta values using `2^adjusted_M / (2^adjusted_M + 1)`.

- Solution 2: Pair ComBat with methylation-aware normalization such as `Funnorm` or `BMIQ` upstream, so that less technical variation remains for ComBat to remove.
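The two transformations can be written as small helpers. This sketch assumes the log2 convention for M-values; the function names and the clamping constant `eps` are choices made for the example.

```r
# Beta -> M (log2 logit). Betas are clamped away from 0 and 1 to avoid +/-Inf.
beta_to_m <- function(beta, eps = 1e-6) {
  beta <- pmin(pmax(beta, eps), 1 - eps)
  log2(beta / (1 - beta))
}

# M -> beta. Algebraically equal to 2^M / (2^M + 1), written in a form that
# stays finite for very large |M|.
m_to_beta <- function(m) {
  1 / (1 + 2^(-m))
}

beta_example <- c(0.1, 0.5, 0.9)
m_example    <- beta_to_m(beta_example)
beta_back    <- m_to_beta(m_example)
```

Because `m_to_beta` maps any real number into the interval from 0 to 1, values adjusted on the M scale cannot leave the valid beta range after back-conversion.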

Q2: When using ComBat-seq for adjusting count data from my RNA-seq experiment, I receive an error about "negative counts" or the model fails to converge. What are the causes?

A2: ComBat-seq uses a negative binomial model. Errors can arise from:

- Extreme batch effects or outliers: These can destabilize the model fit.

- Low-count genes: Genes with many zero or very low counts across samples can destabilize the model.

- Solution: Confirm that the input is a raw, untransformed count matrix, then filter low-count genes more stringently (e.g., require >10 counts in at least one batch) before running ComBat-seq. If problems persist, consider switching to `ComBat` on log-transformed normalized counts, where `mean.only=TRUE` is available if the batch effect is assumed to be purely additive.

Q3: How do I choose between parametric and nonparametric empirical Bayes adjustment in ComBat, and what does the "mean.only" option do?

A3: This choice is governed by the `par.prior` and `mean.only` parameters in the `ComBat` function.

- `par.prior=TRUE`: Uses a parametric empirical Bayes framework, assuming the batch effect parameters follow parametric prior distributions. It is faster and recommended for datasets with many samples (>20).

- `par.prior=FALSE`: Uses a nonparametric empirical Bayes framework. It makes fewer assumptions but requires more samples and is computationally slower. Use if the parametric assumption seems violated.

- `mean.only=TRUE`: Assumes the batch effect is only additive (affects the mean) and not multiplicative (does not affect the variance). This simplifies the model and can be more stable when batch sizes are very small. Use if you have reason to believe variance is consistent across batches.

Q4: After running ComBat, my PCA plot still shows strong batch clustering. Did the correction fail?

A4: Not necessarily. Residual technical clustering can persist. You must check:

- Biological vs. Technical Signal: Ensure the PCA is colored by known biological factors (e.g., disease state). The correction may have successfully preserved biological signal that correlates with batch.

- Unmodeled Covariates: You may have unaccounted covariates in your model. Use the `mod` parameter in `ComBat` to specify a design matrix preserving your biological variables of interest.

- Severity of Batch Effect: For extremely strong batch effects, ComBat may only reduce, not eliminate, the clustering. Quantify the reduction using metrics like the Percent Variance Explained (PVE) by batch before and after correction.

Q5: What is the difference between using ComBat on beta values versus M-values in methylation analysis, and which is statistically more appropriate?

A5: M-values are statistically more appropriate for linear modeling.

- Beta Values: Bounded between 0 and 1 (methylation proportion). Their distribution is heteroscedastic and not normal.

- M-Values: Logit-transformed beta values. They are continuous, unbounded, and more closely satisfy the assumptions of homogeneity of variance and normality underlying ComBat's linear model.

- Recommendation: Always apply ComBat to M-values for methylation data. Correcting on the beta scale can distort the data structure and lead to inferior statistical performance in downstream differential methylation analysis.

Experimental Protocols

Protocol 1: Standard ComBat Adjustment for Methylation M-Values

- Preprocessing: Process raw IDAT files using `minfi` or `sesame`. Generate beta and M-value matrices.

- Quality Control: Remove poor-quality probes and samples. Perform functional normalization (`preprocessFunnorm`) or Noob normalization.

- Batch Identification: Define your batch variable (e.g., processing plate, array slide, date).

- Model Matrix: Define a model matrix (`mod`) to preserve biological covariates (e.g., age, disease status) using `model.matrix()`.

- Run ComBat: Apply the `ComBat` function from the `sva` package to the M-value matrix.

- Back-Conversion: Convert adjusted M-values to adjusted beta values for interpretation.

- Validation: Perform PCA and examine boxplots of control probes before and after correction.
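The ComBat and back-conversion steps of this protocol might look like the sketch below. The M-value matrix and phenotype table are simulated, and the back-conversion assumes log2-based M-values. The `ComBat` call is guarded so the block runs even when `sva` is not installed.

```r
set.seed(3)
n_probes <- 300
pheno <- data.frame(
  batch   = factor(rep(c("plate1", "plate2"), each = 8)),
  disease = factor(rep(c("control", "case"), times = 8),
                   levels = c("control", "case"))
)
M_matrix <- matrix(rnorm(n_probes * 16), nrow = n_probes,
                   dimnames = list(paste0("cg", seq_len(n_probes)),
                                   paste0("S", 1:16)))
on_plate2 <- pheno$batch == "plate2"
M_matrix[, on_plate2] <- M_matrix[, on_plate2] + 1   # simulated plate shift

# Model matrix of biological covariates to preserve during adjustment.
mod <- model.matrix(~ disease, data = pheno)

if (requireNamespace("sva", quietly = TRUE)) {
  # Empirical Bayes adjustment on the M-value scale.
  M_adj <- sva::ComBat(dat = M_matrix, batch = pheno$batch, mod = mod,
                       par.prior = TRUE)
  # Back-conversion to beta values for interpretation (log2 convention).
  beta_adj <- 1 / (1 + 2^(-M_adj))
}
```

The adjusted matrix keeps the dimensions and dimnames of the input, so the validation step can reuse the same PCA code on `M_matrix` and `M_adj`.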

Protocol 2: ComBat-seq for RNA-seq Count Data

- Data Input: Start with a raw count matrix (genes x samples). Do not log-transform or normalize.

- Filtering: Filter out lowly expressed genes. A common filter is to keep genes with a count >10 in at least `n` samples, where `n` is the size of the smallest batch.

- Define Batch and Model: Identify the batch variable and create a model matrix for biological covariates.

- Run ComBat-seq: Use the `ComBat_seq` function.

- Post-correction Analysis: Use the adjusted counts for downstream differential expression analysis with tools like `DESeq2` or `edgeR`. Note: These tools will still require their own normalization (e.g., median of ratios, TMM) after batch correction.
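The filtering and adjustment steps can be sketched as follows, using simulated counts. The gene and sample numbers, the batch effect, and the low-count block are invented for the example, and the `ComBat_seq` call only runs if `sva` is installed.

```r
set.seed(5)
batch <- rep(c(1, 2), each = 6)
group <- rep(c(0, 1), times = 6)

# Simulated raw counts (genes x samples); genes 1-20 are lowly expressed.
counts <- matrix(rnbinom(200 * 12, mu = 50, size = 5), nrow = 200,
                 dimnames = list(paste0("gene", 1:200), paste0("S", 1:12)))
counts[1:20, ] <- rnbinom(20 * 12, mu = 2, size = 5)
counts[, batch == 2] <- counts[, batch == 2] * 2L     # simulated batch effect

# Keep genes with a count > 10 in at least n samples,
# where n is the size of the smallest batch.
min_batch_size <- min(table(batch))
keep     <- rowSums(counts > 10) >= min_batch_size
filtered <- counts[keep, , drop = FALSE]

if (requireNamespace("sva", quietly = TRUE)) {
  # `group` tells ComBat-seq which biological condition to preserve.
  adjusted <- sva::ComBat_seq(filtered, batch = batch, group = group)
}
```

The output of `ComBat_seq` is again an integer count matrix, which is why it can be passed directly to `DESeq2` or `edgeR`.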

Data Presentation

Table 1: Comparison of ComBat and ComBat-seq Features

| Feature | ComBat (Standard) | ComBat-seq |

|---|---|---|

| Input Data Type | Continuous, Gaussian-like data (e.g., log-CPM, M-values, normalized expression) | Raw count data (Negative Binomial distribution) |

| Core Model | Linear Model with Empirical Bayes shrinkage | Negative Binomial Generalized Linear Model |

| Prior Estimation | Parametric (par.prior=TRUE) or Nonparametric (par.prior=FALSE) | Parametric Empirical Bayes |

| Key Parameter | par.prior, mean.only | group (for condition-specific adjustment) |

| Primary Use Case | Microarray data, normalized RNA-seq data, Methylation M-values | RNA-seq raw count data directly |

| Output | Adjusted continuous values | Adjusted integer counts |

Table 2: Impact of Batch Correction on Methylation Data (Simulated EPIC Array Data)

| Metric | Before ComBat (M-values) | After ComBat (M-values) |

|---|---|---|

| Median Absolute Deviation (MAD) by Batch | Batch A: 0.82, Batch B: 1.15 | Batch A: 0.89, Batch B: 0.91 |

| Percent Variance Explained (PVE) by Batch (in PCA) | 35% | 8% |

| PVE by Disease Status (in PCA) | 22% | 41% |

| Mean Correlation of Control Probes within Batch | 0.95 | 0.97 |

| Mean Correlation of Control Probes across Batches | 0.65 | 0.94 |

Mandatory Visualization

Title: Generalized Workflow for Empirical Bayes Batch Correction

Title: Decision Tree for Methylation Batch Correction Method

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Batch Effect Correction Experiments

| Item | Function in Experiment | Example/Note |

|---|---|---|

| R/Bioconductor sva Package | Core software suite containing the ComBat and ComBat-seq functions for empirical Bayes adjustment. | Version 3.48.0 or later. Primary tool for execution. |

| Methylation Array Analysis Package (minfi, sesame) | Preprocessing raw methylation data (IDAT files), performing normalization, and extracting beta/M-value matrices. | minfi is standard for Illumina arrays. Essential for data preparation. |

| RNA-seq Analysis Package (DESeq2, edgeR) | For RNA-seq experiments: normalizing count data post-ComBat-seq and performing differential expression analysis. | ComBat-seq output is fed into these. |

| Reference Methylation Dataset (e.g., Control Probes) | A set of probes not expected to vary biologically (e.g., housekeeping, spike-ins) used to diagnose batch effects. | Used in boxplots/PCA to visually assess correction success. |

| Simulated Data Scripts | Code to generate data with known batch and biological effects to validate the correction pipeline. | Crucial for benchmarking and method development in a thesis. |

| High-Performance Computing (HPC) Cluster Access | Empirical Bayes methods on genome-wide data are computationally intensive and require adequate memory/CPU. | Necessary for processing large cohorts (n > 1000). |

Troubleshooting Guides & FAQs

Q1: I receive an error stating "object 'rgSet' not found" when trying to run preprocessFunnorm. What is the cause and how do I resolve it?

A: This error means that no object named `rgSet` exists in your R session, typically because the IDAT files were never read in or the result was assigned to a different name. The Functional Normalization function (`preprocessFunnorm`) in minfi requires an object of class `RGChannelSet` as input, which is typically generated by the `read.metharray.exp` function. Read your IDAT files first; Noob background correction is then performed within the `preprocessFunnorm` call. The correct workflow is:
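A sketch of that workflow, wrapped in a function so that the object passed to `preprocessFunnorm` is always created first. The wrapper name `run_funnorm` and its arguments are hypothetical, and `idat_dir` must point to a directory containing a sample sheet and the IDAT files.

```r
# Read IDATs and apply Noob + Functional Normalization in one call.
run_funnorm <- function(idat_dir, n_pcs = 2) {
  if (!requireNamespace("minfi", quietly = TRUE)) {
    stop("Package 'minfi' is required for this workflow.")
  }
  # 1. Locate the sample sheet and read the red/green intensities.
  targets <- minfi::read.metharray.sheet(idat_dir)
  rgSet   <- minfi::read.metharray.exp(targets = targets)

  # 2. preprocessFunnorm needs an RGChannelSet, not a processed object.
  stopifnot(methods::is(rgSet, "RGChannelSet"))

  # 3. Noob background and dye-bias correction happen inside this call.
  minfi::preprocessFunnorm(rgSet, nPCs = n_pcs,
                           bgCorr = TRUE, dyeCorr = TRUE)
}

# Calling the function on a directory that does not exist fails early,
# before any normalization is attempted.
missing_dir_result <- try(run_funnorm(tempfile("no_such_dir")), silent = TRUE)
```

Usage would be `mSetFn <- run_funnorm("path/to/dir")`, after which `getBeta(mSetFn)` and `getM(mSetFn)` return the normalized values.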

Q2: After running preprocessFunnorm, my beta value matrix contains many NAs. What went wrong?

A: Excessive NAs usually trace back to low-quality probes or samples rather than to the normalization itself. `preprocessFunnorm` does not filter probes by detection p-value, so failed probes must be identified and removed separately. To diagnose, check detection p-values before normalization:

Q3: How do I choose the optimal number of principal components (nPCs) for Functional Normalization in my experiment?

A: The nPCs parameter controls the number of control probe principal components used to model and remove batch variation. The default is 2. For complex batch structures, increase nPCs. Diagnose using:

Use the number of PCs before the "elbow" point or those explaining >1% variance.
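The elbow and 1% rules can be illustrated on a simulated control-probe matrix. How the control-probe summaries are extracted from an `RGChannelSet` is not shown here; the matrix `ctrl` below is simulated with two underlying technical factors, and the "largest drop" elbow heuristic is one of several possible choices.

```r
set.seed(9)
n_samples <- 30
n_ctrl    <- 40

# Simulated control-probe summaries (samples x controls) driven by
# two latent technical factors plus noise.
latent   <- matrix(rnorm(n_samples * 2), n_samples, 2)
loadings <- matrix(rnorm(2 * n_ctrl, sd = 3), 2, n_ctrl)
ctrl     <- latent %*% loadings +
            matrix(rnorm(n_samples * n_ctrl), n_samples, n_ctrl)

pca <- prcomp(ctrl, center = TRUE, scale. = TRUE)
var_explained <- pca$sdev^2 / sum(pca$sdev^2)

# Rule 1: number of PCs that each explain more than 1% of the variance.
n_above_1pct <- sum(var_explained > 0.01)

# Rule 2: last PC before the largest drop in explained variance.
elbow <- which.max(-diff(var_explained))

# Scree plot (only drawn in interactive sessions).
if (interactive()) {
  plot(var_explained[1:10], type = "b",
       xlab = "Principal component", ylab = "Proportion of variance")
}
```

The two rules can disagree: the 1% rule tends to retain more components than the elbow. Treat both as upper and lower guides and confirm the final `nPCs` with post-normalization diagnostics.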

Q4: I have a large batch effect related to Sentrix position. Will Noob + Funnorm correct this?

A: Largely, yes. Functional Normalization is designed to remove technical variation, which can include Sentrix position and array row/column effects, by regressing out principal components of the control probes. The adjustment is driven by the control probe intensities rather than by sample sheet columns, so keep `Slide` and `Array` in the phenoData (`pData`) of your `rgSet` for diagnostics. Verify after normalization:

Q5: Can I apply Noob background correction separately before Funnorm?

A: It is not recommended. The preprocessFunnorm function has integrated bgCorr and dyeCorr parameters (both TRUE by default) which apply the Noob method optimally within the normalization pipeline. Performing Noob separately (e.g., using preprocessNoob) and then feeding the result to Funnorm may lead to suboptimal correction because Funnorm expects raw intensities.

Experimental Protocols

Protocol 1: Standard Noob + Functional Normalization Pipeline for Methylation Array Data

- Load Libraries and Data: `library(minfi); library(meffil)`

- Read Sample Sheet & IDATs: `targets <- read.metharray.sheet("path/to/dir"); rgSet <- read.metharray.exp(targets = targets)`

- Quality Control: Generate QC report with `qcReport(rgSet, sampNames = pData(rgSet)$Sample_Name, pdf = "qc_report.pdf")`.

- Apply Functional Normalization with Noob: `mSetFn <- preprocessFunnorm(rgSet, nPCs = 2, bgCorr = TRUE, dyeCorr = TRUE, verbose = TRUE)`. This single command performs Noob background and dye-bias correction, followed by Functional Normalization using control probes.

- Extract Normalized Quantities: Obtain beta values with `beta_matrix <- getBeta(mSetFn)`. Obtain M-values for differential analysis with `M_matrix <- getM(mSetFn)`.

- Post-Normalization QC: Plot density of beta values (`densityPlot(beta_matrix)`) and perform PCA to check for residual batch effects.

Protocol 2: Batch Effect Diagnosis Pre- and Post-Funnorm

This protocol is crucial for thesis validation of batch correction efficacy.

- Define Batch Covariates: Ensure metadata includes technical factors (e.g., `Batch`, `Slide`, `Array`, `Processing_Date`) and biological factors (e.g., `Disease_Status`, `Age`).

- Run Principal Variance Component Analysis (PVCA):

- Repeat PVCA on Normalized Data: Generate beta matrix from `mSetFn` and repeat step 2.

- Compare Results: Successful normalization will drastically reduce the variance proportion attributed to `Batch` and `Slide` in the PVCA plot while preserving `Group` variance.
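The before/after comparison can be approximated in base R. Note that this is a simplified stand-in, not PVCA itself: PVCA fits mixed models to the principal components, whereas the sketch below uses ordinary linear models and sequential sums of squares, weighted by the variance each component explains. The function name `variance_shares`, the simulated data, and the per-batch centring used in place of real normalization are assumptions.

```r
# Weighted share of variance attributable to each factor across the top PCs.
variance_shares <- function(mat, meta, factors, var_threshold = 0.6) {
  pca <- prcomp(t(mat), center = TRUE)
  w   <- pca$sdev^2 / sum(pca$sdev^2)
  k   <- which(cumsum(w) >= var_threshold)[1]   # PCs covering the threshold
  form <- reformulate(factors, response = "pc")
  parts <- sapply(seq_len(k), function(i) {
    tab <- anova(lm(form, data = cbind(meta, pc = pca$x[, i])))
    ss  <- tab[["Sum Sq"]]
    names(ss) <- rownames(tab)
    ss / sum(ss)                                # per-PC share by term
  })
  out <- parts %*% w[seq_len(k)] / sum(w[seq_len(k)])
  setNames(as.vector(out), rownames(parts))
}

set.seed(13)
meta <- data.frame(Batch = factor(rep(c("b1", "b2"), each = 8)),
                   Group = factor(rep(c("control", "case"), times = 8)))
mat <- matrix(rnorm(400 * 16), nrow = 400)
mat[1:150, meta$Batch == "b2"]   <- mat[1:150, meta$Batch == "b2"] + 2
mat[151:200, meta$Group == "case"] <- mat[151:200, meta$Group == "case"] + 1

before <- variance_shares(mat, meta, c("Batch", "Group"))

# Crude stand-in for normalization: remove per-batch probe means.
mat_centered <- mat
for (b in levels(meta$Batch)) {
  idx <- meta$Batch == b
  mat_centered[, idx] <- mat[, idx] - rowMeans(mat[, idx])
}
after <- variance_shares(mat_centered, meta, c("Batch", "Group"))
```

Because the sums of squares are sequential, the order of `factors` matters when batch and group are not balanced. For a formal analysis, the Bioconductor `pvca` package implements the mixed-model version.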

Data Presentation

Table 1: Comparison of Preprocessing Methods on Benchmark Datasets (Simulated)

| Method (minfi) | Median Probe SD (Beta Values) | % Variance Explained by Known Batch (PVCA) | Computational Time (min, 450k x 12 samples) |

|---|---|---|---|

| Raw (no correction) | 0.125 | 35.2% | 0.5 |

| Noob only | 0.098 | 28.7% | 2.1 |

| Noob + Funnorm (nPCs=2) | 0.081 | 8.5% | 6.3 |

| Noob + SWAN | 0.089 | 18.3% | 4.8 |

| Noob + BMIQ | 0.085 | 22.1% | 8.7 |

Note: SD = Standard Deviation. Lower values indicate better reduction of technical noise. Data simulated from GSE84727.

Table 2: Essential Control Probe Types Used in Functional Normalization

| Control Probe Type | Number on EPIC v1.0 | Primary Function in Funnorm |

|---|---|---|

| Normalization (I) Red | 184 | Correct for dye-bias and batch effects |

| Normalization (I) Grn | 184 | Correct for dye-bias and batch effects |

| Staining | 4 | Assess staining efficiency |

| Extension | 4 | Assess nucleotide extension efficiency |

| Hybridization | 4 | Assess hybridization efficiency |

| Target Removal | 4 | Assess efficiency of target removal |

| Non-polymorphic | 12 | Serve as additional reference points |

| Total Used by Funnorm | ~400 | PCA-derived adjustment factors |

Mandatory Visualizations

Noob + Funnorm Workflow

Funnorm PCA Batch Modeling Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Noob + Funnorm Pipeline |

|---|---|

| Illumina MethylationEPIC v2.0 BeadChip | Array platform containing >935,000 methylation sites and ~400 control probes essential for Funnorm. |

| minfi R Package (v1.46.0+) | Primary software toolkit implementing preprocessFunnorm and preprocessNoob functions. |

| RGChannelSet object | In-memory data class storing raw red/green channel intensities from IDAT files, required input. |

| Control Probe Profiles | Built-in reagent data; intensities from these non-polymorphic probes are used by Funnorm to model batch. |

| meffil Package | Alternative package offering enhanced implementations of normalization, useful for cross-validation. |

| sesame Package | Next-gen preprocessing suite; can be used for comparison or alternative normalization methods. |

| Reference Houseman's algorithm | For cell type composition estimation, often applied post-normalization to adjust for cellular heterogeneity. |

Leveraging Reference Datasets and BMIQ for Probe-Type Normalization

Technical Support Center

Troubleshooting Guides & FAQs

Q1: After applying BMIQ normalization using our in-house reference, we observe a shift in the beta-value distribution for Infinium I probes. What could be the cause and how do we resolve it?

A: This typically indicates a mismatch between the underlying probe-type distributions of your sample and the reference dataset. First, generate a density plot of raw beta values for Type I and Type II probes separately for both datasets. If the distributions are fundamentally different (e.g., bimodal vs. unimodal), your reference may be unsuitable. Solution: Use a larger, publicly available reference dataset (e.g., from GEO, such as GSE105018) that matches your tissue type. Ensure the reference contains enough samples (n>50) to robustly estimate the distributions.

Q2: During the BMIQ normalization process, the script fails with the error: "Error in while (d1 > K[1]) { : missing value where TRUE/FALSE needed". What does this mean?

A: This error usually occurs when the function encounters NA or NaN values in your input beta value matrix. Troubleshooting Steps:

- Check for and remove any probes or samples with all `NA` values.

- Ensure your data import correctly parsed the decimal points.

- Explicitly set non-finite values to `NA` before normalization: `beta_matrix[!is.finite(beta_matrix)] <- NA`.

- Use the `na.omit` argument if supported by your BMIQ package implementation, or impute missing values using the `impute.knn` function from the `impute` package as a pre-processing step.

Q3: How do we validate that BMIQ normalization has successfully corrected for the probe-type bias?

A: Perform these validation checks:

- Visual Inspection: Plot the density distributions of beta values for Infinium I and Infinium II probes before and after normalization. Successful correction will show the two distributions aligned.

- Quantitative Check: Calculate the mean difference in beta values between probe types for a set of known technical replicate samples. This difference should be significantly reduced post-BMIQ.

- PCA Plot: Run PCA on negative control probes or housekeeping gene probes before and after. Batch effects linked to probe type should diminish post-normalization.
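The quantitative check can be sketched as a comparison of peak positions between probe types. Everything below is simulated: Type II betas are compressed toward 0.5 to mimic probe-type bias, and the "normalized" values are obtained by inverting that known distortion, which stands in for BMIQ output and is not the BMIQ algorithm. The helper name `type_gap` is hypothetical.

```r
set.seed(21)
n <- 2000
design <- rep(c(1, 2), each = n)           # 1 = Infinium I, 2 = Infinium II

# Bimodal "true" methylation for each probe type.
true_I  <- c(rbeta(n / 2, 2, 30), rbeta(n / 2, 30, 2))
true_II <- c(rbeta(n / 2, 2, 30), rbeta(n / 2, 30, 2))

# Type II probes show a compressed dynamic range in the raw data.
beta_raw <- c(true_I, 0.1 + 0.8 * true_II)

# Difference in peak location (median) between probe types, computed
# separately for the unmethylated and methylated modes.
type_gap <- function(beta, design) {
  hi <- beta > 0.5
  c(unmeth = median(beta[design == 2 & !hi]) - median(beta[design == 1 & !hi]),
    meth   = median(beta[design == 2 &  hi]) - median(beta[design == 1 &  hi]))
}

gap_raw <- type_gap(beta_raw, design)

# Stand-in for normalized data: undo the simulated compression.
beta_norm <- beta_raw
beta_norm[design == 2] <- (beta_raw[design == 2] - 0.1) / 0.8
gap_norm <- type_gap(beta_norm, design)
```

With real data, `beta_raw` and `beta_norm` would be one sample's values before and after BMIQ, and `design` would come from the array annotation. Gaps that shrink toward zero for both modes indicate that the probe-type bias has been reduced.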

Q4: Can we combine BMIQ-normalized data from different experimental batches or array versions (e.g., EPIC v1 & v2)?

A: BMIQ corrects within-array probe-type bias. It does not correct for between-batch or between-array-version effects. Protocol: You must normalize each batch separately to its own appropriate reference dataset. Afterward, apply an additional batch effect correction method (e.g., ComBat from the sva package or limma's removeBatchEffect), treating "batch" or "array version" as a known covariate. Always validate with PCA plots post-correction.

Key Experimental Protocol: BMIQ Normalization Using a Public Reference Dataset

Objective: To normalize raw methylation beta values for probe-type design bias using the BMIQ algorithm and a robust external reference.

Materials & Software: R (v4.0+), minfi package, wateRmelon or BMIQ package, a large public methylation dataset (e.g., from GEO).

Methodology:

- Data Preparation: Load your IDAT files using

minfi::read.metharray.exp. Generate a raw beta value matrix (getBeta). Filter out probes with detection p-value > 0.01 in any sample, cross-reactive probes, and SNP-related probes. - Reference Selection: Download a suitable reference dataset (e.g., whole blood samples for blood studies). Process it identically to your dataset to obtain a clean beta matrix.

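- Normalization Execution: Use the BMIQ function (from the wateRmelon package) with the plots=TRUE argument to generate diagnostic plots. BMIQ runs on each sample separately and rescales that sample's Type II probe values toward its own Type I distribution, so the public data is not passed to the function as an argument; it serves as the reference distribution against which the normalized samples are compared in the quality-control step.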

- Quality Control: Examine the generated plots. The density of normalized sample probes should overlay the reference density. Calculate the standard deviation of beta values per probe type; they should be comparable post-normalization.
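
The probe filtering in the Data Preparation step can be sketched as follows. This is an illustrative base R example on simulated matrices; in practice the detection p-values would come from `minfi::detectionP`, and the two exclusion lists (`cross_reactive`, `snp_probes`) are hypothetical placeholders for published probe lists.

```r
set.seed(2)
probe_ids <- paste0("cg", sprintf("%08d", 1:500))
sample_ids <- paste0("S", 1:6)

# Hypothetical detection p-value matrix: 500 probes x 6 samples.
det_p <- matrix(runif(500 * 6, 0, 0.005), nrow = 500,
                dimnames = list(probe_ids, sample_ids))
det_p[c(3, 42, 77), 2] <- 0.2   # three probes fail detection in sample S2

# Matching beta matrix (simulated).
beta <- matrix(runif(500 * 6), nrow = 500,
               dimnames = list(probe_ids, sample_ids))

# Hypothetical exclusion lists (cross-reactive and SNP-associated probes).
cross_reactive <- probe_ids[c(10, 11)]
snp_probes <- probe_ids[c(42, 100)]

# A probe is dropped if it fails detection (p > 0.01) in ANY sample,
# or if it appears on either exclusion list.
failed <- rownames(det_p)[rowSums(det_p > 0.01) > 0]
drop_ids <- unique(c(failed, cross_reactive, snp_probes))
beta_filtered <- beta[!rownames(beta) %in% drop_ids, , drop = FALSE]

cat("Probes kept:", nrow(beta_filtered), "of", nrow(beta), "\n")
```

Filtering on "any sample" is strict; with large cohorts some pipelines relax this to a fraction of samples, which would only change the `rowSums(...) > 0` condition.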

Research Reagent Solutions & Essential Materials

| Item | Function in Probe-Type Normalization |
|---|---|
| Infinium MethylationEPIC v2.0 BeadChip | The latest array platform; provides the raw signal data requiring normalization for >935,000 CpG sites. |
| IDAT Files | Raw intensity data files from the array scanner; the primary input for all preprocessing. |
| Minimally-Preprocessed Public Methylation Dataset (e.g., from GEO) | Serves as the stable, population-level reference for defining the target distribution for BMIQ. |
| R wateRmelon Package | Provides an optimized, maintained implementation of the BMIQ algorithm for high-throughput processing. |
| R minfi Package | Essential for robustly reading IDAT files, performing basic QC, and generating initial beta value matrices. |
| List of Cross-Reactive Probes (Chen et al.) | A reagent for probe filtering; removes unreliable probes that map to multiple genomic locations. |
| List of SNP-associated Probes | A reagent for probe filtering; removes probes where an underlying SNP may confound methylation measurement. |

Table 1: Comparison of Normalization Performance Metrics (Simulated Data)

| Method | Mean Δβ (Type I vs II) | SD Ratio (Post/Pre) | Runtime (min, n=100) |
|---|---|---|---|
| Raw Data | 0.152 | 1.00 | - |
| BMIQ (with Reference) | 0.008 | 0.95 | 12 |
| Peak-Based Correction | 0.035 | 0.98 | 8 |
| Quantile Normalization | 0.101 | 0.89 | 2 |

Table 2: Recommended Public Reference Datasets for BMIQ

| Tissue Type | GEO Accession | Sample Count (n) | Platform | Recommended For |
|---|---|---|---|---|
| Whole Blood | GSE105018 | 622 | EPIC | Blood, PBMC studies |
| Placenta | GSE195114 | 473 | 450K/EPIC | Developmental studies |
| Various (TCGA) | Multiple | >10,000 | 450K | Pan-cancer analysis |

Diagrams

Diagram 1: BMIQ Normalization Workflow

Diagram 2: Probe-Type Bias Correction Logic

Technical Support Center: Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: My pipeline integrates data from multiple studies (GEO datasets). After combining IDATs and normalizing, I still see strong batch clustering in my PCA. Which correction method should I apply first: ComBat or limma's removeBatchEffect?

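A1: The choice depends on your data structure and on what you plan to do with the corrected values. Use ComBat (from the sva package) when you have several known batches, particularly small ones: it adjusts both the mean and the variance of each probe per batch and stabilizes those estimates with empirical Bayes shrinkage. It is powerful but makes stronger distributional assumptions. Use limma's removeBatchEffect for a simpler adjustment of batch means, for example before PCA, clustering, or heatmaps. If the downstream analysis is itself a limma linear model, the limma documentation recommends including batch as a covariate in the design matrix rather than testing on removeBatchEffect output. For methylation data, ComBat is frequently cited, but always validate by checking PCA plots post-correction.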
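
To make the "linear adjustment" idea concrete, here is a minimal base R sketch of a mean-only batch adjustment that protects a biological factor. It illustrates the general regression approach on simulated M-values and is not limma's implementation; all object names (`m`, `batch`, `group`, `m_adj`) are made up for the example.

```r
set.seed(3)
n <- 24
batch <- factor(rep(c("A", "B", "C"), each = 8))
group <- factor(rep(c("control", "case"), times = 12))

# Simulated M-values: 300 probes, an additive shift per batch,
# and a true group effect on the first 30 probes.
m <- matrix(rnorm(300 * n), nrow = 300)
batch_shift <- c(A = 0, B = 1.5, C = -1)
m <- sweep(m, 2, batch_shift[as.character(batch)], "+")
m[1:30, group == "case"] <- m[1:30, group == "case"] + 2

# Design: intercept + group (protected) and sum-to-zero batch columns.
contrasts(batch) <- contr.sum(nlevels(batch))
design_bio <- model.matrix(~ group)
design_batch <- model.matrix(~ batch)[, -1, drop = FALSE]
X <- cbind(design_bio, design_batch)

# Least-squares fit for all probes at once (coefficients x probes),
# then subtract only the fitted batch component.
coefs <- solve(crossprod(X), crossprod(X, t(m)))
batch_coefs <- coefs[-seq_len(ncol(design_bio)), , drop = FALSE]
m_adj <- m - t(design_batch %*% batch_coefs)

# Per-batch means of the first null probe before and after adjustment.
print(round(rbind(before = tapply(m[31, ], batch, mean),
                  after  = tapply(m_adj[31, ], batch, mean)), 2))
```

Because the group term is estimated jointly with batch, the group difference in the first 30 probes is retained while the batch means are aligned. This only works when group and batch are not fully confounded.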

Q2: After applying batch correction, my negative control probes (or housekeeping gene regions) show unexpected shifts in beta value distribution. Is this normal?

A2: No, this is a critical red flag. Batch correction should not drastically alter the expected methylation levels of known stable control regions. This indicates potential over-correction, where the algorithm is removing biological signal. Re-run the correction, ensuring you have correctly specified the model.matrix for biological variables of interest (e.g., disease state) to protect them. Compare beta value distributions pre- and post-correction for these controls.

Q3: I'm using minfi and ChAMP for my pipeline. At what exact step should I perform batch correction: after functional normalization but before DMP detection, or after?

A3: Best practice is to perform batch correction after normalization and quality control but before any differential methylation analysis. In a ChAMP pipeline, the order is: Import IDATs -> Quality Check (champ.QC) -> Normalization (champ.norm) -> Batch Correction (champ.runCombat) -> Differential Methylation Analysis (champ.DMP). This ensures normalized data is corrected for technical variance before statistical testing.

Q4: How do I validate that my batch correction was successful beyond visual PCA inspection?

A4: Implement quantitative and biological validation:

- Silhouette Width: Calculate the average silhouette width for batch labels before and after correction. A successful correction will show a significant decrease.

- PVCA (Principal Variance Component Analysis): Quantify the proportion of variance attributable to batch versus biological factors pre- and post-correction.

- Positive Control Recovery: Ensure known differentially methylated regions (e.g., between cell types) remain significant post-correction.

- Negative Control Stability: As in Q2, confirm stable regions are unchanged.
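
The silhouette check from the list above can be sketched without extra packages. The example below uses simulated PCA scores and a hypothetical helper `mean_silhouette`; the `cluster` package offers a `silhouette` function that could be used instead. Values near 1 mean samples sit tightly with their own batch, and values near 0 mean batches are well mixed.

```r
set.seed(4)
batch <- rep(c(1, 2), each = 10)

# Simulated PCA scores (2 PCs). Before correction, batch 2 is offset on PC1.
pcs_before <- cbind(rnorm(20) + 4 * (batch == 2), rnorm(20))
# After a hypothetical correction the offset is gone.
pcs_after <- cbind(rnorm(20), rnorm(20))

# Average silhouette width of samples with respect to their batch labels.
mean_silhouette <- function(scores, labels) {
  d <- as.matrix(dist(scores))
  labs <- unique(labels)
  s <- vapply(seq_along(labels), function(i) {
    own <- labels == labels[i]
    own[i] <- FALSE
    a <- mean(d[i, own])                       # mean distance within own batch
    b <- min(vapply(setdiff(labs, labels[i]),  # mean distance to nearest other batch
                    function(l) mean(d[i, labels == l]), numeric(1)))
    (b - a) / max(a, b)
  }, numeric(1))
  mean(s)
}

sil_before <- mean_silhouette(pcs_before, batch)
sil_after <- mean_silhouette(pcs_after, batch)
cat("Batch silhouette before:", round(sil_before, 2),
    "after:", round(sil_after, 2), "\n")
```

Running the same function with phenotype labels instead of batch labels gives the complementary check: that silhouette should not collapse after correction.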

Troubleshooting Guide

Issue: Error in preprocessQuantile or functional normalization due to "subset of samples appears to have been processed on a different array type."

Root Cause: IDAT files from different Illumina methylation array platforms (e.g., 450K vs. EPIC) or versions (EPIC vs. EPICv2) have been inadvertently mixed in the same analysis directory.

Solution:

- Verify array type for each IDAT using minfi::read.metharray.sheet and minfi::getManifest.

- Separate samples by array type. They cannot be normalized together using standard methods.

- If combining platforms is necessary for the analysis, you must use a cross-platform normalization or bridging method, such as:

  - minfi's preprocessQuantile with careful attention to common probes only.

  - The SeSAMe pipeline with its arrayType parameter.

  - Third-party tools like MM285 for harmonization.

- Perform batch correction after this specialized normalization, treating "platform" as the primary batch variable.

Issue: ComBat fails with error: "Error in while (change > conv) { : missing value where TRUE/FALSE needed"

Root Cause: This often occurs due to complete separation or near-zero variation in your data for some probes across the specified batches and biological groups. It can also be caused by missing values (NAs) in the data matrix.

Solution:

- Filter Low-Variance Probes: Remove probes with near-constant beta values across all samples (e.g., standard deviation < 0.01) before running ComBat.

- Check for NAs: Ensure your methylation beta value matrix contains no NA values. Impute or remove offending probes.

- Simplify the Model: If you have many batches or complex biological covariates, try running ComBat with a simpler model (e.g., only specifying the batch variable) to see if the error persists.
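
The first two fixes can be combined into a short pre-ComBat cleanup. The sketch below uses a simulated beta matrix and removes (rather than imputes) probes with missing values; the 0.01 threshold is the one suggested above, and the object names are illustrative.

```r
set.seed(5)
beta <- matrix(runif(200 * 8, 0.1, 0.9), nrow = 200,
               dimnames = list(paste0("probe", 1:200), paste0("S", 1:8)))
beta[1:5, ] <- 0.02   # five near-constant probes
beta[10, 3] <- NA     # one probe with a missing value

# Flag probes that contain any NA.
has_na <- rowSums(is.na(beta)) > 0

# Standard deviation per probe across all samples.
probe_sd <- apply(beta, 1, sd, na.rm = TRUE)

# Keep probes that are complete and show at least minimal variation.
keep <- !has_na & probe_sd >= 0.01
beta_clean <- beta[keep, , drop = FALSE]

cat("Removed", sum(!keep), "probes;", nrow(beta_clean), "remain\n")
```

If the error persists after this cleanup, it can be worth repeating the standard deviation check within each batch, since a probe can vary overall yet be constant inside one small batch.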

Experimental Protocols

Protocol 1: Integrated Pipeline with ComBat for Methylation Data

Title: End-to-End Methylation Analysis with Batch Correction.

Objective: Process raw IDAT files to batch-corrected beta values suitable for differential methylation analysis.

Materials: See "Scientist's Toolkit" below.

Software: R (≥4.0), minfi, sva, limma, ChAMP (optional).

Methodology:

- IDAT Import & Annotation: Use minfi::read.metharray.exp to load IDAT files and associated sample sheet. Annotate with minfi::getAnnotation.

- Quality Control & Filtering: Generate QC reports (minfi::qcReport). Filter probes with detection p-value > 0.01 in any sample, cross-reactive probes, and probes on sex chromosomes (unless relevant).

- Normalization: Apply Functional Normalization (minfi::preprocessFunnorm) to remove unwanted technical variation using control probes.

- Extract Beta Values: Obtain methylation beta values with minfi::getBeta.

- Batch Correction with ComBat: Use the sva package. First, create a model matrix for biological covariates (e.g., mod <- model.matrix(~ Disease_Status, data=pData)). Then run ComBat on the methylation matrix with the batch labels and mod, storing the result as beta_corrected.

- Validation: Perform PCA on beta_corrected. Calculate silhouette scores and PVCA to quantify batch effect reduction.
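
A hedged sketch of the batch-correction step on simulated data follows. It assumes a sample table with columns `Batch` and `Disease_Status` (the table is called `pd` here to avoid masking the `pData` accessor), and it runs ComBat on M-values and converts back to betas, which is a common choice rather than something the protocol above prescribes. The call is guarded so that the script still runs when sva is not installed.

```r
set.seed(6)
n_probes <- 200
pd <- data.frame(
  Batch = factor(rep(c("run1", "run2"), each = 6)),
  Disease_Status = factor(rep(c("control", "case"), times = 6))
)

# Simulated data with an additive batch shift on the M-value scale.
m_true <- matrix(rnorm(n_probes * nrow(pd)), nrow = n_probes,
                 dimnames = list(paste0("cg", seq_len(n_probes)),
                                 paste0("S", seq_len(nrow(pd)))))
m_obs <- sweep(m_true, 2, ifelse(pd$Batch == "run2", 1, 0), "+")
beta <- 2^m_obs / (1 + 2^m_obs)

# Convert betas to M-values, which are closer to Gaussian than betas.
m_vals <- log2(beta / (1 - beta))

# Biological covariates to protect during correction.
mod <- model.matrix(~ Disease_Status, data = pd)

if (requireNamespace("sva", quietly = TRUE)) {
  m_corrected <- sva::ComBat(dat = m_vals, batch = pd$Batch, mod = mod)
  beta_corrected <- 2^m_corrected / (1 + 2^m_corrected)  # back to beta scale
} else {
  message("sva is not installed; skipping ComBat.")
  beta_corrected <- NULL
}
```

With real data, `m_vals` would be derived from the `minfi::getBeta` output of the previous step, after the probe filtering described in the troubleshooting section.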

Protocol 2: Quantitative Validation Using Principal Variance Component Analysis (PVCA)

Title: PVCA for Batch Effect Quantification.

Objective: Quantitatively assess the proportion of variance explained by batch vs. biological factors before and after correction.

Materials: Methylation beta value matrix, sample metadata table.

Software: R, pvca package (or custom implementation using lme4).

Methodology:

- Prepare Data: Use the filtered, normalized beta matrix (pre- and post-correction).

- Perform PCA: Run PCA on the matrix and retain the top k principal components (PCs) that together explain >95% of the variance.

- Fit Variance Model: For each PC score (response variable), fit a linear mixed model in which batch and the key biological factors (e.g., phenotype, tissue) are random effects, so that every term receives its own variance component.

- Calculate Variance Fractions: Extract the variance components attributed to each term in the model for all PCs.

- Weight and Aggregate: Weight each PC's variance components by the proportion of total variance it explains. Sum across all PCs to get the overall Proportion of Variance Explained by Batch, Biological Factor, Residual, etc.

- Compare: Present results in a table and bar plot comparing pre- and post-correction variance proportions.
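
The weighting and aggregation logic of this protocol can be sketched in base R. Note the deliberate simplification: instead of mixed-model variance components (which would need lme4 or the pvca package), the example uses each term's share of the ANOVA sum of squares per PC. It therefore approximates the PVCA idea and will not reproduce pvca output exactly. Data and names are simulated and illustrative.

```r
set.seed(7)
n <- 24
meta <- data.frame(
  batch = factor(rep(c("d1", "d2", "d3"), each = 8)),
  phenotype = factor(rep(c("control", "case"), times = 12))
)

# Simulated matrix (400 probes x 24 samples) with a strong batch shift.
x <- matrix(rnorm(400 * n), nrow = 400)
x <- sweep(x, 2, c(d1 = 0, d2 = 2, d3 = -2)[as.character(meta$batch)], "+")

# PCA with samples as rows; keep the PCs that reach 95% cumulative variance.
pca <- prcomp(t(x), center = TRUE, scale. = FALSE)
var_prop <- pca$sdev^2 / sum(pca$sdev^2)
k <- which(cumsum(var_prop) >= 0.95)[1]

# For each retained PC, the share of the sum of squares per model term.
# (Simplification: ANOVA shares stand in for mixed-model variance components.)
ss_share <- sapply(seq_len(k), function(j) {
  fit <- anova(lm(pca$x[, j] ~ batch + phenotype, data = meta))
  setNames(fit[["Sum Sq"]] / sum(fit[["Sum Sq"]]), rownames(fit))
})

# Weight each PC by the variance it explains, then sum across PCs.
w <- var_prop[seq_len(k)] / sum(var_prop[seq_len(k)])
pvca_like <- drop(ss_share %*% w)
print(round(pvca_like, 3))
```

Running this once on the pre-correction matrix and once on the post-correction matrix gives the two columns of a comparison table like the one in the Data Presentation section.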

Data Presentation

Table 1: Comparison of Batch Correction Methods for Methylation Arrays

| Method (Package) | Underlying Model | Key Strength | Key Limitation | Best For |
|---|---|---|---|---|
| ComBat (sva) | Empirical Bayes, linear model | Robust for many batches; preserves biological signal via model. | Assumes parametric prior by default; can over-correct. | Multi-study integrations with clear batch labels. |
| removeBatchEffect (limma) | Linear regression | Simple, fast, transparent; good for protecting a primary factor. | Purely linear adjustment; may not capture complex effects. | Adjusting for known technical covariates (e.g., slide, row). |
| Harmony (harmony) | Iterative clustering & integration | Integrates samples in PCA space through iterative soft clustering. | Requires a batch variable and returns corrected embeddings rather than corrected beta values; computationally intensive; results can be sensitive to parameters. | Complex integrations where batch is confounded with biology. |
| ARSyN (NOISeq) | ANOVA model on dimensions | Specifically designed for sequential factors (e.g., time, order). | Less commonly used for methylation; requires specific design. | Removing "run order" or "processing date" effects. |

Table 2: PVCA Results Before and After ComBat Correction (Example Data)

| Variance Component | Proportion of Variance (Pre-Correction) | Proportion of Variance (Post-Correction) |
|---|---|---|
| Batch (Processing Date) | 35% | 8% |
| Disease Status | 22% | 41% |
| Patient Age | 10% | 12% |
| Residual (Unexplained) | 33% | 39% |

Mandatory Visualization

Title: Standardized Methylation Pipeline with Batch Correction

Title: PVCA Validation Workflow

The Scientist's Toolkit

| Research Reagent / Tool | Function in Pipeline |
|---|---|
| Illumina Methylation BeadChip | Platform for genome-wide profiling of DNA methylation at single-CpG-site resolution. |
| IDAT Files | Raw data files containing intensity information for each probe on the array. |
| minfi R/Bioconductor Package | Primary toolkit for importing, QC, normalization, and preprocessing of IDAT files. |
| sva R/Bioconductor Package | Contains the ComBat function for empirical Bayes batch effect adjustment. |
| ChAMP R/Bioconductor Package | A comprehensive all-in-one analysis pipeline wrapper that includes champ.runCombat. |
| Functional Normalization (preprocessFunnorm) | A normalization method using control probes to adjust for technical variation, ideal for batch correction input. |
| SeSAMe R/Bioconductor Package | An alternative pipeline offering robust preprocessing and array-type handling. |
| Housekeeping / Control Probe Regions | Genomic regions known to be stably methylated/unmethylated across tissues; used as negative controls for correction validation. |

Solving Common Pitfalls: Optimizing Batch Correction for Complex Study Designs and Low Signal

Batch effect correction in methylation array research is a critical step for ensuring data integrity. However, over-correction—the unintentional removal of true biological signal—poses a significant risk. This guide addresses common issues and provides solutions to preserve biological variation of interest during normalization and correction procedures.