Batch Effect Correction in Epigenomic Data Analysis: A Complete Guide for Robust Research

This comprehensive guide for researchers and drug development professionals explores the critical challenge of batch effect correction in epigenomic data analysis.

Batch Effect Correction in Epigenomic Data Analysis: A Complete Guide for Robust Research

Abstract

This comprehensive guide for researchers and drug development professionals explores the critical challenge of batch effect correction in epigenomic data analysis. It covers the foundational understanding of technical noise in assays like ChIP-seq and ATAC-seq, reviews methodological approaches from traditional algorithms to modern AI-driven tools, provides practical troubleshooting and optimization strategies, and outlines frameworks for rigorous validation and comparative analysis. The article synthesizes current best practices to enhance data reproducibility, ensure robust biological discovery, and support reliable translation into clinical and pharmaceutical applications.

Foundations of Batch Effects: Unmasking Technical Noise in Epigenomic Studies

Technical Support Center: Troubleshooting Batch Effects in Epigenomic Analysis

This support center provides guidance for addressing common batch effect issues, framed within a thesis on batch effect correction in epigenomic data analysis. The goal is to empower researchers to distinguish technical artifacts from true biological signals.

Frequently Asked Questions (FAQs)

Q1: My PCA plot shows clear clustering by sample processing date, not by treatment group. Is this a batch effect, and how do I confirm it? A: Yes, this is a classic signature of a batch effect. To confirm, perform the following diagnostic steps:

- Correlate Technical Covariates: Calculate the correlation between principal components (PCs) that drive separation (e.g., PC1) and known technical variables (sequencing run, library prep date, technician ID, sample storage time).

- Create a Design Matrix: Statistically test the association using a linear model (e.g.,

limma::duplicateCorrelationin R) where the technical variable is the predictor and the PC score is the outcome. A significant p-value (< 0.05) confirms a batch effect. - Visualize with PVCA: Conduct a Principal Variance Component Analysis (PVCA) to quantify the proportion of total variance attributable to your batch variable versus your biological variable of interest.

Q2: After correcting for batch effects using ComBat, my biological signal seems weaker. Did the correction remove real biology? A: This is a risk of over-correction. ComBat and similar methods rely on accurate modeling of the batch variable. If your biological groups are perfectly confounded with batches (e.g., all Control samples were processed in Batch 1, all Treated in Batch 2), the algorithm cannot distinguish between the two. To troubleshoot:

- Re-examine Design: Check for complete confoundment. If present, the experiment is fundamentally flawed for batch correction, and results must be interpreted with extreme caution.

- Use Negative Controls: If you have control probes or housekeeping genes expected to be stable across conditions, ensure they remain stable post-correction. Their drastic change suggests over-correction.

- Try a Less Aggressive Method: Switch to a method that uses a linear model framework (e.g.,

limma::removeBatchEffect) where you can explicitly preserve your condition variable while regressing out the batch variable. Always compare pre- and post-correction plots.

Q3: I have a complex experimental design with multiple overlapping batch variables (e.g., sequencing lane, extraction kit version, and patient cohort). How do I choose which to correct for? A: Prioritize correction based on the variance explained.

- Perform PVCA: This will rank batch factors by their contribution to overall variance.

- Correct Sequentially: Correct for the largest source of technical variance first, then re-evaluate. Often, major batch effects can mask smaller ones.

- Model Simultaneously: Use a linear model that includes all relevant batch factors as covariates. Tools like

svacan estimate surrogate variables for unmodeled factors.

Q4: For my DNA methylation (Illumina EPIC array) data, what are the best practices for batch correction at the beta-value versus M-value level? A: Current consensus recommends:

- Perform correction on M-values: M-values (log2 ratio of methylated to unmethylated intensities) exhibit more homoscedastic variance and are better suited for parametric statistical models used in batch correction algorithms.

- Apply correction after normalization but before differential analysis: The standard workflow is: i) Raw data import & quality control, ii) Intra-array normalization (e.g., BMIQ, Noob), iii) Inter-array batch correction (e.g., ComBat, RUVm), iv) Statistical analysis for DMPs/DMRs.

- Document: Always note the stage (beta/M), specific values (e.g.,

ComBat(sva)), and parameters (e.g., empirical Bayes) used in your methods section.

Diagnostic Protocol: Quantifying Batch Effect Severity

Objective: To quantitatively assess the impact of a suspected batch variable (e.g., "Processing Week") on your epigenomic dataset before and after correction.

Materials:

- Normalized matrix of epigenomic data (e.g., M-values for methylation, normalized counts for ChIP-seq/ATAC-seq).

- Metadata table with

Sample_ID,Batch_Variable, andCondition_Variable.

Methodology:

- Calculate Principal Components (PCs): Perform PCA on your normalized data matrix.

- Extract PC Variance: Record the percentage of total variance explained by the first 5 PCs.

- Associate PCs with Batch: For each of the first 5 PCs, run an Analysis of Variance (ANOVA) with the

Batch_Variableas the independent variable and the PC loadings as the dependent variable. - Calculate R²: For the ANOVA on the PC that most separates batches (often PC1), calculate the R-squared value. This represents the proportion of variance in that PC explained by the batch.

- Compute Overall Metric: A simple Batch Effect Score can be:

(Variance explained by PC1) * (R² of PC1 ~ Batch). A higher score indicates a more severe, batch-driven global structure.

Interpretation Table:

| Metric | Low Severity (< 10%) | Moderate Severity (10-30%) | High Severity (> 30%) | Action |

|---|---|---|---|---|

| % Var (PC1) explained by Batch (R²) | < 0.1 | 0.1 - 0.3 | > 0.3 | Correction is Critical |

| Visual Clustering by Batch in PC1/PC2 | None | Mild | Strong | Correction is Critical |

| Condition Signal vs. Batch Signal (PVCA) | Condition > Batch | Condition ≈ Batch | Batch > Condition | Re-assay design if possible |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Management |

|---|---|

| Reference Epigenome Standards | Commercially available control DNA (e.g., from Horizon Discovery or CeGaT) with known methylation states. Spiked into experiments across batches to monitor technical performance and anchor corrections. |

| Universal Methylated & Unmethylated DNA | Used as absolute controls for bisulfite conversion efficiency assays, a major source of batch variation in DNA methylation workflows. |

| Pooled Library Spike-Ins (e.g., SNAP-ChIP) | For ChIP-seq, adding a constant amount of chromatin from a different species (e.g., Drosophila) to each sample allows for normalization based on spike-in read counts, controlling for batch differences in IP efficiency and sequencing depth. |

| Unique Molecular Identifiers (UMIs) | Integrated into adapters for assays like scATAC-seq or methyl-seq to correct for PCR amplification biases that can vary batch-to-batch. |

| Inter-plate Control Samples | Aliquots from a single, large biological sample included on every processing batch (e.g., every 96-well plate for arrays) to directly measure inter-batch variation. |

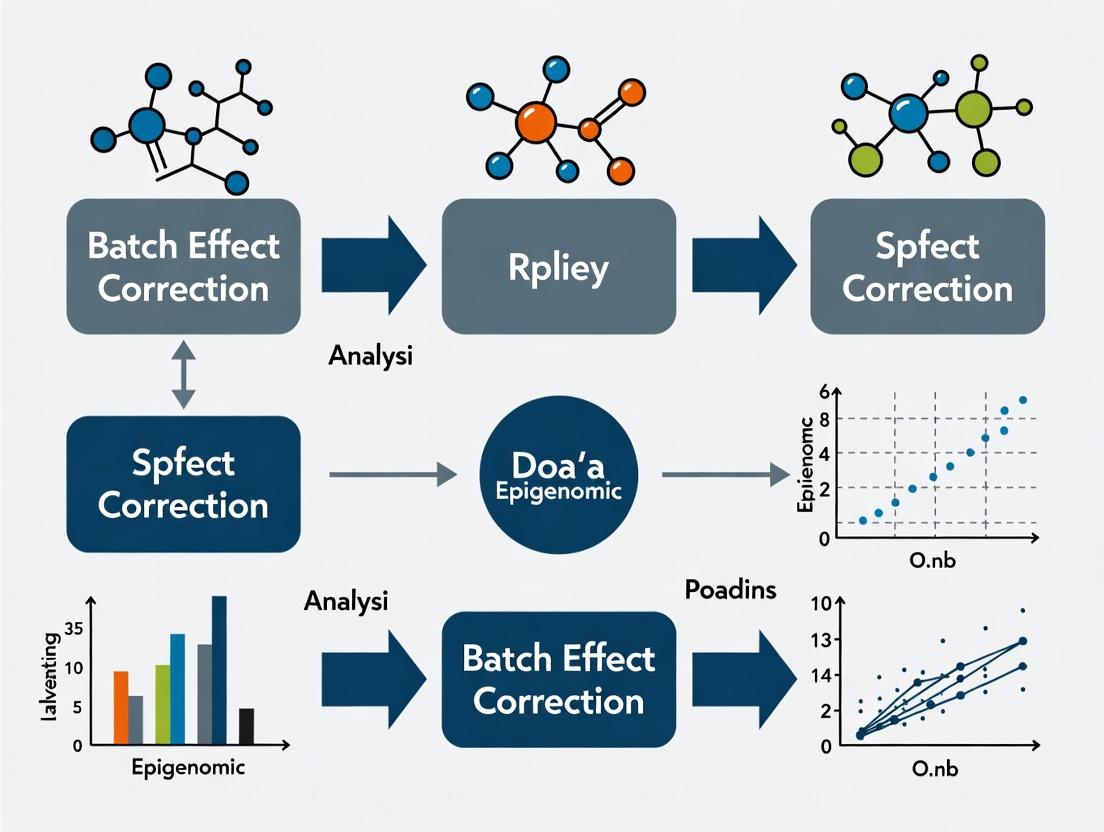

Visualization: Batch Effect Analysis & Correction Workflow

Diagram Title: Epigenomic Batch Effect Correction Decision Workflow

Diagram Title: Key Batch Effect Sources in Epigenomics Workflow

This technical support center is framed within a thesis investigating batch effect correction in epigenomic data analysis. Batch effects, systematic non-biological variations introduced by technical sources, are a critical confounder in integrating data from different assays, protocols, and sequencing runs. Identifying and troubleshooting these common sources is essential for robust research and drug development.

Troubleshooting Guides & FAQs

FAQ Category 1: Assay Protocol Variability

Q1: Our ChIP-seq replicates from the same cell line, processed months apart, show poor correlation in peak calls. What are the most likely protocol-related sources? A: This is a classic batch effect from protocol drift. Key sources include:

- Antibody Lot Variability: Different lots of the same antibody (e.g., H3K27ac) can have varying affinities and specificities.

- Cell Culture Passage Number: Epigenetic states can drift with high passage numbers.

- Sonication Efficiency & Fragment Size Distribution: Inconsistent shearing alters pull-down efficiency and sequencing library profiles.

- Reagent Depletion: Enzymes in kit-based protocols (e.g., for end-repair, ligation) lose activity over time.

Q2: For ATAC-seq, how does nuclei isolation method impact inter-batch consistency? A: The choice between detergent-based or mechanical isolation, and the specific detergent (e.g., NP-40, Igepal CA-630) concentration, significantly affects nuclear purity and accessibility. Inconsistent lysis leads to variable proportions of mitochondrial DNA reads and background signal, creating major batch effects.

FAQ Category 2: Library Preparation & Sequencing

Q3: We pooled libraries sequenced across two different Illumina platforms (e.g., NextSeq 2000 vs NovaSeq 6000). We observe a platform-specific shift in GC-content bias. How do we address this? A: This is a known technical batch source. Different platforms and even different flow cell types within a platform can have distinct optical and clustering biases. Do not correct biologically using GC-content normalization alone, as it may remove real biology. The solution is to:

- Document: Record platform, flow cell type, and control lane data for each library.

- Analyze with Controls: Use pre-defined positive control samples (e.g., reference epigenome cell lines) across batches to quantify the platform effect.

- Apply Platform-Aware Correction: Use batch effect correction tools (e.g., ComBat-seq, limma) that include

sequencing_runorplatformas a covariate in the model.

Q4: How does sequencing depth variation between batches confound differential analysis, and what is the minimum viable depth for batch integration? A: Inadequate or highly variable depth increases noise and false negatives, complicating batch correction. Below are general guidelines for human genomes:

| Assay | Minimum Recommended Depth per Sample | Depth for Robust Differential Analysis | Note on Batch Integration | |

|---|---|---|---|---|

| ChIP-seq (Transcription Factor) | 10-20 million reads | 20-50 million reads | Low-depth samples act as outliers; depth-match before integration. | |

| ChIP-seq (Histone Mark) | 20-30 million reads | 40-60 million reads | ||

| ATAC-seq | 25-50 million reads | 50-100 million reads | High depth needed for single-cell integration. | |

| WGBS | 30-50 million reads | 80-100 million reads | Depth requirements are CpG site coverage-dependent (e.g., 10-30x). | |

| RRBS | 5-10 million reads | 10-20 million reads |

FAQ Category 3: Data Processing & Metadata Tracking

Q5: Our processed data matrix shows batch effects aligned with the "Analyst" metadata field. What pipeline steps are most analyst-dependent? A: Manual, non-standardized steps in bioinformatics pipelines are major batch sources:

- Adapter Trimming Parameters: (e.g., stringency settings in Trim Galore!/Cutadapt).

- Read Alignment Filtering: (e.g., MAPQ score thresholds in BAM filtering).

- Peak Calling Parameters: (e.g.,

-qvalue in MACS2, or using different callers). - Blacklist Region Handling: Inclusion or exclusion of ENCODE blacklists.

Solution: Use containerized, version-controlled pipelines (e.g., Nextflow, Snakemake with Docker/Singularity) and record ALL parameters in a machine-readable metadata sheet.

Detailed Experimental Protocol: Cross-Batch Validation Experiment

Objective: To quantify the batch effect introduced by different library preparation dates for ATAC-seq.

Materials: See "The Scientist's Toolkit" below. Cell Line: K562 (reference epigenome). Experimental Design: Split a large, passage-controlled K562 culture into aliquots. Process them in three identical but temporally separated batches (Batch A, B, C), each including its own positive control (K562) and a negative control (cell-free) sample.

Protocol:

- Nuclei Isolation: Isolate nuclei from 50,000 K562 cells per replicate using the same detergent-based protocol. Perform all batches with the same equipment but on different days.

- Tagmentation: Use the same lot of loaded Tn5 transposase for all batches. Perform reaction for 30 minutes at 37°C in a thermal cycler.

- Library Amplification: Amplify tagmented DNA using 1x KAPA HiFi HotStart ReadyMix. Determine cycle number using a qPCR side reaction (see diagram workflow).

- Library Pooling & Sequencing: Quantify libraries by Qubit and qPCR. Pool equimolar amounts of all libraries from all three batches into a single pool.

- Sequencing: Sequence the single pool on one lane of an Illumina NovaSeq 6000 S4 flow cell to avoid sequencing platform batch effects.

Data Analysis for Batch Detection:

- Process all FASTQ files through a single, standardized pipeline (e.g., ENCODE ATAC-seq pipeline).

- Generate a consensus peak set across all samples.

- Create a count matrix of reads in peaks.

- Perform Principal Component Analysis (PCA). Batch effects are indicated by clustering of samples primarily by preparation date (Batch ID) rather than sample type.

Visualizations

Diagram 1: Cross-Batch ATAC-seq Experimental Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function | Consideration for Batch Control |

|---|---|---|

| Validated Antibodies (ChIP-seq) | Specific immunoprecipitation of DNA-protein complexes. | Use antibodies with high-quality certifications (e.g., CUT&RUN validated). Purchase large lots for multi-batch projects. |

| Loaded Tn5 Transposase (ATAC-seq) | Simultaneously fragments and tags accessible DNA with adapters. | Critical batch source. Pre-purchase a large, single lot from a trusted vendor or prepare a large homemade batch. |

| Methylation-aware Enzymes (WGBS/RRBS) | Bisulfite conversion, methylation-dependent restriction. | Enzyme efficiency varies. Use kits from the same lot and include unmethylated/methylated spike-in controls. |

| Size Selection Beads (SPRI) | Cleanup and size selection of DNA fragments. | Bead lot and age can affect size cutoffs. Calibrate bead-to-sample ratio per new lot. |

| Universal qPCR Library Quant Kit | Accurate quantification of amplifiable library fragments. | Essential for equitable pooling. More accurate than fluorometry alone. Use the same kit standard for all batches. |

| Reference Epigenome Cell Line (e.g., K562) | Positive biological control across batches. | Maintain a large, low-passage frozen stock. Thaw a new vial for each batch to control for culture drift. |

| External Spike-in Control (e.g., S. cerevisiae DNA) | Added in fixed amount before library prep. | Allows direct normalization for technical variation in IP efficiency/tagmentation. |

Technical Support Center: Batch Effect Troubleshooting in Epigenomics

Troubleshooting Guides

Issue: Inconsistent Clustering by Batch in PCA/UMAP

- Symptoms: Samples cluster more strongly by processing date, technician, or reagent lot than by biological condition in dimensionality reduction plots.

- Diagnosis: Strong technical batch effect obscuring biological signal.

- Solution Steps:

- Visualize: Generate a PCA plot colored by known batch covariates (e.g., sequencing run, extraction date).

- Quantify: Calculate metrics like Percent Variance Explained by batch using ANOVA.

- Correct: Apply a batch effect correction method (e.g., ComBat, limma's

removeBatchEffect) after careful model design. - Re-cluster: Re-run PCA/UMAP on corrected data to assess improvement.

Issue: Failed Replication of Differential Methylation Regions (DMRs)

- Symptoms: DMRs identified in a discovery cohort do not validate in a follow-up study, despite similar biology.

- Diagnosis: Batch effects have been confounded with the biological factor of interest, leading to false discoveries.

- Solution Steps:

- Audit Design: Check if batch is perfectly confounded with a group (e.g., all controls processed in Batch 1, all cases in Batch 2). If so, the experiment is irreparably flawed.

- Integrated Analysis: Re-analyze discovery and validation datasets together, including "study" as a batch covariate in the model.

- Use Robust Methods: Apply cross-validation frameworks that account for batch structure to estimate true prediction error.

Issue: Drift in Control Probe Intensity Over Time

- Symptoms: Internal control probes or spike-in controls show a systematic trend across array rows or sequencing runs.

- Diagnosis: Technical drift within or between experimental batches.

- Solution Steps:

- Plot Trends: Graph control probe values by position or run date.

- Normalize: Use intensity-based normalization methods (e.g., quantile normalization for arrays, RUV for sequencing) that leverage these controls.

- Benchmark: Compare pre- and post-normalization values of control probes to ensure stabilization.

Frequently Asked Questions (FAQs)

Q1: What is the first step I should take to diagnose batch effects in my epigenomic dataset? A: The critical first step is visual inspection. Perform an unsupervised analysis (PCA or MDS) on your processed but uncorrected data. Color the data points by all known technical variables (batch, run date, chip, lane) and biological variables. If samples cluster strongly by a technical variable, you have a batch effect. See the workflow diagram below.

Q2: Should I correct for batch effects before or after performing differential analysis?

A: Batch correction should be integrated into the statistical model for differential analysis whenever possible. For example, include 'batch' as a covariate in a linear model (e.g., in limma or DESeq2). Pre-correcting the data and then treating it as raw input for differential analysis can lead to over-fitting and anti-conservative p-values. The key is to account for batch variation while testing for the biological effect of interest.

Q3: How do I choose between ComBat and SVA for batch correction? A: The choice depends on your experimental design and knowledge.

- Use ComBat (empirical Bayes) when you have clearly defined, known batches. It effectively shrinks the batch means toward the overall mean.

- Use SVA (Surrogate Variable Analysis) or RUV (Remove Unwanted Variation) when you suspect unknown or unmeasured sources of batch variation (e.g., subtle environmental differences). These methods estimate latent factors from the data itself.

Q4: Can batch effects ever be "too large" to correct? A: Yes. Correction algorithms rely on the assumption that batch effects do not completely confound the biological signal. If all samples from condition A are in batch 1 and all from condition B are in batch 2, it is mathematically impossible to disentangle biology from batch. No algorithm can salvage such a design. Randomized, balanced block designs are essential.

Table 1: Impact of Batch Effects on Reproducibility in Published Studies

| Study & Data Type | Batch Effect Source | Consequence | Magnitude of Impact |

|---|---|---|---|

| DNA Methylation Array (Lehne et al., 2015) | Processing Plate | False DMPs | Up to 100,000 CpGs associated with plate alone |

| ChIP-seq (Johnson et al., 2007) | Sequencing Run | Inconsistent Peak Calls | >50% of peaks not reproducible between runs |

| RNA-seq (The SEQC Consortium, 2014) | Sequencing Site | Differential Expression Lists | Site explained up to 40% more variance than biology |

| scATAC-seq (Tran et al., 2020) | Donor & Chemistry Lot | Clustering Artifacts | Batch explained ~30% of variance in latent space |

Table 2: Comparison of Common Batch Effect Correction Methods

| Method | Principle | Best For | Key Assumption | Software/Package |

|---|---|---|---|---|

| ComBat | Empirical Bayes shrinkage of batch means | Known batches, balanced designs | Batch effect is additive/multiplicative, not confounded | sva (R) |

limma removeBatchEffect |

Linear model adjustment | Known batches, preparing data for visualization | Linear batch effect | limma (R) |

| SVA | Estimation of surrogate variables | Unknown factors, complex designs | Surrogate variables represent batch, not biology | sva (R) |

| RUV | Using control genes/features | Any design with negative controls | Control features are only affected by batch | RUVseq, ruvim (R) |

| Harmony | Iterative clustering & integration | Single-cell data, high-dimensional spaces | Cells of the same type cluster across batches | harmony (R/Python) |

Experimental Protocol: Diagnosing and Correcting Batch Effects in DNA Methylation Array Data

Objective: To identify and remove technical batch effects from Illumina EPIC array data to recover biologically relevant differential methylation.

Materials: See "Research Reagent Solutions" table below.

Workflow:

- Raw Data Loading: Import IDAT files into R using

minfi. Create anRGChannelSetobject. - Quality Control & Preprocessing:

- Generate QC report (

minfi::qcReport). - Perform functional normalization (

minfi::preprocessFunnorm) to control for probe design bias. - Filter probes: Remove those with detection p-value > 0.01, cross-reactive probes, and SNPs.

- Extract Beta values for analysis.

- Generate QC report (

- Batch Effect Diagnosis:

- Perform PCA on the 50,000 most variable CpGs.

- Color PCA plots by

Array_ID,Slide,Processing_Date, andBiological_Group. - Calculate variance contributions using

pvca::pvcaBatchAssess.

- Statistical Modeling & Correction:

- If batches are known and balanced: Use a linear model with

limma. Includebatchandbiological_groupin the design matrix. Fit model and extract contrasts for biology. - If unknown factors suspected: Run

sva::sva()to estimate surrogate variables (SVs). Add top SVs to thelimmadesign matrix.

- If batches are known and balanced: Use a linear model with

- Post-Correction Validation:

- Re-run PCA on the residuals of the model (or corrected values).

- Confirm clustering by

Biological_Groupand dispersion by technical factors is minimized. - Test positive and negative control genomic regions.

Visualizations

Title: Batch Effect Diagnosis and Correction Workflow

Title: Confounded vs Balanced Experimental Design

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Robust Epigenomic Studies

| Item | Function | Consideration for Batch Effects |

|---|---|---|

| Reference Standards (e.g., commercially available methylated/unmethylated DNA) | Serve as inter-laboratory and inter-batch positive controls. Normalization anchor. | Use the same master aliquots across all batches of an experiment. |

| Spike-In Controls (e.g., ERCC RNA spikes, methylated lambda phage DNA) | Added in known quantities to track technical variation in library prep, sequencing efficiency, and detection thresholds. | Enables methods like RUV that use these controls to estimate unwanted variation. |

| Whole Genome Amplified DNA (e.g., from cell lines) | Homogeneous, renewable biological material used as a process control across multiple batches/runs. | Monitor technical performance drift over time independent of sample biology. |

| Automated Nucleic Acid Extraction System | Standardizes the pre-analytical phase, a major source of batch variation. | Reduces technician-to-technician variability. Calibrate regularly. |

| Multi-Sample Reagent Kits (with single lot number) | Using a single kit lot for an entire study minimizes reagent-driven batch effects. | Plan procurement to acquire all necessary kits from one manufacturing lot. |

| Barcoded Adapters & Indexed Sequencing Primers | Allows multiplexing of samples from different conditions across sequencing lanes. | Critical: Balance biological conditions across lanes/runs to avoid confounding. |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: During Principal Component Analysis (PCA) of my DNA methylation array data, the first principal component (PC1) strongly correlates with sample processing date, not biological group. What does this indicate and how should I proceed? A1: This is a classic sign of a significant technical batch effect. PC1 capturing processing date suggests technical variation outweighs biological signal. Proceed as follows:

- Diagnostic: Confirm by coloring the PCA plot by

Sample_PlateorProcessing_Batch. A strong clustering by these factors confirms the issue. - Action: Apply a batch effect correction method like ComBat or limma's

removeBatchEffect. Re-run PCA post-correction. The correlation of PC1 with the batch variable should be minimized. See the Batch Effect Diagnostic Workflow diagram.

Q2: After applying ComBat to my ChIP-seq peak intensity matrix, my biological replicates from the same condition are no longer clustering together in the hierarchical clustering dendrogram. What went wrong? A2: This indicates potential over-correction, where the algorithm has removed biological signal along with batch noise. This often occurs when batch is confounded with condition.

- Check Confounding: Create a table of your experimental design. If all samples from

Condition_Awere processed inBatch_1and all fromCondition_BinBatch_2, the effects are perfectly confounded. - Solution: In confounded scenarios, use an unsupervised approach. Shift focus to detection rather than full correction. Use the batch-aware metrics and visualizations (like PVCA or batch-specific density plots) to quantify and document the batch effect's strength in your final report, acknowledging it as a study limitation.

Q3: When using the SVA package to estimate surrogate variables for my RNA-seq data, how do I determine if I am capturing batch or biology? A3: This is a critical diagnostic step. You must correlate the estimated surrogate variables (SVs) with both known batch factors (e.g., technician, run date) and key biological covariates (e.g., disease status, age).

- Protocol:

- Run

sva::svaseq()to estimatenSVs. - Perform a linear regression or calculate correlation coefficients between each SV (SV1, SV2,...) and each known metadata variable.

- Create a correlation heatmap (SV vs. Metadata). SVs strongly associated with technical factors are good candidates for inclusion in your downstream model. See the Surrogate Variable Analysis Diagnostics diagram.

- Run

Q4: My Negative Control probes in the 450K/EPIC array show widely differing median intensities between two batches. What is an acceptable threshold for deviation? A4: Significant deviation in negative control probes is a red flag for intensity batch effects. Use quantitative thresholds.

- Diagnostic Metric: Calculate the median intensity of the Type-I and Type-II negative control probes for each sample.

- Acceptance Threshold: A difference in median intensity > 500-1000 arbitrary units between the median of one batch and another is a cause for concern. It suggests global background differences that require correction via normalization (e.g., BMIQ, Dasen) before any downstream batch adjustment.

Experimental Protocols for Key Diagnostic Experiments

Protocol 1: Principal Variance Component Analysis (PVCA) for Batch Effect Quantification PVCA integrates PCA and Variance Components Analysis to quantify the proportion of variance attributable to each batch factor.

- Input: Normalized (but not batch-corrected) data matrix (e.g., beta-values for methylation, counts for sequencing).

- Perform PCA: Run PCA, retaining top

kprincipal components (PCs) that explain e.g., 70% of total variance. - Variance Components: Using the loadings of these

kPCs as the outcome variable, fit a linear mixed model with your batch factors (e.g.,~ (1|Processing_Date) + (1|Slide) + (1|Disease_Status)). - Calculation: For each factor, average the variance explained across the

kPCs, then compute its weight as a percentage of the total variance explained by these PCs. - Output: A table and bar chart showing the variance proportion for each factor.

Protocol 2: Density Plot and Median Absolute Deviation (MAD) Comparison Across Batches This assesses location and scale differences in probe or gene-level distributions.

- Input: The normalized intensity or value matrix.

- Per-Batch Aggregation: For each batch, calculate the density distribution of all values across all samples in that batch.

- Visualization: Plot overlapping density curves, one per batch, colored by batch.

- Quantitative Metric: For each batch, calculate the Median Absolute Deviation (MAD) of all data points. Compare MAD values between batches. A >10% relative difference in MAD suggests a scale batch effect.

- Action: Methods like ComBat (with

parametric=TRUE) can adjust for both location (mean) and scale (variance) differences.

Table 1: Quantitative Thresholds for Common Batch Effect Diagnostics

| Diagnostic Method | Metric | Acceptable Threshold | Flag for Action | Typical Tool/Package |

|---|---|---|---|---|

| PCA | % Variance explained by PC correlated with a known batch factor | < 10% of PC1 variance | > 25% of PC1 variance | stats::prcomp(), ggplot2 |

| PVCA | Proportion of variance attributed to a technical factor | < 5% total variance | > 15% total variance | pvca::pvcaBatchAssess() |

| Control Probes (Array) | Difference in median intensity between batches | < 500 a.u. | > 1000 a.u. | minfi::getQC() |

| Hierarchical Clustering | Cophenetic correlation coefficient of batch-subtrees | > 0.8 (high fidelity) | < 0.6 (poor structure) | stats::hclust(), cophenetic() |

| MAD Comparison | Relative difference in MAD between batches | < 10% | > 20% | stats::mad() |

Mandatory Visualizations

Title: Batch Effect Diagnostic Workflow

Title: Surrogate Variable Analysis Diagnostics

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Tools for Epigenomic Batch Effect Management

| Item Name | Function/Description | Example Product/Software |

|---|---|---|

| Reference Epigenome Control Samples | Commercially available standardized DNA (e.g., from multiple cell lines) processed alongside experimental samples to monitor inter-batch technical variation. | Coriell Institute Cell Lines, commercial "pooled" reference standards. |

| Universal Methylation Controls | Synthetic DNA spikes with known methylation levels (0%, 50%, 100%) added to each sample before processing to calibrate assay efficiency across batches. | Zymo Research's EpiTect Methylation Control Set. |

| Bisulfite Conversion Efficiency Kit | qPCR-based assay to verify complete bisulfite conversion of unmethylated cytosines, a major source of pre-analytical batch effects. | Qiagen's EpiTect PCR Control Set. |

| Bioinformatic Pipeline Manager | Tool to ensure identical software versions, reference genomes, and parameters are used across all analyses to prevent computational batch effects. | Nextflow, Snakemake, Docker/Singularity containers. |

| Batch Effect Correction Software Suite | Integrated R/Bioconductor packages providing multiple diagnostic and correction algorithms. | sva, limma, ComBat (in sva), harmony. |

| High-Throughput Scanner with Calibration | For microarray platforms, a scanner with daily calibration protocols to ensure consistent fluorescence detection intensity. | Illumina iScan system with associated calibration cartridges. |

The Correction Toolkit: Algorithms and Applications for Epigenomic Data

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My ComBat-corrected data shows over-correction, with biological signal being removed along with batch effects. What went wrong and how can I fix it?

A: This occurs when the model mistakenly interprets biological variation of interest as a batch effect. The primary cause is incorrect specification of the model matrix.

- Solution: Use the

model.matrixparameter insva::ComBatto explicitly protect your biological covariates of interest (e.g., disease state). Always visualize Principal Component Analysis (PCA) plots pre- and post-correction, coloring by both batch and biological condition. - Protocol: 1) Run PCA on uncorrected data. 2) Apply ComBat with

model=model.matrix(~your_biological_covariate). 3) Run PCA on corrected data. 4) Compare variance explained by first PCs attributed to batch vs. biology.

Q2: When using RUV (Remove Unwanted Variation), how do I select appropriate negative control features or estimate the factor k?

A: This is the most critical step for RUV success.

- For Negative Controls: Use spike-ins (for sequencing) or predefined housekeeping genes/probes empirically validated to be stable across your conditions. If unavailable, use in silico empirical controls (e.g., genes with lowest variance across samples) with caution.

- For Estimating k: Use the

RUVSeq::RUVkestimation function or a sensitivity analysis. The guideline is:- Perform RUV correction across a range of k values (e.g., 1-10).

- For each k, assess the algorithm's objective (e.g., removal of batch signal).

- Plot the residual batch effect (via PCA or ANOVA) against k. Choose the k where the marginal gain plateaus (the "elbow").

Q3: Harmony integration runs indefinitely or fails to converge on my large single-cell epigenomics dataset. How can I optimize it?

A: This is often due to excessive dataset size or high dimensionality.

- Solution: Implement the following parameter adjustments in

harmony::RunHarmony:- Increase

max.iter.harmonyfrom default 10 to 20 or 30. - Reduce PCA dimensions (

npcs), often 20-50 is sufficient. - Increase the

epsilon.clusterandepsilon.harmonytolerance parameters (e.g., to 1e-5) for earlier convergence. - Subsample your cells for a preliminary run to find optimal parameters before a full run.

- Increase

- Workflow: Subsample -> Test Parameters -> Full Run.

Q4: My deep learning autoencoder for batch correction is not generalizing to new, unseen batches. How do I improve out-of-sample performance?

A: This indicates overfitting to the training batches.

- Solution:

- Architecture: Incorporate stronger regularization (e.g., dropout layers, L1/L2 weight decay) within the encoder.

- Training Strategy: Use a held-out validation batch (not used in training) to monitor performance and implement early stopping.

- Algorithm Choice: Consider using a Domain-Adversarial Neural Network (DANN) framework, which explicitly learns features invariant to batch through a gradient reversal layer, improving generalizability.

Comparative Performance Data

Table 1: Algorithm Comparison for Epigenomic Data Correction

| Algorithm | Core Method | Key Strengths | Key Limitations | Suggested Use Case |

|---|---|---|---|---|

| ComBat | Empirical Bayes | Fast, robust for small N, preserves biological variance. | Assumes parametric distribution, requires batch annotation. | Methylation array data (450K/EPIC), known batch design. |

| RUV | Factor Analysis | Flexible (multiple versions), uses controls. | Control selection is critical; can be computationally heavy. | ChIP-seq, ATAC-seq with spike-ins or reliable controls. |

| Harmony | Iterative Clustering | Non-linear, integrates well with scRNA/scATAC-seq workflows. | Convergence issues on very large data; stochastic results. | Single-cell epigenomic data integration (scATAC-seq). |

| scGen | Variational Autoencoder (VAE) | Maps perturbed states, predicts unseen conditions. | High computational demand, requires large sample size. | In-silico control prediction in epigenetic drug screens. |

| DANN | Adversarial Learning | Promotes batch-invariant features, generalizes well. | Complex training, sensitive to hyperparameters. | Harmonizing large-scale public datasets from different labs. |

Experimental Protocol: Benchmarking Batch Effect Correction

Objective: To evaluate and select the optimal batch correction algorithm for an epigenomic dataset (e.g., DNA methylation array data from two laboratories).

Materials:

- Raw beta/m-value matrices from all batches.

- Metadata file with batch ID and biological condition.

- R/Python environment with necessary packages (

sva,Harmony,scikit-learn,PyTorch/TensorFlow).

Procedure:

- Preprocessing & QC: Normalize data within batches using standard pipelines (e.g.,

minfifor methylation). Filter low-quality probes/cells. - Baseline Assessment: Perform PCA on the uncorrected data. Calculate two metrics:

- Batch Variance: Average variance explained by PCs correlated with batch (PCs 1-2).

- Biological Cluster Separation: Silhouette score or PCA-based MANOVA for known biological groups.

- Correction Application: Apply each correction algorithm (ComBat, RUV-4, Harmony, etc.) independently to the normalized data.

- Post-Correction Evaluation: Repeat PCA and metric calculation on each corrected dataset.

- Optimal Algorithm Selection: The optimal method maximizes biological separation while minimizing residual batch variance. Visual inspection of PCA plots is essential.

Visualizations

Benchmarking Workflow for Batch Correction

Taxonomy of Batch Correction Algorithms

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Epigenomic Batch Correction Studies

| Item | Function in Context | Example/Note |

|---|---|---|

| Reference Standard Samples | Inter-batch calibration control. Processed identically across all batches to track technical variation. | Commercial methylated/unmethylated DNA controls (e.g., from Zymo Research). |

| Spike-in Controls | Exogenous controls added pre-processing for factor-based correction (RUV). | ERCC RNA spikes (for RNA-seq) or SNAP-CPG synthetic methylated DNA spikes. |

| Housekeeping Gene/Region Panel | Set of empirically stable genomic loci used as negative controls. | Methylation: ALU-C4, LINE-1 repeats (controversial). Expression: ACTB, GAPDH. |

| High-Performance Computing (HPC) Access | Running complex integrations (Harmony) or deep learning models requires significant RAM/GPU. | Cloud (AWS, GCP) or institutional cluster with >= 32GB RAM, multi-core CPUs, optional GPU. |

| Containerization Software | Ensures reproducibility of analysis pipelines across computing environments. | Docker or Singularity containers with all dependencies (R, Python, packages) version-locked. |

Technical Support Center: Troubleshooting Guides and FAQs

This support center addresses common issues in epigenomic assay data generation and preprocessing, framed within a thesis on batch effect correction. Identifying and mitigating these issues at the wet-lab and early analysis stage is critical for robust multi-batch, multi-omics integration.

ChIP-seq Troubleshooting

Q1: My ChIP-seq library has very low yield after adapter ligation and PCR. What could be the cause? A: Low yield often stems from insufficient input DNA or suboptimal fragmentation. Verify DNA concentration post-sonication with a fluorometric assay (e.g., Qubit). Ensure shearing parameters are optimized for your cell type; cross-linked chromatin can be harder to shear. Over-crosslinking is a common culprit. As a guideline, use 0.5–1 million cells or 5–10 µg of chromatin per IP, and titrate formaldehyde concentration (typically 1%) and crosslinking time (8-12 minutes).

Q2: I observe high background/peaks in my control (IgG) sample. How can I improve specificity? A: High background indicates non-specific antibody binding. Pre-clear chromatin with Protein A/G beads before IP. Increase wash stringency (e.g., use high-salt wash buffers: 500 mM NaCl). Titrate the antibody amount; too much antibody increases background. Always use a validated, ChIP-grade antibody. For your thesis, note that high background in controls can be misidentified as biological signal, creating severe batch-specific artifacts if antibody lots vary.

Table 1: ChIP-seq Common Issues & Solutions

| Issue | Potential Cause | Diagnostic Check | Solution |

|---|---|---|---|

| Low read alignment rate | Poor fragmentation size | Bioanalyzer trace of sonicated DNA | Optimize sonication cycles/duty time |

| Few peaks called | Over-fixation | Test crosslinking time series | Reduce formaldehyde % or duration |

| High duplicate reads | Low input material | Check PCR duplication rate in metrics | Scale up starting cell number |

| Streaky peaks in IgG | Non-specific binding | Compare IgG signal to input | Increase wash stringency, pre-clear |

ChIP-seq Protocol (Core)

- Crosslink cells with 1% formaldehyde for 10 min at RT. Quench with 125 mM glycine.

- Lyse cells and shear chromatin via sonication to 200–500 bp fragments. Verify size on agarose gel.

- Immunoprecipitate: Incubate clarified lysate with target-specific antibody (e.g., 1–5 µg) overnight at 4°C. Capture with Protein A/G beads.

- Wash beads sequentially with Low Salt, High Salt, LiCl, and TE buffers.

- Reverse crosslinks at 65°C overnight with shaking. Purify DNA via phenol-chloroform extraction.

- Library preparation: End repair, A-tailing, adapter ligation, and PCR amplification (typically 12–18 cycles).

- Sequence on Illumina platform (≥20 million non-duplicate reads recommended).

ATAC-seq Troubleshooting

Q3: My ATAC-seq fragment size distribution lacks a clear nucleosomal periodicity. Why? A: This suggests over-digestion or inadequate lysis. The most critical step is the lysis of cell membranes without damaging nuclei. Use ice-cold lysis buffer and precisely time the 3-minute incubation. Do not vortex. Use fresh Tn5 transposase and ensure it is thoroughly but gently mixed. For frozen cells, nuclei isolation must be optimized. Lack of periodicity can mask accessible regions, introducing variability that correlates with sample preparation batch.

Q4: There is mitochondrial DNA contamination (>20% of reads). How do I reduce it? A: High mitochondrial reads indicate nuclear membrane rupture. Be gentler during centrifugation steps (use 500 x g, not higher). For difficult samples, perform a nuclei purification step using a sucrose gradient or a commercial nuclei isolation kit. You can also bioinformatically filter these reads, but high levels signal poor-quality data that may require batch-specific preprocessing.

Table 2: ATAC-seq Quality Metrics & Targets

| Metric | Ideal Target | Problem if Outside Range | Batch Effect Risk |

|---|---|---|---|

| Mitochondrial reads | <10% | Over-digestion, poor lysis | High – correlates with sample prep date |

| TSS Enrichment Score | >10 | Low signal-to-noise | Medium – can confound integration |

| Fraction of reads in peaks (FRiP) | >0.2 | Low complexity/library yield | High – major source of technical variance |

| Nucleosomal periodicity | Visible 1-6N peaks | Over-digestion | Medium – affects peak calling consistency |

ATAC-seq Protocol (Omn-ATAC)

- Harvest & Lyse: Pellet 50,000-100,000 viable cells. Resuspend in cold ATAC-seq Lysis Buffer (10 mM Tris-HCl pH 7.4, 10 mM NaCl, 3 mM MgCl2, 0.1% IGEPAL CA-630). Incubate 3 min on ice.

- Wash nuclei with cold PBS. Pellet at 500 x g for 10 min at 4°C.

- Tagmentation: Incubate nuclei with Tagment DNA Buffer and Tn5 Transposase (Illumina) for 30 min at 37°C with shaking. Immediately purify using a MinElute PCR Purification Kit.

- PCR Amplify: Amplify tagmented DNA with 1/2 volume reaction of NEBNext High-Fidelity 2X PCR Master Mix and custom barcoded primers (typically 10–12 cycles).

- Double-Size Select using SPRI beads (e.g., 0.5X and 1.3X ratios) to remove primer dimers and large fragments.

- Sequence on Illumina platform (paired-end, ≥50 million reads recommended).

DNA Methylation (e.g., Whole-Genome Bisulfite Sequencing) Troubleshooting

Q5: After bisulfite conversion, my DNA recovery is extremely low, impacting library complexity. A: DNA degradation during conversion is common. Ensure DNA is high-quality (A260/A280 ~1.8, A260/A230 >2.0). Use a commercial bisulfite conversion kit with a dedicated desulfonation step and optimized buffers. Include carrier RNA if recommended. Elute in a low-EDTA TE buffer or water. For low-input protocols, use post-bisulfite adapter tagging (PBAT) methods to minimize loss.

Q6: My bisulfite conversion rate is below 99%. How does this affect analysis and batch correction? A: Incomplete conversion leads to false positive methylation calls. It can be batch-specific if conversion efficiency varies between runs. Calculate conversion rate from spike-in lambda phage DNA or non-CpG cytosines in the genome. If rate is low, check bisulfite solution age/freshness, ensure precise thermocycler incubation (temperature and time), and verify pH of conversion reagents. Data with variable conversion rates must be normalized or flagged before integration.

Table 3: Methylation Assay Performance Benchmarks

| Parameter | WGBS Target | RRBS Target | EPIC Array Target | Notes |

|---|---|---|---|---|

| Bisulfite Conversion Efficiency | ≥99.5% | ≥99.5% | ≥99% | Monitor via non-CpG C's or controls |

| Mapping Efficiency | ~70% | ~60% | >95% | WGBS/RRBS use bisulfite-aware aligners |

| CpG Coverage Depth (Mean) | 15-30X | 50-100X | NA | Critical for differential analysis |

| Duplicate Rate | <20% | <15% | NA | High rates indicate low input/PCR bias |

WGBS Protocol Summary

- DNA Extraction & QC: Use phenol-chloroform or column-based method. Verify integrity.

- Bisulfite Conversion: Use the Zymo Research EZ DNA Methylation-Lightning Kit.

- Denature DNA in M-Dilution Buffer at 37°C for 15 min.

- Add CT Conversion Reagent, incubate: 98°C for 8 min, 54°C for 60 min.

- Desulphonate using M-Binding Buffer and spin columns. Wash and elute.

- Library Construction: Use a post-bisulfite library prep kit (e.g., Accel-NGS Methyl-Seq DNA Library Kit) to prevent adapter conversion. Includes end repair, tailing, adapter ligation, and Uracil-Insensitive PCR (8-12 cycles).

- Sequence on Illumina platform (paired-end, targeting 30X coverage for human genomes).

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Epigenomic Assays

| Item | Function | Example Product/Brand |

|---|---|---|

| ChIP-grade Antibody | Specific immunoprecipitation of target protein or histone mark. | Cell Signaling Technology, Abcam, Diagenode |

| Protein A/G Magnetic Beads | Efficient capture of antibody-antigen complexes. | Dynabeads (Thermo Fisher), Sera-Mag beads |

| Tn5 Transposase | Simultaneous fragmentation and tagging of accessible DNA for ATAC-seq. | Illumina Tagment DNA TDE1 Enzyme |

| Nuclei Isolation Buffer | Gentle lysis of plasma membrane while keeping nuclei intact. | 10mM Tris, 10mM NaCl, 3mM MgCl2, 0.1% IGEPAL |

| Bisulfite Conversion Kit | Efficient deamination of unmethylated cytosines to uracils. | Zymo EZ DNA Methylation-Lightning Kit |

| SPRI Beads | Size selection and purification of DNA fragments. | AMPure XP Beads (Beckman Coulter) |

| Uracil-Insensitive Polymerase | PCR amplification of bisulfite-converted DNA without bias. | Kapa HiFi Uracil+ (Roche) |

| DNA High-Sensitivity Assay Kit | Accurate quantification of low-concentration DNA. | Qubit dsDNA HS Assay (Thermo Fisher) |

Experimental Workflow Diagrams

Title: ChIP-seq Experimental Workflow

Title: ATAC-seq Experimental Workflow

Title: WGBS Methylation Analysis Workflow

Title: Batch Effect Sources in Epigenomics

FAQs & Troubleshooting Guide

Q1: After correcting for batch effects in my DNA methylation array data, the principal component analysis (PCA) still shows strong clustering by plate. What is the most likely cause?

A1: This often indicates an incomplete or suboptimal correction. The primary causes and solutions are:

- Cause: Non-linear or complex batch effects not addressed by linear correction methods (e.g., ComBat).

Action: Implement non-linear or parametric methods. Re-run correction using

ComBatwith theparametric=TRUEoption or use a non-linear method likeSVA(Surrogate Variable Analysis) orRUVm(Remove Unwanted Variation for methylation). Ensure your model matrix correctly specifies the biological variable of interest.Cause: Outliers or failed samples within a batch disproportionately influencing the correction.

- Action: Perform stringent pre-correction quality control (QC). Remove samples with low detection p-values (>0.01 for >5% of probes) or high missing value rates. Recalculate median intensity checks and inspect sample-wise beta value density plots per batch.

Q2: When integrating multiple public ChIP-seq datasets for histone mark analysis, what is the best practice to handle variation in peak calling and sequencing depth during batch correction?

A2: The key is to correct on consolidated signal matrices, not called peaks. Follow this protocol:

- Reprocess All Datasets Uniformly: Re-align all raw FASTQ files from different studies to the same reference genome (e.g., GRCh38) using the same aligner (e.g.,

bowtie2orBWA). - Create a Consensus Signal Matrix: Use a tool like

ChIPseekerorDiffBindto define a set of consensus genomic regions (e.g., all promoter regions or union of all called peaks). Count reads mapping to each region for every sample. - Correct on Read Counts: Apply batch correction methods designed for count data, such as

RUVSeqorlimma-voomwith batch as a covariate, to the consolidated read count matrix. Do not correct on BAM file read depths directly.

Q3: My differential binding analysis after batch correction yields no significant hits, suggesting over-correction. How can I diagnose and prevent this?

A3: Over-correction occurs when the batch variable is confounded with the biological signal. Diagnose with this workflow:

- Pre- and Post-Correction Visualization: Generate PCA plots before and after correction. The "after" plot should show reduced batch clustering but maintained grouping for strong, known biological groups (e.g., cell type).

- Use Negative Controls: Include negative control genomic regions (e.g., housekeeping gene promoters expected not to differ) in your analysis. If these become artificially differential post-correction, over-correction is likely.

- Solution - Use Guided Correction: If diagnosed, switch to a guided/supervised method. Use control probes (

RUVm), negative control samples, or known invariant histone marks to estimate the batch effect, preserving the biological signal of interest.

Key Experimental Protocols

Protocol 1: Systematic Batch Effect Diagnosis for Epigenomic Data

Objective: To quantitatively assess the presence and source of batch effects in a DNA methylation (450k/EPIC array) dataset.

Methodology:

- Data Loading & QC: Load IDAT files using

minfiR package. GeneratedetectionPvalues and remove probes with p > 0.01 in >5% of samples. Remove samples with a high proportion of undetected probes. - Normalization: Apply functional normalization (

preprocessFunnorminminfi) to remove intra-array technical variation. - Variance Partitioning: Use the

variancePartitionR package to fit a linear mixed model. Specify the formula:~ (1|Batch) + (1|Slide) + (1|Array) + Age + Disease_Status. This quantifies the percentage of variance attributable to each technical and biological factor. - Visual Diagnosis: Plot the first 4 principal components (PCs) from the

getBetamatrix, colored byBatch,Slide,Processing_Date, andDisease_Status.

Protocol 2: Integration of ATAC-seq Datasets Using Harmony for Batch Correction

Objective: To integrate single-cell or bulk ATAC-seq datasets from multiple studies for consolidated peak analysis.

Methodology:

- Peak Calling & Matrix Creation: Call peaks on each dataset individually using

MACS2. Create a unified peak set usingbedtools merge. Generate a binary accessibility matrix or a count matrix usingfeatureCounts. - Dimensionality Reduction: Perform Latent Semantic Indexing (LSI) on the matrix (via

SignacorcisTopic) to obtain cell-by-topic or sample-by-component matrices. - Harmony Integration: Input the LSI components and a metadata vector specifying the dataset/batch source into the

RunHarmonyfunction. The algorithm iteratively removes dataset-specific clustering. - Downstream Analysis: Use the harmonized embeddings for clustering, visualization (UMAP/t-SNE), and differential accessibility testing.

Table 1: Comparison of Common Batch Effect Correction Methods for Epomics

| Method (Package) | Data Type Suitability | Model Type | Key Strength | Key Limitation |

|---|---|---|---|---|

ComBat (sva) |

Microarrays, Normalized Counts | Linear (Empirical Bayes) | Robust for small batches, preserves biological signal. | Assumes linear effects; can struggle with severe non-linearity. |

ComBat-seq (sva) |

Raw RNA-Seq Counts | Linear (Negative Binomial) | Works directly on counts, avoiding log-transformation artifacts. | Slower on very large datasets. |

Harmony (harmony) |

Single-cell, Dimensional Reductions | Non-linear, Iterative | Excellent for visualization integration, fast. | Corrects embeddings, not original data matrix. |

RUVm (missMethyl) |

DNA Methylation Arrays | Linear (Negative Controls) | Uses control probes for guided correction. | Requires reliable control features. |

limma with removeBatchEffect (limma) |

Continuous Data (Microarrays) | Linear | Simple, fast, integrates with linear modeling. | Can be prone to over-correction if not carefully modeled. |

Table 2: Variance Partitioning Results in a Simulated Methylation Dataset

| Source of Variation | Percentage of Total Variance (%) | Interpretation |

|---|---|---|

| Batch (Processing Date) | 35% | Major technical artifact requiring correction. |

| Disease Status | 22% | Primary biological signal of interest. |

| Slide | 10% | Moderate technical artifact. |

| Age | 8% | Expected biological covariate. |

| Residual | 25% | Unexplained technical/biological noise. |

Visualizations

Title: Step-by-Step Batch Effect Correction Workflow

Title: Visual Diagnosis of Over-Correction in PCA

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Management |

|---|---|

| Inert Control Probes (EPIC Array) | Embedded on methylation arrays to monitor technical performance; used as negative controls in RUVm to estimate batch effect. |

| Reference Epigenome Standards | (e.g., from IHEC or Epigenomics QA consortium) Biofluid or cell line standards processed in every batch to track inter-batch technical variation. |

| Spike-in Genomic DNA | Foreign DNA (e.g., phage Lambda, E. coli) spiked into samples pre-processing for ATAC-seq/ChIP-seq to normalize for technical variation in library prep. |

| Commercial Pre-Made Bisulfite Conversion Kits | Standardized, high-efficiency kits to reduce batch variability introduced during the critical bisulfite conversion step in methylation workflows. |

| UMI Adapters (for scATAC-seq) | Unique Molecular Identifiers in sequencing adapters to correct for PCR amplification bias, a major batch-specific technical noise source. |

Technical Support Center

FAQs and Troubleshooting Guides

Q1: After applying batch correction to my Illumina EPIC array data, I still see a strong association between principal component 1 (PC1) and the processing batch. What should I check?

- A: This persistent batch effect often indicates the presence of strong technical variation that standard normalization (e.g., BMIQ, dasen) cannot address. Proceed with this protocol:

- Pre-filter probes: Remove probes containing SNPs (dbSNP155) and cross-reactive probes (Chen et al., 2013). This reduces biologically irrelevant signal.

- Identify Batch-Associated Features: Perform a linear model analysis (

limmapackage in R) where methylation beta value is the outcome and only the batch ID is the predictor. Select the top 1,000 most significantly batch-associated CpG sites (lowest p-values). - Aggressive Correction: Use a supervised method like ComBat (using the

svapackage) or REFactor, but restrict the correction only to this subset of batch-driven probes. This preserves global biological signal while targeting technical artifacts. - Re-evaluate: Re-run PCA on the corrected subset and the full dataset. PC1 should no longer correlate with batch.

- A: This persistent batch effect often indicates the presence of strong technical variation that standard normalization (e.g., BMIQ, dasen) cannot address. Proceed with this protocol:

Q2: When integrating public datasets from the BLUEPRINT and ENCODE consortia with my in-house WGBS data, Harmony/ComBat merges samples by cell type but removes the biological difference between normal and disease states. How can I preserve this?

- A: This is a classic over-correction issue. The protocol must explicitly preserve the

disease_statecovariate.- Create a Protected Covariate Model Matrix: In R, for ComBat, specify the

modelparameter. For example:model.matrix(~disease_state + age, data=pheno_data). Do not includebatchorconsortiumin this model. - Specify Batch Variable: Clearly define the

batchparameter as the consortium/lab source (e.g., BLUEPRINT, ENCODE, Lab_X). - Use a Refined Method: For WGBS data, consider using

RUVseqwith negative control genes (e.g., housekeeping genes with stable expression across your conditions) orHarmonywith a stronger theta parameter (e.g., theta=3) to increase dataset-specific clustering, ensuring thedisease_stateis declared as a grouping variable to protect.

- Create a Protected Covariate Model Matrix: In R, for ComBat, specify the

- A: This is a classic over-correction issue. The protocol must explicitly preserve the

Q3: My ATAC-seq peak counts from two large projects show batch effects that confound differential accessibility analysis. How do I correct count-based data?

- A: ATAC-seq count data requires methods designed for sequencing depth and over-dispersion.

- Data Transformation: Convert raw peak counts to Counts Per Million (CPM) or use variance stabilizing transformation (VST) from

DESeq2. - Apply Appropriate Correction: Use

removeBatchEffectfrom thelimmapackage on the transformed data, or employDESeq2's inherent multi-factor design. In DESeq2, create a design that accounts for both batch and condition:design(dds) <- ~ batch + condition. - Validation: Post-correction, plot a Multi-Dimensional Scaling (MDS) plot. Samples should cluster primarily by biological condition, not by project batch.

- Data Transformation: Convert raw peak counts to Counts Per Million (CPM) or use variance stabilizing transformation (VST) from

- A: ATAC-seq count data requires methods designed for sequencing depth and over-dispersion.

Key Metrics from Large-Scale Epigenomic Consortia (Batch Effect Insights)

Table 1: Impact of Batch Correction Methods on Data Integration Success Rates

| Consortium / Project | Primary Data Type | Uncorrected Data: % Variance from Batch (PC1) | Post-Correction Method | Corrected Data: % Variance from Batch (PC1) | Key Lesson |

|---|---|---|---|---|---|

| TCGA (Pan-Cancer) | DNA Methylation (450k) | 35-70% | ComBat (with protected covariates) | 5-15% | Preserving tissue-of-origin as a covariate is critical. |

| BLUEPRINT | WGBS, ChIP-seq | 25-50% | RUVs | 8-12% | Using empirical control features outperforms mean-centering. |

| PsychENCODE | Hi-C, Histone Mods | 40-60% | Harmony | 10-18% | Effective for complex, high-dimensional data integration. |

| IHEC (Cross-Consortium) | Multi-assay | 50-80% | LIGER (NMF-based) | 12-20% | Joint matrix factorization robust to assay-specific noise. |

Experimental Protocol: Validating Batch Correction in Multi-Site DNA Methylation Studies

Title: Stepwise Validation of Batch Effect Correction for EPIC Array Data.

Objective: To rigorously assess the effectiveness of batch correction in removing technical artifacts while preserving biological signal.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Raw Data Acquisition & Annotation: Download IDAT files and associated phenotype sheets from GEO (e.g., GSE123456). Annotate thoroughly:

Sentrix_ID,Slide,Array,Processing_Date,Lab. - Preprocessing & QC: Use

minfito load data. ApplypreprocessNoobfor background correction. Filter probes with detection p-value > 0.01. Remove poor-quality samples (median intensity < 10.5). Calculate beta values. - Initial Diagnostics: Perform PCA on 50,000 most variable CpGs. Color PCA plot by

LabandBiological_Group. Record % variance explained by PC1/PC2 and their correlation with known batch factors. - Batch Correction: Apply

ComBatfrom thesvapackage. Use model:model.matrix(~Biological_Group, data=pheno). Input: M-values (log2 transform of beta values). - Post-Correction Diagnostics: Repeat PCA. Generate:

- Boxplot: Compare distributions of control probe intensities (e.g., Type-I/II normalization probes) across batches before/after.

- Silhouette Plot: Calculate silhouette width to quantify mixing of batches within biological clusters.

- Biological Signal Preservation Test: Perform differential methylation analysis (DMP) using

limmaon uncorrected and corrected M-values. Compare the top 100 DMPs identified; >80% overlap indicates successful signal preservation.

Visualization: Experimental Workflow for Batch Correction Validation

Title: Batch Correction Validation Workflow

Visualization: Pathway of Batch Effect Sources in Multi-Consortium Data

Title: Sources of Variation in Epigenomic Data

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Batch-Conscious Epigenomic Analysis

| Item | Function & Relevance to Batch Correction |

|---|---|

| Reference Epigenomes (e.g., from IHEC) | Gold-standard datasets for alignment, normalization, and cross-lab comparison. |

| Commercial Control DNA (e.g., CpGenome Universal Methylated DNA) | Provides an unmethylated/methylated baseline for assay calibration across runs. |

| Bisulfite Conversion Kits (e.g., EZ DNA Methylation kits) | High-efficiency, consistent conversion is critical; kit lot is a major batch variable. |

| UMI Adapters for WGBS/ATAC-seq | Unique Molecular Identifiers to mitigate PCR duplicate bias, a sequencing-level batch effect. |

| Bioinformatics Pipelines (e.g., nf-core/methylseq, nf-core/atacseq) | Containerized, version-controlled pipelines ensure reproducibility across compute environments. |

| Interim Control Samples | Aliquots from a large, homogeneous biological sample run in every batch to directly monitor technical drift. |

Beyond Basic Correction: Troubleshooting, Optimization, and Advanced Strategies

This troubleshooting guide addresses common issues encountered during batch effect correction in epigenomic data analysis, framed within a broader research thesis on improving data integration for downstream biological interpretation.

FAQs & Troubleshooting Guides

Q1: How can I tell if my batch effect correction method has failed due to under-correction? A: Under-correction is diagnosed when significant batch-associated variation remains in the principal component analysis (PCA) or when sample clustering in dimensionality reduction plots (t-SNE, UMAP) is primarily driven by batch identity rather than biological condition. Quantitative metrics like the Percent Variance Explained (PVE) by batch before and after correction should show minimal reduction. A Persistent Batch Variance (PBV) score above a field-accepted threshold (e.g., >15%) often indicates under-correction.

Q2: What are the key signs of over-correction in my integrated epigenomic dataset? A: Over-correction, or the removal of biological signal along with batch noise, manifests as:

- The loss of separation between known biologically distinct groups (e.g., case vs. control).

- An implausibly high correlation between samples from different batches that exceeds within-batch correlations for the same condition.

- A drastic reduction in the number of differentially methylated regions (DMRs) or accessible chromatin regions (ACRs) identified post-correction, especially for known true positives.

Q3: Which statistical tests are most reliable for diagnosing residual batch effects? A: Use a combination of tests:

- Visual: PCA, boxplots of sample distances.

- Quantitative: ANOVA/PERMANOVA (using batch as factor on principal components), Silhouette Width (measuring batch-driven clustering), and the kBET (k-nearest neighbor batch effect test) rejection rate. A rejection rate > 0.1-0.2 suggests significant residual batch effects.

Q4: My corrected data shows good batch mixing but poor biological separation. Is this always over-correction? A: Not necessarily. It could indicate confounded study design, where batch is perfectly correlated with a key biological group. No computational method can disentangle this. Always review your experimental design matrix before concluding over-correction.

Table 1: Key Diagnostic Metrics for Batch Effect Correction

| Metric | Formula/Description | Under-Correction Indicator | Over-Correction Indicator | Ideal Target |

|---|---|---|---|---|

| PVE by Batch | (Var(PC~batch~) / Total Var(PC)) * 100 |

High post-correction value (>10%) | Very low value (~0%) | Low, but non-zero (e.g., <5%) |

| Silhouette Width | (b - a) / max(a, b) (a=mean intra-batch distance, b=mean nearest-inter-batch distance) |

Positive value (closer to 1) | Strong negative value | Value close to 0 |

| kBET Rejection Rate | Proportion of local neighborhoods rejecting the null hypothesis (batch labels are random) | Rate > 0.2 | Rate ~ 0, but biological signal also lost | Rate < 0.1 |

| Biological Signal Preservation | e.g., Log2 fold-change of known positive control DMRs | Maintained High | Drastically Reduced | Maintained High |

Experimental Protocols for Diagnosis

Protocol 1: Systematic Evaluation of Correction Performance

- Data Preparation: Generate a negative control dataset by shuffling biological labels within each batch. Retain a positive control set with known true biological differences.

- Apply Correction: Run your chosen batch correction method (e.g., ComBat, limma, Harmony, SVA) on both control sets and the real data.

- Assess:

- On Negative Control: Successful correction should not create artificial biological clusters. Use PCA to check.

- On Positive Control: Successful correction should preserve the known biological separation. Calculate the Adjusted Rand Index (ARI) between known and clustered labels.

- On Real Data: Use metrics from Table 1.

Protocol 2: Simulation-Based Calibration

- Simulate Data: Use packages like

sconeor custom scripts to simulate epigenomic data (e.g., DNA methylation beta values) with known, tunable magnitudes of batch effect and biological effect. - Apply Correction: Apply your pipeline to the simulated data.

- Quantify: Calculate the F1-score or AUROC for recovering the simulated true biological signal. Plot the trade-off curve between batch removal (measured by PBV) and biological signal recovery.

Visualization of Diagnostic Workflow

Title: Workflow for Diagnosing Batch Correction Pitfalls

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Batch Effect Diagnostic Experiments

| Item | Function in Diagnosis | Example/Specification |

|---|---|---|

| Reference Epigenome Standards | Provide a known biological signal across batches to assess preservation post-correction. | EpiSyte Spike-in Controls (methylation), SNAP-ChIP spike-in chromatin. |

| Inter-Batch Control Samples | Identical biological material split across all batches. Critical for distinguishing technical from biological variance. | Universal Human Methylation Reference (UHMR), pooled cell line aliquots. |

| Batch-Aware Analysis Software | Implement and compare correction algorithms and diagnostics. | R/Bioconductor: sva, limma, harmony. Python: scanpy, bbknn. |

| High-Throughput Sequencing Platform | Generate the primary epigenomic data (e.g., bisulfite-seq, ATAC-seq). Must track run-specific batch IDs. | Illumina NovaSeq, PacBio Sequel II (for long-read epigenomics). |

| Diagnostic Metric Scripts | Custom or packaged code to calculate Silhouette Width, kBET, PVE, etc. | R script bundles from published benchmarks (e.g., from Nature Methods batch effect reviews). |

Troubleshooting Guides & FAQs

Q1: After applying ComBat to my DNA methylation array data, I've lost the significant differential methylation I expected between my case and control groups. What went wrong? A: This is a classic sign of over-correction, where biological signal is erroneously modeled as batch noise. The issue often lies in the model design matrix.

- Cause: If your batch variable is perfectly or highly correlated with your biological condition (e.g., all Batch A samples are 'Case', all Batch B are 'Control'), ComBat will attribute the condition-specific variance to the batch and remove it.

- Solution:

- Use the

modargument: Include your condition of interest as a covariate in the model (mod = model.matrix(~condition, data=phenoData)). This explicitly tells ComBat to preserve variance associated with this variable. - Evaluate with PCA: Run PCA on the data both before and after correction. Plot PC1 vs. PC2. If the pre-correction plot shows separation primarily by batch, and the post-correction plot shows no separation by condition or batch, over-correction is likely.

- Alternative Methods: Consider methods like

HarmonyorRUVthat are more robust to this confounding, or use negative control genes (those not expected to vary by condition) to guide correction.

- Use the

Q2: My negative control probes (e.g., housekeeping genes, ERV probes) show drastic shifts after batch correction. Should I be concerned? A: Yes, this is a major red flag. Negative controls should remain stable if the correction is working properly.

- Cause: The correction algorithm is applying overly aggressive adjustments, distorting even invariant features. This indicates underlying model assumptions are violated or the batch effect is non-linear/complex.

- Solution:

- Diagnostic Plot: Create a boxplot of negative control probe values (or mean values per sample) before and after correction. They should be centered around the same mean.

- Parameter Adjustment: For ComBat, try setting

mean.only=TRUEif the bias is believed to be additive only. Forsva, adjust then.svparameter; too many surrogate variables (SVs) will remove biological signal. - Switch Algorithm: Try a non-parametric or mean-centering method like

limma::removeBatchEffectwith careful covariate specification, which may be less aggressive.

Q3: When using sva or RUV, how do I determine the optimal number of factors (k) or surrogate variables (SVs) to estimate? A: Choosing 'k' is critical—too few leaves residual batch noise, too many removes biology.

- Cause: The default heuristic methods (e.g.,

num.svinsva) can overestimate in complex datasets. - Solution - Empirical Protocol:

- Leek's Approach (

sva): Use thenum.svfunction with several different statistics (beorleek). Treat the result as an upper bound. - Elbow Plot (RUV/secular): Perform the correction across a range of k values (e.g., 1 to 10). For each k, calculate the mean variance of your negative control probes. Plot k against this variance. Choose the k at the "elbow" where variance plateaus.

- Benchmark with Spikes: If you have spike-in controls or validated positive/negative genomic regions, assess the recovery of known signals (e.g., differential analysis p-value distribution) across different k values.

- Leek's Approach (

Q4: My batch-corrected data fails downstream validation in qPCR or functional assays. How can I improve real-world concordance? A: This points to a disconnect between statistical correction and biological truth.

- Cause: Global correction methods apply uniform adjustments that may not be appropriate for all genomic regions or feature types.

- Solution:

- Subset-and-Correct: Perform batch correction within similar biological feature categories (e.g., promoters, enhancers, gene bodies) separately, as batch effects can manifest differently in each.

- Integrate Validation Data: Use paired qPCR data from a subset of samples and key targets to anchor the correction. Methods like

ConQuRfor microbiome data illustrate this principle. - Prioritize Robust Methods: Use methods that incorporate sample replicates or technical replicates if available. Reference-based integration (e.g., using a common reference sample in all batches) is the gold standard for preserving absolute signal magnitude.

Q5: What are the key metrics to quantitatively assess if my batch correction preserved biological signal? A: Rely on a multi-metric dashboard, not a single number.

| Metric | Target Outcome | Tool/Method |

|---|---|---|

| PCA Variance | Reduction in batch clustering in PC2/PC3, while biological condition separation in PC1 is maintained or enhanced. | plotPCA (DESeq2/limma) |

| Average Silhouette Width | Increases for biological condition labels, decreases for batch labels. | cluster::silhouette |

| Differential Analysis Yield | The number of significant findings (e.g., DMPs, DEGs) should be plausible and enriched for expected pathways. | limma, DSS, bumphunter |

| Negative Control Stability | Variance/Mean of control probes/genes should decrease or remain low. | IQR/Boxplot analysis |

| Positive Control Recovery | Known true positive signals should remain statistically significant with strong effect sizes. | Enrichment analysis on gold-standard sets |

Experimental Protocol: Batch Effect Diagnosis & Correction Validation

Title: Stepwise Protocol for Evaluating Batch Correction in Epigenomic Studies

Raw Data QC & Visualization:

- Generate PCA and t-SNE plots colored by Batch ID, Biological Condition, Processing Date, and Sample GC Bias.

- Calculate average correlation matrices within and between batches.

Application of Correction:

- Apply chosen correction method (e.g.,

ComBat,Harmony,RUV) with and without the biological condition as a covariate. - For sva: Estimate surrogate variables using the

svapackage with a full model (~condition) and a null model (~1). Use the estimated SVs as covariates in your downstream linear model.

- Apply chosen correction method (e.g.,

Post-Correction Diagnostic:

- Re-generate all visualizations from Step 1 on the corrected data.

- Perform hierarchical clustering on the top variable features; the dendrogram should cluster by biology, not batch.

- Run a statistical test for association (ANOVA) between principal components (PC1-PC5) and both batch and condition variables. Batch should no longer be significant.

Biological Signal Audit:

- Perform a standard differential analysis pipeline on the corrected data.

- Compare the resulting gene list or feature list to a pre-defined "gold standard" set from literature or pilot studies. Calculate precision and recall.

- Use pathway analysis (GO, KEGG) and assess if the enriched terms are biologically coherent.

Visualizations

Title: Decision Workflow for Batch Effect Correction

Title: Signal Partitioning in Batch Correction Models

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Batch Effect Management |

|---|---|

| Reference Standard Epigenomic DNA | A commercially available, well-characterized DNA sample (e.g., from a cell line) run as an inter-batch control to track technical variability and anchor corrections. |

| Spike-in Control Oligonucleotides | Synthetic, non-human DNA sequences with known methylation status or chromatin profiles spiked into samples pre-processing to monitor and correct for batch-specific efficiency losses. |

| Universal Human Methylation BeadChip Controls | The built-in control probes on platforms like the Illumina EPIC array, used to assess staining, extension, hybridization, and specific base extension steps across batches. |

| Duplicated Biological Replicates | The same biological sample (e.g., from a large tissue aliquot or cell culture) split and processed in different batches, providing the ground truth for evaluating correction success. |

| Batch-Aware Nucleic Acid Kits | Using a single, large lot of key reagents (bisulfite conversion kits, library prep kits) for an entire study to minimize reagent-driven batch effects. |